AI audio watermarking embeds hidden data into audio files to verify authenticity, protect copyrights, and combat misuse like deepfakes or voice cloning. However, tampering attacks – such as MP3 compression, re-recording, or AI-generated distortions – threaten its reliability. Research has shown that no watermarking method can fully withstand all attack types, with detection accuracy often dropping to unreliable levels. Advanced attacks like overwriting watermarks or using AI voice conversion models can reduce detection success rates to nearly zero.

Key Points:

- How It Works: Watermarks are embedded using neural networks, ensuring they remain inaudible but detectable.

- Attack Types:

  - Signal-level: Compression, noise, or pitch shifts.

  - Physical-level: Re-recording audio.

  - AI-driven: Voice cloning or text-to-speech models.

- Challenges: Under certain attacks, detection accuracy can fall to around 50%, which is no better than random guessing and leaves the watermark ineffective.

- Solutions: Tools like ScoreDetect integrate watermarking with blockchain and automated enforcement for better protection.

AI watermarking is effective under normal conditions but struggles against sophisticated tampering. Solutions must evolve to address these growing threats.

How AI Audio Watermarking Works

Embedding Hidden Watermarks

AI audio watermarking relies on two main components: a neural embedder that inserts a hidden signature and a detector that retrieves it for verification [3]. Essentially, it embeds a digital signature into the audio, making it invisible to listeners but recoverable through specialized tools.

The embedder uses neural networks to integrate the watermark data without affecting the audio’s quality. To keep the watermark undetectable, techniques like perceptual loss functions and adversarial training are employed. For instance, a discriminator is trained to differentiate between the original and watermarked versions, ensuring the altered audio sounds indistinguishable from the original [3][4].
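To make the embedder/detector split concrete, here is a minimal sketch that swaps the neural networks for a classical spread-spectrum scheme. It is a toy, not the method of any system cited in this article: the function names, the integer key, and the `strength` value are all illustrative assumptions, and nothing here models perceptual quality.

```python
import random


def carrier(key: int, n: int) -> list[float]:
    """Keyed pseudo-random +/-1 sequence; only the key holder can regenerate it."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(audio: list[float], bits: list[int], key: int, strength: float = 0.03) -> list[float]:
    """Embedder (toy): add the carrier at low amplitude, one contiguous segment per bit."""
    seg = len(audio) // len(bits)
    c = carrier(key, len(audio))
    out = list(audio)
    for i, bit in enumerate(bits):
        sign = 1.0 if bit else -1.0
        for j in range(i * seg, (i + 1) * seg):
            out[j] += strength * sign * c[j]
    return out


def detect(audio: list[float], n_bits: int, key: int) -> list[int]:
    """Detector (toy): correlate each segment with the carrier and read the sign."""
    seg = len(audio) // n_bits
    c = carrier(key, len(audio))
    bits = []
    for i in range(n_bits):
        corr = sum(audio[j] * c[j] for j in range(i * seg, (i + 1) * seg))
        bits.append(1 if corr > 0 else 0)
    return bits
```

A neural embedder replaces the fixed carrier with a learned perturbation, and a neural detector replaces the correlation with a learned classifier, but the division of labor is the same: one component hides the bits, the other recovers them.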

Various embedding techniques address specific challenges. Here are a few examples:

- Encoder-decoder architectures (e.g., AudioSeal): These embed redundant messages within latent spaces learned by neural networks [3].

- Invertible Neural Networks (e.g., WavMark): They treat watermarking as a reversible process, allowing for high-quality audio reconstruction [3].

- Frequency-domain methods (e.g., Timbre): These encode watermark data into specific frequency coefficients, which are often more resistant to distortions than time-domain approaches [3][4].

"Audio watermarking, as a proactive defense, can embed secret messages into audio for copyright protection and source verification."

– Lingfeng Yao et al., arXiv [3]

These advanced embedding methods form the backbone of AI-powered watermarking systems.

Key Features of AI-Driven Watermarking

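AI-driven watermarking must strike a balance between three critical aspects: robustness, fidelity, and capacity (how many bits the watermark can carry) [5]. Improving one usually comes at the expense of another. The list below covers robustness and fidelity, along with localized detection, a related capability of AI-based systems.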

- Robustness: This measures the watermark’s ability to endure unintentional changes, such as MP3 compression or format conversions. Leading methods like AudioSeal and WavMark have shown near-perfect bit recovery rates under normal conditions [3]. While robustness focuses on resisting accidental alterations, security addresses protection against intentional attacks or attempts to remove the watermark [3].

- Fidelity: Watermarked audio must sound identical to the original. Metrics like PESQ and ViSQOL are used to evaluate this [4]. For example, major platforms like Netflix and Disney+ use forensic watermarking to track pre-release leaks without compromising the viewer’s experience [6].

- Localized Detection: AI watermarking can pinpoint tampered segments rather than providing a simple pass/fail integrity check [1]. Some systems even include fragile watermarking, where the watermark becomes unreadable if the content is altered [6].
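Robustness results in this article are reported as bit error rates, so it helps to see how that number is computed. The sketch below also includes a signal-to-noise ratio as a crude fidelity check. SNR is only a stand-in chosen for illustration: PESQ and ViSQOL are perceptual models that need dedicated tooling, and the function names here are assumptions.

```python
import math


def bit_error_rate(sent: list[int], received: list[int]) -> float:
    """Fraction of watermark bits the detector got wrong (0.0 is perfect)."""
    if not sent or len(sent) != len(received):
        raise ValueError("bit sequences must be non-empty and the same length")
    errors = sum(s != r for s, r in zip(sent, received))
    return errors / len(sent)


def snr_db(original: list[float], watermarked: list[float]) -> float:
    """Signal-to-noise ratio in dB, treating the watermark as the noise."""
    signal = sum(x * x for x in original)
    noise = sum((x - y) ** 2 for x, y in zip(original, watermarked))
    if noise == 0:
        return math.inf  # identical signals: nothing was embedded
    return 10 * math.log10(signal / noise)
```

A higher SNR means a quieter watermark, but it does not guarantee inaudibility, which is why published evaluations rely on perceptual metrics instead.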

These features showcase the precision and adaptability of AI-driven watermarking in protecting audio and generative content.



Common Tampering Attacks on Audio Content

These attacks directly threaten the reliability of AI-based watermarking systems, making them a critical concern for content security.

Overwriting Attacks

Overwriting attacks involve replacing original watermarks with fake ones. In these cases, attackers use adversarial optimization to alter the audio so that the detection system reads a counterfeit watermark instead of the original [7]. This undermines the legitimacy of the content’s ownership.

These attacks vary based on how much the attacker knows about the watermarking system. In white-box attacks, the attacker has complete knowledge of the system. Gray-box attacks occur when the attacker has partial information, and black-box attacks involve no access to internal details [8]. Research shows that overwriting attacks can achieve nearly a 100% success rate against advanced neural audio watermarking systems [8].

"The proposed overwriting attacks can effectively compromise existing watermarking schemes across various settings and achieve a nearly 100% attack success rate." – Lingfeng Yao et al. [8]

Overwriting is especially effective when attackers aim to fraudulently claim intellectual property by embedding a fake watermark [8].
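The idea behind overwriting can be illustrated with a toy linear watermark. This sketch is not the adversarial optimization used against neural systems in [8]: it assumes a simple spread-spectrum scheme, a white-box attacker who knows the key, and arbitrary strength values, all chosen purely for illustration.

```python
import math
import random


def carrier(key: int, n: int) -> list[float]:
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(audio: list[float], bits: list[int], key: int, strength: float) -> list[float]:
    """Toy embedder: add a keyed +/-1 carrier, one segment per bit."""
    seg = len(audio) // len(bits)
    c = carrier(key, len(audio))
    out = list(audio)
    for j in range(seg * len(bits)):
        sign = 1.0 if bits[j // seg] else -1.0
        out[j] += strength * sign * c[j]
    return out


def detect(audio: list[float], n_bits: int, key: int) -> list[int]:
    """Toy detector: sign of the per-segment correlation with the carrier."""
    seg = len(audio) // n_bits
    c = carrier(key, len(audio))
    return [
        1 if sum(audio[j] * c[j] for j in range(i * seg, (i + 1) * seg)) > 0 else 0
        for i in range(n_bits)
    ]


# The owner marks the audio; the attacker re-embeds a forged message more strongly.
host = [0.3 * math.sin(2 * math.pi * 440 * t / 16000) for t in range(32000)]
owner_bits = [1, 0, 1, 1, 0, 0, 1, 0]
forged_bits = [0, 1, 0, 0, 1, 1, 0, 1]
key = 1234  # assumed known to the attacker in this white-box toy

marked = embed(host, owner_bits, key, strength=0.03)
overwritten = embed(marked, forged_bits, key, strength=0.09)
recovered = detect(overwritten, len(owner_bits), key)  # reads the forged message
```

The detector has no way to tell which message came first. It reports whichever signal dominates, which is what makes a forged ownership claim possible.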

While overwriting focuses on replacing watermarks, other methods aim to remove or subtly alter them.

Removal and Forgery Methods

Attackers often dismantle existing watermarks using techniques that distort the audio at different levels: signal, physical, or AI-driven.

Signal-level attacks modify the digital audio directly. For example:

- MP3 compression at 64 kbps increases WavMark’s Bit Error Rate (BER) to 24.12%.

- Pitch shifting by +100 cents reduces Timbre’s detection accuracy to just 6.37% [1][7].

- Adding pink noise raises WavMark’s BER to 28.59% and AudioSeal’s to 10.89%.

- Cropping over 90% of an audio file typically drops detection accuracy to 49–57% [1][7].
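Two of these signal-level attacks are easy to reproduce when stress-testing a detector. The sketch below is a simplified stand-in: it adds white Gaussian noise rather than pink noise (which needs spectral shaping), and it does not attempt MP3 compression, which requires a real codec. The function names and parameters are assumptions.

```python
import math
import random


def add_white_noise(audio: list[float], snr_db: float, seed: int = 0) -> list[float]:
    """Add Gaussian noise scaled so the result has roughly the requested SNR."""
    rng = random.Random(seed)
    power = sum(x * x for x in audio) / len(audio)
    sigma = math.sqrt(power / 10 ** (snr_db / 10))
    return [x + rng.gauss(0.0, sigma) for x in audio]


def crop(audio: list[float], keep_fraction: float) -> list[float]:
    """Keep only the leading fraction of the samples, discarding the rest."""
    if not 0 < keep_fraction <= 1:
        raise ValueError("keep_fraction must be in (0, 1]")
    keep = max(1, int(len(audio) * keep_fraction))
    return audio[:keep]
```

Running a detector on `crop(audio, 0.1)` corresponds to the "cropping over 90%" case above: only a tenth of the watermarked samples remain for the detector to work with.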

Physical-level attacks, often exploiting the "analog hole", involve re-recording watermarked audio through speakers and microphones. This process, done over distances of 1.6 to 16.4 feet (0.5 to 5 meters), introduces environmental noise and hardware distortions that can obscure the watermark [1].

AI-induced distortions represent some of the most advanced methods. For instance:

- Voice conversion models like AdaIN-VC and FragmentVC can lower detection accuracy to around 50%, making it as unreliable as random guessing [1].

- Text-to-Speech systems like Tacotron2 and FastSpeech2 generate audio that mimics the original speaker’s voice but excludes the watermark [1].

- Processing watermarked audio through a neural vocoder (used in voice cloning) can raise AudioSeal’s BER to 39.01% and WavMark’s to 50.00% [7].

AI-driven techniques are particularly effective because they can remove watermarks while preserving the audio’s quality, making them increasingly popular among attackers.
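Why does roughly 50% count as total failure? A quick simulation shows that a detector guessing each bit by coin flip lands at about the same figure. This is a sketch under simple assumptions (independent, uniformly random bits; the function name and defaults are illustrative), not a reproduction of any cited experiment.

```python
import random


def mean_bit_accuracy(n_messages: int = 2000, n_bits: int = 16, seed: int = 0) -> float:
    """Average share of bits a coin-flip 'detector' gets right."""
    rng = random.Random(seed)
    correct = 0
    for _ in range(n_messages):
        truth = [rng.getrandbits(1) for _ in range(n_bits)]
        guess = [rng.getrandbits(1) for _ in range(n_bits)]
        correct += sum(t == g for t, g in zip(truth, guess))
    return correct / (n_messages * n_bits)
```

The result hovers around 0.5, so a watermark whose accuracy or BER sits near 50% after an attack carries essentially no recoverable information.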

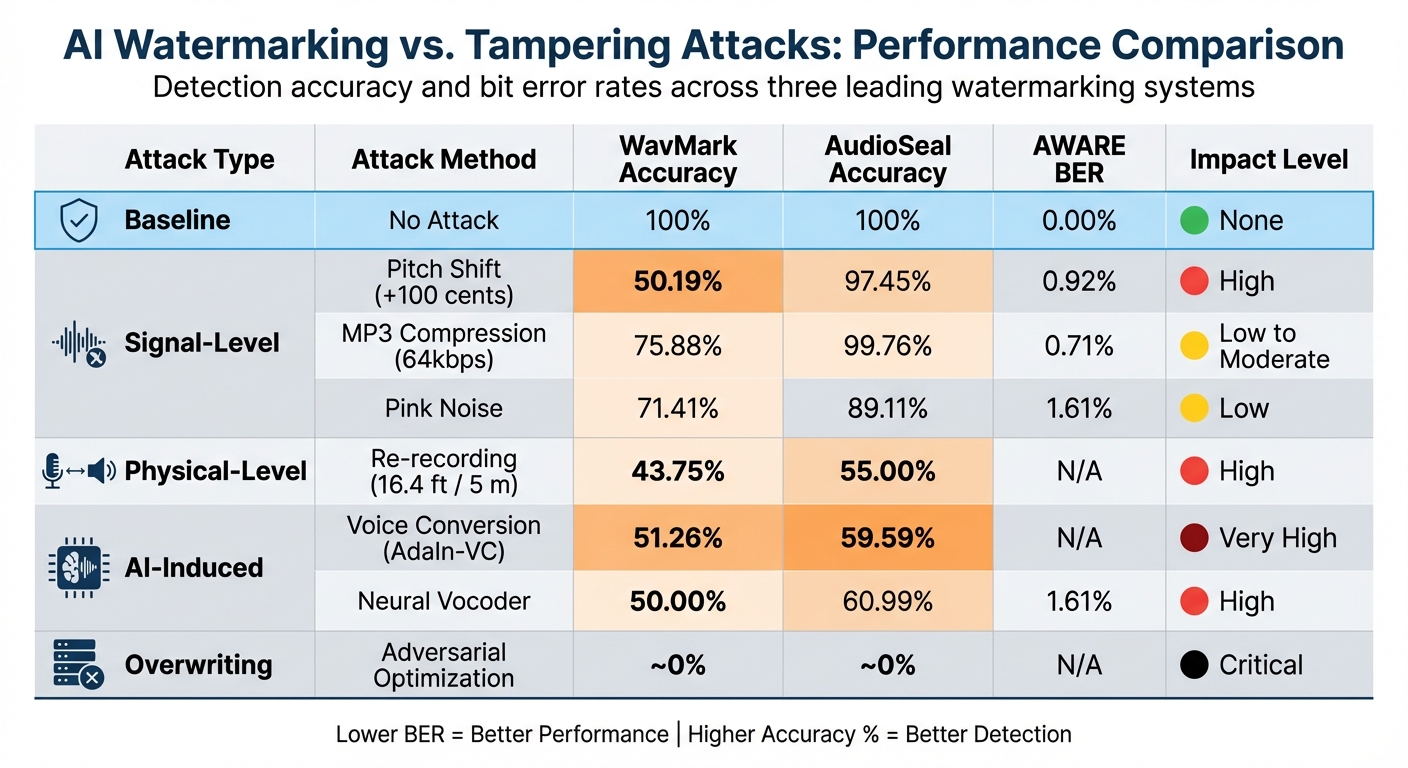

AI Watermarking vs. Tampering Attacks

AI Audio Watermarking Performance Under Different Tampering Attacks

AI watermarking systems are effective against everyday distortions but struggle when faced with advanced tampering techniques. This section examines how these systems perform under various conditions, highlighting both their strengths and vulnerabilities.

Strengths of AI Watermarking

AI watermarking systems are designed to handle routine distortions with impressive reliability. For instance, the AWARE system achieved a 0.00% Bit Error Rate (BER) under low-pass and high-pass filtering, while AudioSeal recorded a 14.58% BER under the same conditions. Similarly, when tested against pink noise corruption, AWARE maintained a 1.61% BER, outperforming AudioSeal’s 10.89% and WavMark’s 28.59% [7].

These systems also ensure that embedding watermarks doesn’t compromise audio quality. AudioSeal, for example, achieves a PESQ score of 4.32, close to the top of the PESQ scale at roughly 4.5. This is accomplished through perceptual budgeting, where louder sections of audio allow for larger modifications, while quieter parts are adjusted more subtly to keep watermarks imperceptible [7].
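Perceptual budgeting can be approximated very roughly by scaling the watermark to each frame's loudness. The sketch below uses frame RMS as the loudness measure. That choice, the frame size, and the `ratio` parameter are illustrative assumptions, far simpler than the psychoacoustic models real systems use.

```python
import math


def budgeted_watermark(audio: list[float], pattern: list[float],
                       frame: int = 256, ratio: float = 0.05) -> list[float]:
    """Add `pattern` so each frame's perturbation is `ratio` times that frame's RMS."""
    if len(audio) != len(pattern):
        raise ValueError("audio and pattern must be the same length")
    out = []
    for start in range(0, len(audio), frame):
        chunk = audio[start:start + frame]
        rms = math.sqrt(sum(x * x for x in chunk) / len(chunk))
        marks = pattern[start:start + frame]
        # Loud frames get a larger perturbation; silent frames get none.
        out.extend(x + ratio * rms * p for x, p in zip(chunk, marks))
    return out
```

With `ratio=0.05`, a frame at amplitude 0.5 is perturbed by 0.025 while a frame at 0.01 is perturbed by only 0.0005, so the watermark stays proportionally small everywhere.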

Advanced features like AWARE’s Bitwise Readout Head (BRH) further enhance robustness. By aggregating evidence across the entire audio file, this architecture ensures reliable watermark detection even after operations like cropping or splicing, which typically disrupt synchronization [7].
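The general idea of aggregating evidence across a file can be sketched as soft voting. This is not AWARE's Bitwise Readout Head, whose internals are not described here. It is a hypothetical simplification that assumes the same message is readable from every chunk and that each chunk yields one signed confidence score per bit.

```python
def aggregate_bits(chunk_scores: list[list[float]]) -> list[int]:
    """Sum per-bit soft scores over all chunks, then threshold at zero."""
    if not chunk_scores:
        raise ValueError("need at least one chunk")
    n_bits = len(chunk_scores[0])
    totals = [0.0] * n_bits
    for scores in chunk_scores:
        if len(scores) != n_bits:
            raise ValueError("every chunk must score the same number of bits")
        for i, score in enumerate(scores):
            totals[i] += score
    return [1 if total > 0 else 0 for total in totals]


# Two clean chunks outvote one damaged chunk whose scores point the wrong way.
scores = [[0.9, -0.8, 0.7], [0.8, -0.9, 0.6], [-0.2, 0.1, -0.3]]
message = aggregate_bits(scores)
```

Because the decision pools every chunk, losing or corrupting a few of them weakens the totals without necessarily flipping any bit.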

"Watermarking has re-emerged as a practical mechanism to label both synthetic and authentic content to support provenance, traceability, and downstream moderation." – Kosta Pavlović, Lead Author, AWARE Research [7]

Weaknesses of AI Watermarking

Despite their strengths, AI watermarking systems are vulnerable to targeted attacks. Overwriting attacks, for example, can completely replace legitimate watermarks with forged ones, reducing detection accuracy to nearly 0% [8].

AI-driven distortions also pose a significant challenge, and even a simple signal-level edit can be just as damaging. When watermarked audio passes through a voice conversion model like AdaIN-VC, WavMark’s accuracy falls to 51.26%. Pitch shifting by +100 cents has a similar effect: WavMark drops to 50.19%, and Timbre plummets to just 6.37% [1][7].

Physical-level attacks, such as exploiting the "analog hole", further highlight these weaknesses. While WavMark achieves 97.50% accuracy at a recording distance of 1.6 ft (0.5 m), its performance drops drastically to 43.75% at 16.4 ft (5 m) [1]. In a broader study involving 25 watermarking schemes and 22 attack types, researchers concluded that no system could withstand all real-world distortions [1].

Comparison Table: AI Watermarking vs. Tampering Attacks

Below is a summary of how different watermarking systems perform under various attack scenarios.

| Attack Type | Attack Method | WavMark Accuracy | AudioSeal Accuracy | AWARE BER | Impact Level |
|---|---|---|---|---|---|
| Baseline | No Attack | 100% | 100% | 0.00% | None |
| Signal-Level | Pitch Shift (+100 cents) [7] | 50.19% | 97.45% | 0.92% | High |
| Signal-Level | MP3 Compression (64kbps) [7] | 75.88% | 99.76% | 0.71% | Low to Moderate |
| Signal-Level | Pink Noise [7] | 71.41% | 89.11% | 1.61% | Low |
| Physical-Level | Re-recording (16.4 ft / 5 m) [1] | 43.75% | 55.00% | N/A | High |
| AI-Induced | Voice Conversion (AdaIN-VC) [1] | 51.26% | 59.59% | N/A | Very High |
| AI-Induced | Neural Vocoder [7] | 50.00% | 60.99% | 1.61% | High |
| Overwriting | Adversarial Optimization [8] | ~0% | ~0% | N/A | Critical |

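Note: Lower Bit Error Rates (BER) indicate stronger performance, while higher accuracy percentages reflect better detection resilience under attack conditions. Where this article quotes a BER for WavMark or AudioSeal, the accuracy shown in the table is 100% minus that BER (for example, WavMark’s 24.12% BER under MP3 compression corresponds to 75.88% accuracy).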

Future Directions and Enterprise Solutions

Tamper-Resistant Watermarking Technologies

A review of 25 watermarking schemes revealed a troubling reality: none of them withstood all 22 types of removal attacks tested, highlighting significant weaknesses in current approaches [1]. To stay ahead of these threats, future watermarking technologies must tackle three key categories of attacks:

- Signal-level distortions: Examples include MP3 compression, which can degrade or erase watermarks.

- Physical-level attacks: Methods like re-recording audio to strip embedded markers.

- AI-driven manipulations: Techniques such as voice conversion and text-to-speech forgeries that bypass traditional watermarking.

Another growing concern is adaptive attacks, where generative models are fine-tuned specifically to remove watermarks. These attacks expose the shortcomings of static watermarking systems that lack the ability to evolve after deployment [2]. Researchers also uncovered eight new types of black-box attacks that have proven highly effective against today’s watermarking strategies [1].

"This persistent vulnerability emphasizes the urgent need for next-generation watermarking."

The challenges presented by these vulnerabilities underscore the need for enterprise solutions that go beyond the limitations of traditional watermarking.

ScoreDetect for Enterprise Protection

To address these advanced threats, enterprise solutions have evolved to offer more comprehensive protection. While traditional AI watermarking often struggles against sophisticated tampering methods, tools like ScoreDetect bring a multi-layered defense system to the table.

ScoreDetect combines several technologies into a unified solution:

- Invisible watermarking: Non-intrusive yet effective, it prevents unauthorized use without affecting content quality.

- Blockchain timestamping: Provides a secure and immutable record of ownership.

- Automated takedown notifications: Ensures swift removal of infringed content, streamlining enforcement.

Unlike academic methods that primarily focus on detection, ScoreDetect offers a complete protection workflow. It not only identifies unauthorized use with a 95% success rate but also provides quantitative proof of infringement. Furthermore, its automated delisting system achieves a takedown rate exceeding 96%, making it a powerful tool for enterprises looking to safeguard their content.

Conclusion

AI audio watermarking plays a key role in protecting content, but it faces significant challenges when confronted with advanced tampering techniques [1]. While these technologies are effective at embedding hidden markers for verifying ownership and protecting intellectual property, a review of 25 watermarking schemes revealed that none could withstand all forms of real-world attacks [1].

The vulnerabilities fall into three main categories: signal-level distortions (like MP3 compression), physical-level attacks (such as re-recording), and AI-driven manipulations that evade traditional defenses [1]. Re-recording alone can cut WavMark’s detection accuracy from 97.50% at about 1.6 feet (0.5 m) to just 43.75% at 16.4 feet (5 m) [1]. In some cases, detection accuracy drops so low it becomes comparable to random guessing [1]. Additionally, overwriting attacks have been shown to succeed almost 100% of the time [8].

These limitations make it clear that traditional watermarking approaches alone aren’t enough. Solutions like ScoreDetect tackle these issues by combining invisible watermarking with blockchain-based timestamps and automated enforcement. This multi-layered approach delivers a 95% success rate in detecting unauthorized content and achieves a takedown rate of over 96%. Such comprehensive systems demonstrate a clear advantage over standalone watermarking technologies.

Ultimately, the future of audio content protection lies in integrated solutions that can adapt to ever-evolving threats. As tampering methods grow more sophisticated, the gap between academic research and practical enterprise security continues to widen. For organizations determined to protect their digital assets, robust, multi-faceted strategies are no longer optional – they’re essential.

FAQs

Can audio watermarks survive voice cloning or text-to-speech?

Often they cannot. Although audio watermarks are built to endure distortions, many methods struggle against AI-driven techniques: voice conversion models can push detection accuracy down to around 50%, and text-to-speech systems can regenerate a speaker’s voice without the watermark at all. Research indicates that no existing watermarking technology completely resists all AI-driven alterations, which undermines its reliability against cloning or TTS.

What tampering attacks break audio watermark detection most often?

Audio watermark detection often faces challenges from tampering attacks like signal compression, noise addition, and temporal changes such as cuts or desynchronization. These tactics distort the audio signal, reducing the effectiveness of detection. On top of that, adversarial edits and techniques like time-stretching can disrupt the alignment of watermarks, making detection even more difficult. Research indicates that no existing watermarking method can withstand all these types of distortions completely.

How can ScoreDetect help if the watermark gets removed or overwritten?

ScoreDetect employs advanced AI methods to identify and verify watermarks, even in cases where tampering has occurred. Its detection system meticulously examines content for identifiable signatures and integrates blockchain technology to establish unchangeable proof of ownership. By accurately uncovering and analyzing unauthorized use and supporting automated takedown processes, ScoreDetect ensures content remains protected – even when watermarks are altered or erased.