Invisible digital watermarks are widely used to protect ownership of media like images, audio, and videos. However, attackers are increasingly using noise injection to remove or manipulate these watermarks, undermining their effectiveness. Traditional detection methods struggle to handle these attacks, but AI-powered systems are stepping in to address these challenges.

Key Takeaways:

- Noise injection attacks come in two forms: evasion (removing watermarks) and spoofing (adding fake watermarks to genuine content).

- AI-based detectors analyze watermark patterns and content features, overcoming the limitations of traditional rule-based systems.

- Techniques like diffusion inversion for images, auditory masking for audio, and spatio-temporal analysis for video enable precise detection even after transformations like cropping, compression, or editing.

- Advanced tools like Google’s SynthID, Meta’s AudioSeal, and InCyan’s Tectus offer scalable solutions for embedding and detecting watermarks.

AI-driven detection systems are critical for safeguarding digital assets, but they face challenges from adversarial attacks, high error rates, and evolving manipulation techniques. A multi-layered approach combining watermarking, AI detection, and blockchain verification is becoming the standard for protecting media in today’s digital landscape.

AI Methods for Detecting Noise in Images, Audio, and Video

AI techniques for detecting noise in media focus on separating authentic signals from any type of interference. These approaches often rely on statistical fingerprinting, where randomness in content generation is subtly influenced to leave hidden patterns that can later be identified [7].

Detecting Noise in Watermarked Images

For images, AI-based detectors use diffusion inversion and frequency-domain analysis to verify watermarks. Inverse diffusion reconstructs the initial noise behind an image, and the system compares that reconstruction to known noise patterns using cosine similarity [4]. If the similarity score surpasses 0.5, the watermark is validated. In contrast, random or tampered noise typically falls far outside the expected range – 5.3 to 9.4 standard deviations from the mean [4].
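The comparison step can be sketched in a few lines of Python. This is a minimal illustration rather than the method from [4]: the helper names (`gaussian_noise`, `is_watermarked`), the seeds, and the simulated inversion error are all assumptions. Only the cosine-similarity test and the 0.5 threshold come from the text above.

```python
import math
import random

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def gaussian_noise(seed, n=4096):
    """Hypothetical stand-in for the initial noise tied to a seed."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]

def is_watermarked(reconstructed, reference, threshold=0.5):
    """Validate the watermark when similarity exceeds the threshold."""
    return cosine_similarity(reconstructed, reference) > threshold

reference = gaussian_noise(seed=1234)

# Assumption: diffusion inversion is imperfect, so the reconstructed
# noise is modeled as the reference plus a small Gaussian error.
error_rng = random.Random(99)
reconstructed = [x + error_rng.gauss(0.0, 0.5) for x in reference]

# Noise from an unrelated seed stands in for non-watermarked content.
unrelated = gaussian_noise(seed=5678)

print(is_watermarked(reconstructed, reference))  # matching noise
print(is_watermarked(unrelated, reference))      # unrelated noise
```

For unrelated Gaussian vectors of length n, cosine similarity concentrates near zero with a spread of roughly 1/sqrt(n), which is why a score above 0.5 is so unlikely to occur by chance.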

Techniques like Tree-Ring watermarking embed circular patterns in the Fourier transform of the initial Gaussian noise, which makes the watermark resistant to spatial changes. In December 2024, researchers at New York University introduced WIND (Watermarking with Indistinguishable and Robust Noise), a method that uses Fourier-based patterns to efficiently sift through large datasets of noise seeds [4]. These innovations allow reliable watermark detection even after modifications like cropping, rotation, or compression, with false-positive rates as low as p < 10⁻¹⁹ [4] [5].

By 2025, Google DeepMind’s SynthID had successfully watermarked over 10 billion images and video frames across various Google platforms [5].

Detecting Noise in Audio Watermarks

Audio watermarking requires a different approach due to the unique constraints of sound perception. AI algorithms hide signals in parts of the spectrum masked by louder sounds, a technique known as auditory masking. Detection systems then analyze waveforms to locate these embedded signals while filtering out natural variations or deliberate noise [5] [6].

Meta AI’s AudioSeal, launched in 2024, marked a major step forward. It uses a generator-detector framework and a perceptual loss function to ensure watermarks remain below the threshold of human hearing [5] [6]. This system counters noise injection by leveraging auditory masking, preserving the watermark’s integrity even under adversarial conditions.

"The idea behind statistical watermarking is to put a thumb on the scale of randomness during generation so it leaves a fingerprint that can be detected later."

- Siddarth Srinivasan, Postdoctoral Fellow at Harvard University [7].

What sets AudioSeal apart is its sample-level localization. According to Wikipedia:

"AudioSeal is the first audio watermark to provide sample-level localization."

This capability allows precise identification of watermarked segments in long audio streams, even after editing, compression (e.g., MP3), or time-stretching attacks [5].
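To make sample-level localization concrete, the sketch below turns per-sample watermark probabilities into contiguous segments. It does not use AudioSeal's actual API: the function name `watermarked_segments`, the 0.5 threshold, and the example probabilities are assumptions, and a real detector would supply the probabilities.

```python
def watermarked_segments(probs, threshold=0.5, min_len=1):
    """Group consecutive samples whose probability meets the threshold.

    Returns a list of (start, end) index pairs, end exclusive.
    Runs shorter than min_len samples are discarded as likely noise.
    """
    segments = []
    start = None
    for i, p in enumerate(probs):
        if p >= threshold and start is None:
            start = i                      # a watermarked run begins
        elif p < threshold and start is not None:
            if i - start >= min_len:
                segments.append((start, i))
            start = None                   # the run has ended
    # Close a run that extends to the end of the stream.
    if start is not None and len(probs) - start >= min_len:
        segments.append((start, len(probs)))
    return segments

# Hypothetical per-sample probabilities from a detector.
probs = [0.1] * 3 + [0.9] * 4 + [0.2] * 2 + [0.8]

print(watermarked_segments(probs))             # [(3, 7), (9, 10)]
print(watermarked_segments(probs, min_len=2))  # [(3, 7)]
```

Dividing the indices by the sample rate converts each segment to timestamps, which is how a long recording could be mapped to its watermarked passages.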

Detecting Noise in Video Watermarks

Detecting noise in video is particularly challenging due to the need to account for both spatial and temporal variations. Watermarks in video must survive a range of attacks, from frame-dropping to advanced editing techniques. AI systems analyze frame-level consistency and temporal patterns to detect tampering, identifying irregularities that suggest noise injection or watermark removal [1] [2].
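One simple way to picture a frame-level consistency check is to score every frame and flag the ones that deviate sharply from the rest. The sketch below is an assumption-laden illustration, not how any system cited here works: it presumes a detector has already produced one watermark score per frame, and the function name `inconsistent_frames`, the median-based rule, and the example scores are all hypothetical.

```python
from statistics import median

def inconsistent_frames(frame_scores, k=3.0):
    """Return indices of frames whose score is an outlier.

    Uses the median absolute deviation (MAD), which is robust to the
    outliers being searched for. The factor 1.4826 scales the MAD so it
    is comparable to a standard deviation for Gaussian data.
    """
    med = median(frame_scores)
    deviations = [abs(s - med) for s in frame_scores]
    mad = median(deviations)
    scale = 1.4826 * mad if mad > 0 else 1e-9
    return [i for i, d in enumerate(deviations) if d > k * scale]

# Hypothetical per-frame watermark scores: frames 4 and 5 drop sharply,
# as might happen if those frames were re-rendered or spliced in.
scores = [0.91, 0.93, 0.92, 0.90, 0.35, 0.38, 0.92, 0.94]

print(inconsistent_frames(scores))  # [4, 5]
```

A production detector would combine a signal like this with spatial and motion features; the point here is only that temporal irregularity can be measured directly.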

Dual-branch architectures like DuB3D-FF use spatio-temporal data and optical flow to differentiate between genuine artifacts and watermarks [2]. These models are designed to withstand challenges like frame averaging and pixel "hallucination", which aim to remove watermarks while preserving visual quality [1] [2].

Transformer-based models like MViT V2 and VideoSwin-T have proven highly effective, though their detection accuracy depends on watermark patterns, with performance dropping by 2–8 percentage points when noise removal tools like DiffuEraser are used [2]. To counter this, newer systems employ watermark-aware training strategies, teaching detectors to rely on authentic content features rather than just watermark patterns. This combined spatial-temporal analysis strengthens detection against sophisticated attacks [2].

For businesses needing robust protection across various media formats, platforms like InCyan’s Tectus offer blind watermarking solutions. These systems embed invisible ownership signals that survive transformations and attacks, enabling effective copyright enforcement without compromising user experience.

Challenges in AI-Based Noise Detection Systems

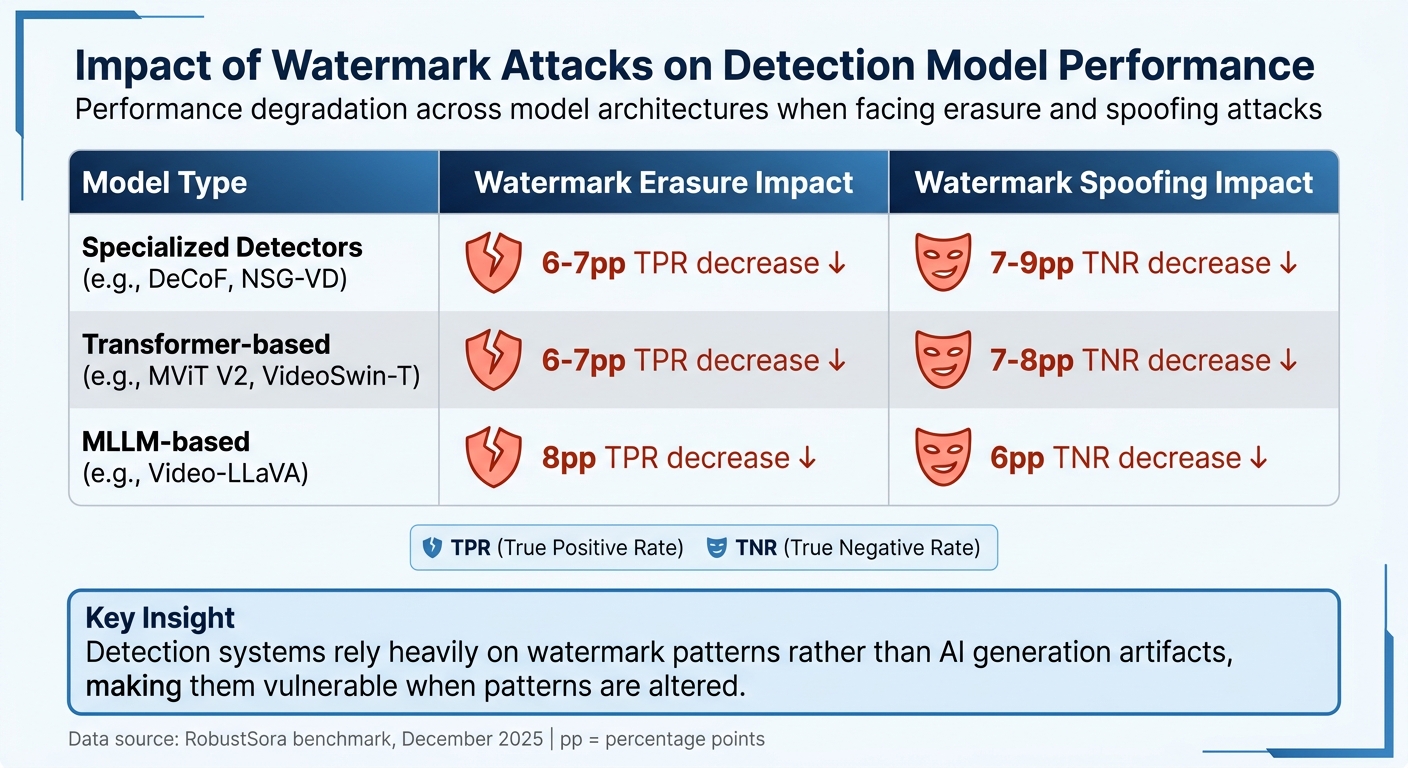

Impact of Watermark Attacks on AI Detection Model Performance

AI detection systems, no matter how advanced, face notable hurdles when it comes to protecting watermarked content. These challenges arise from technical limitations and targeted adversarial tactics aimed at exploiting weaknesses in detection algorithms.

Adversarial Attacks on Detection Systems

One of the toughest challenges comes from adversaries who develop methods to strip watermarks while maintaining content quality. A prime example is diffusion purification, which uses diffusion models to introduce noise to an image and then reverse the process. This method effectively erases watermark signals, dropping detection rates from nearly 100% to what amounts to random guessing [5].

Another significant threat is model substitution, where attackers use an alternate, unwatermarked AI model to recreate content with the same meaning, bypassing the original watermark entirely [5] [9]. Additionally, adversaries can exploit APIs to steal watermarking rules for under $50. This enables large-scale attacks, including fake watermark creation (spoofing) and watermark removal (scrubbing) [5].

In April 2026, researchers Gautier Evennou (IMATAG) and Ewa Kijak (University of Rennes) demonstrated that while watermark removal is possible, it leaves behind detectable traces. They explained:

"The act of removal leaves distinct statistical trails – high-frequency adversarial noise, characteristic diffusion artifacts, or both – that push the image off the manifold of natural images." [10]

Their forensic detector, built with a ConvNextTiny-v2 backbone, achieved an impressive Area Under the Curve (AUC) above 0.988 in detecting removal attempts, even after challenges like JPEG compression or resizing [10]. At a 10⁻³ false positive rate, the system achieved a 99.8% true positive rate for detecting WMForger attacks [10].

These adversarial strategies make it harder to set reliable detection thresholds, which are crucial for system effectiveness.

Error Rates and Detection Thresholds

Determining the right detection threshold is tricky. If the threshold is set too low, legitimate human-created content could be mistakenly flagged as AI-generated (false positives). If it’s set too high, AI-generated content could slip through undetected (false negatives) [5] [7]. In high-stakes applications such as protecting educational content or detecting spam, even a 1% false positive rate is often considered unacceptable [7].
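The trade-off is easy to see by sweeping a threshold over detector scores. The example below is a minimal sketch with made-up scores; the function name `error_rates` and both score lists are assumptions chosen only to show how the two error types move in opposite directions.

```python
def error_rates(clean_scores, generated_scores, threshold):
    """Return (false_positive_rate, false_negative_rate) at a threshold.

    Content is flagged when its score is strictly above the threshold.
    """
    false_positives = sum(s > threshold for s in clean_scores)
    false_negatives = sum(s <= threshold for s in generated_scores)
    return (false_positives / len(clean_scores),
            false_negatives / len(generated_scores))

# Hypothetical detector scores (higher means "more likely flagged").
clean_scores = [0.02, 0.10, 0.15, 0.30, 0.45]      # human-created content
generated_scores = [0.40, 0.55, 0.70, 0.85, 0.95]  # AI-generated content

for threshold in (0.2, 0.5, 0.8):
    fpr, fnr = error_rates(clean_scores, generated_scores, threshold)
    print(f"threshold={threshold:.1f}  FPR={fpr:.1f}  FNR={fnr:.1f}")
# threshold=0.2  FPR=0.4  FNR=0.0
# threshold=0.5  FPR=0.0  FNR=0.2
# threshold=0.8  FPR=0.0  FNR=0.6
```

Raising the threshold removes false positives but lets more generated content through, so the operating point has to be chosen against the cost of each error in the target application.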

The December 2025 RobustSora benchmark highlighted how watermark manipulation can undermine detection accuracy across various architectures. For example, when researchers used DiffuEraser to remove watermarks from Sora 2 and KLing videos, detectors like DeCoF and NSG-VD saw their performance drop by 6-7 percentage points [2]. Transformer-based models faced similar setbacks, with True Positive Rates (TPR) falling by 6-7 percentage points after watermark removal, and True Negative Rates (TNR) declining by 7-8 percentage points when authentic content was spoofed with fake watermarks [2].

| Model Type | Watermark Erasure Impact | Watermark Spoofing Impact |
|---|---|---|
| Specialized Detectors (e.g., DeCoF) | 6-7pp TPR decrease [2] | 7-9pp TNR decrease [2] |
| Transformer-based (e.g., MViT V2) | 6-7pp TPR decrease [2] | 7-8pp TNR decrease [2] |
| MLLM-based (e.g., Video-LLaVA) | 8pp TPR decrease [2] | 6pp TNR decrease [2] |

These results highlight a key issue: many detection systems rely heavily on watermark patterns rather than the actual artifacts of AI generation [2]. When those patterns are altered, detection accuracy takes a hit. As Hany Farid from the University of California, Berkeley, explains:

"Watermarking is not an elimination strategy. It’s a mitigation strategy." [8]

For organizations looking to counter these evolving threats, InCyan’s Tectus offers a promising solution. Their blind watermarking embeds invisible ownership signals designed to withstand advanced removal efforts. When paired with forensic detection tools, such multi-layered defenses offer a stronger shield against both technical evasion and adversarial attacks.

Enterprise Tools for AI-Driven Content Protection

ScoreDetect: AI-Powered Digital Asset Protection

As threats like adversarial attacks become more advanced, businesses need protection tools that surpass basic watermarking. Enter ScoreDetect from InCyan – a platform designed to safeguard digital assets using blockchain timestamping and workflow automation. Instead of storing actual files, it captures a checksum of your content and records it on the blockchain, creating a verifiable chain of custody. Plus, it integrates with over 6,000 web apps via Zapier, enabling automated protection workflows.
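The checksum idea can be illustrated with Python's standard library. This is a generic sketch, not ScoreDetect's implementation or API: the choice of SHA-256, the record fields, and the function names are assumptions, and the step that anchors the record on a blockchain is left out.

```python
import hashlib
from datetime import datetime, timezone

def content_checksum(data: bytes) -> str:
    """Hex digest that identifies the content without storing it."""
    return hashlib.sha256(data).hexdigest()

def build_record(data: bytes, owner: str) -> dict:
    """Hypothetical record that a timestamping service could anchor."""
    return {
        "sha256": content_checksum(data),
        "owner": owner,
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }

def matches_record(data: bytes, record: dict) -> bool:
    """Check whether a file is byte-for-byte the one that was recorded."""
    return content_checksum(data) == record["sha256"]

original = b"example article text"
record = build_record(original, owner="example-owner")

print(matches_record(original, record))         # True
print(matches_record(original + b"!", record))  # False
```

Because any change to the bytes changes the digest, a checksum proves that an exact file existed at a given time, but it cannot recognize a re-encoded or edited copy. That gap is what invisible watermarking is meant to cover.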

ScoreDetect also features a WordPress plugin that timestamps content to establish ownership and enhance SEO. With a 95% success rate in combating web scraping and a 96% takedown rate for infringing content, this tool supports the entire protection process: prevention, detection, analysis, and removal.

Blockchain timestamping alone isn’t enough. For deeper protection, InCyan’s Tectus offers invisible watermarking, embedding ownership signals into images, videos, and audio. This scalable solution has been applied to billions of media assets successfully [5]. To complement this, the Idem platform uses a multimodal database to identify ownership, even when only 10% of the original content remains intact.

For businesses battling search-based piracy, Indago de-indexes unauthorized listings from search engines in under 60 minutes, cutting off traffic at its source. Additionally, TorrentWatch monitors the BitTorrent ecosystem, while Txtmatch provides forensic-level text matching for written content. Together, these tools create a multi-layered defense against content misuse.

Building a Multi-Layered Protection Strategy

As adversarial tactics like noise injection grow more sophisticated, relying on just one protection method is risky. Visible logos can be cropped, metadata can be stripped by social media platforms, and cryptographically signed credentials can be lost during re-encoding. A multi-layered defense ensures that if one layer fails, others remain effective.

Start by conducting a cross-functional review to map out how content flows through your systems and identify high-value assets. Define a "robustness profile" that outlines the transformations your watermarks need to endure – such as compression from social media, AI-driven edits, or format changes.

Next, deploy a four-stage verification pipeline to analyze suspect assets. This pipeline should: ingest the content, use AI detectors to extract watermarks, compare findings against reference databases, and deliver confidence-scored results. Many modern systems use two-stage detection frameworks like WIND. In Stage 1, Fourier patterns narrow down the search space; in Stage 2, similarity-based searches analyze reconstructed noise [4]. For audio, tools like AudioSeal provide per-sample probability scores to detect manipulated segments [5].
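The two-stage idea can be sketched on toy data. The code below is a heavily simplified illustration and not the WIND algorithm from [4]: it uses 1-D noise vectors, marks each group with a single cosine at a reserved Fourier bin, and every name, seed, bin, and strength value is an assumption made for the example.

```python
import math
import random

N = 512  # length of each toy 1-D noise vector
GROUP_BINS = {0: 5, 1: 11, 2: 23}  # hypothetical group -> Fourier bin
GROUP_SEEDS = {0: [101, 102], 1: [201, 202], 2: [301, 302]}  # toy database

def seed_noise(seed):
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(N)]

def embed_group(noise, group, strength=0.5):
    """Add the group's Fourier pattern (one cosine) to the noise."""
    k = GROUP_BINS[group]
    return [x + strength * math.cos(2 * math.pi * k * t / N)
            for t, x in enumerate(noise)]

def bin_magnitude(signal, k):
    """Magnitude of a single DFT coefficient."""
    re = sum(x * math.cos(2 * math.pi * k * t / N) for t, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * k * t / N) for t, x in enumerate(signal))
    return math.hypot(re, im)

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) *
                  math.sqrt(sum(y * y for y in b)))

def detect(reconstructed):
    # Stage 1: the strongest reserved Fourier bin selects the group.
    group = max(GROUP_BINS,
                key=lambda g: bin_magnitude(reconstructed, GROUP_BINS[g]))
    # Stage 2: similarity search over that group's seeds only.
    score, seed = max(
        (cosine_similarity(reconstructed, embed_group(seed_noise(s), group)), s)
        for s in GROUP_SEEDS[group]
    )
    return group, seed, score

# Simulate an asset generated from seed 202 (group 1), then recovered
# with some reconstruction error.
error_rng = random.Random(7)
original = embed_group(seed_noise(202), group=1)
reconstructed = [x + error_rng.gauss(0.0, 0.3) for x in original]

group, seed, score = detect(reconstructed)
print(group, seed, round(score, 2))
```

The benefit of the split is cost: Stage 1 inspects a handful of Fourier coefficients, so the expensive similarity search in Stage 2 runs against one group instead of the whole seed database. The returned similarity can serve as the confidence score in the final pipeline stage.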

Maintaining a secure chain of custody is also critical. Cryptographically verifiable ledgers document how evidence is collected and analyzed, making it defensible in legal or regulatory scenarios. As Nikhil John explains:

"Invisible digital watermarking is not a silver bullet, but it is a powerful control point in a world of frictionless copying and increasingly sophisticated synthetic media." [3]

The industry is now leaning toward a hybrid approach to provenance. While C2PA metadata offers detailed tracking when available, invisible watermarking acts as a reliable fallback. This dual approach addresses the fragility of metadata while ensuring watermarks remain as durable proof of ownership [5].

Conclusion

AI-powered noise detection is transforming how organizations safeguard watermarked content. Tools like WIND and SynthID showcase the advancements in watermarking techniques and their ability to function effectively on a large scale [4][5]. For instance, Google’s SynthID has embedded watermarks in over 10 billion images and video frames, demonstrating its scalability and durability [5].

But embedding watermarks is only part of the equation – detecting them in adversarial scenarios is where the real challenge lies. Advanced two-stage detection frameworks, which first group data using Fourier analysis and then perform precise database matching, make it possible to identify watermarks even under heavy noise [4]. The odds of mistaking random noise for a specific watermark are less than 10⁻¹⁹, ensuring highly accurate identification of assets [4]. In the audio domain, Meta’s AudioSeal takes it a step further by providing sample-level localization, pinpointing exactly which segments have been AI-generated or tampered with [5].

These technical achievements pave the way for enterprise-grade solutions. For example, ScoreDetect by InCyan goes beyond detection by incorporating blockchain timestamping and automated workflows. This has led to a 95% success rate in preventing web scraping and a 96% takedown rate for infringing content. For more resilient protection, InCyan’s Tectus uses invisible watermarking that withstands transformations, while Idem can identify ownership even if only 10% of the original asset remains intact.

Nikhil John from InCyan emphasizes the importance of these advancements:

"Invisible watermarking becomes a strategic control point for authenticity, licensing, and provenance across images, video, audio, and documents" [3].

The industry is increasingly adopting hybrid approaches that combine C2PA metadata with invisible watermarks. This ensures that protections remain intact, even when metadata is stripped by social media platforms [5].

Staying ahead of sophisticated attacks requires a multi-layered defense strategy – one that integrates in-generation watermarking, AI-driven detection, blockchain verification, and automated enforcement. This comprehensive approach is becoming the standard for protecting digital assets in an evolving landscape.

FAQs

How can AI identify a real watermark from injected noise?

AI identifies genuine watermarks by examining the unique patterns and features embedded during the watermarking process. Detection models look for subtle signals, such as frequency irregularities or energy patterns, that set real watermarks apart from random noise. Frequency-domain analysis isolates the high-frequency signals associated with watermarks, while blind detection models analyze statistical and spectral characteristics to differentiate authentic watermarks from tampering or artificially added noise.

What edits most often disrupt watermark detection?

Edits designed to interfere with watermark detection often use sophisticated strategies aimed at the watermark’s underlying signals or characteristics. These can include pixel-level modifications, such as applying filters or making subtle adjustments to the image. Another approach is semantic-level attacks, like Next Frame Prediction, which modifies the content itself to erase watermarks. For videos, tampering with noise patterns or disrupting temporal consistency can undermine watermarking systems, especially those that depend on consistent noise templates or distributions.

How do watermarking and blockchain timestamping work together?

Watermarking involves embedding subtle, invisible markers into digital content – such as images, audio, or video – that help confirm its origin and ownership. These markers are crafted to be resistant to alterations, ensuring the content’s integrity remains intact. Blockchain timestamping takes this a step further by generating an unchangeable record that verifies the content’s existence at a specific point in time. When combined, watermarking and blockchain timestamping create a powerful system for safeguarding content, offering both authenticity and traceability – even if the original watermark is tampered with or removed.