Invisible watermarks protect digital content without visibly altering it. Unlike visible watermarks, they embed ownership data directly into an image’s pixels or into the latent space of a generative model, which makes them harder to spot and remove. They help verify where content came from and counter false attribution, but they must now also withstand AI optimization attacks.

Key points:

- What They Are: Invisible markers embedded in digital media, undetectable to the naked eye.

- Why They Matter: Protect ownership, trace distribution, and prevent misuse in the age of generative AI.

- Challenges: Balancing invisibility with durability, surviving transformations like resizing or compression, and resisting AI-based removal attacks.

- Threats: AI tools like WMCopier can remove or forge watermarks, leading to false attributions.

- Solutions: Multi-layered watermarks (image-space and latent-space), adaptive algorithms, and blockchain verification together provide stronger protection.

Invisible watermarks are evolving to counter increasingly advanced threats, making them vital for safeguarding digital assets in an AI-driven world.

Technical Challenges in Watermark Design

Creating effective invisible watermarks is no easy task. The difficulty lies in ensuring that watermarks remain intact under various attacks while staying completely invisible. Push the durability too far, and you risk creating visible artifacts. But if the watermark isn’t strong enough, it might not survive common processes like resizing or compression.

Balancing Invisibility and Durability

At the heart of watermark design is the fidelity-robustness trade-off. Strengthening a watermark so that it resists removal usually means embedding a stronger signal, and a stronger signal is more likely to cause visible degradation. It is also easier for removal systems to locate and target.
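The trade-off can be made concrete with a toy example. The sketch below is not how OptMark or any production system works; it assumes a simple additive pseudo-random pattern, a flattened list of pixel values as the "image", and Gaussian noise as a crude proxy for lossy processing. All function names and parameters are illustrative.

```python
import math
import random


def make_pattern(n, seed):
    """Pseudo-random +/-1 pattern derived from a secret seed (the hypothetical key)."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(pixels, pattern, strength):
    """Additive embedding: shift each pixel by +/-strength, clipped to [0, 255]."""
    return [min(255.0, max(0.0, p + strength * w)) for p, w in zip(pixels, pattern)]


def psnr(original, modified):
    """Peak signal-to-noise ratio in dB; higher means a less visible change."""
    mse = sum((a - b) ** 2 for a, b in zip(original, modified)) / len(original)
    return float("inf") if mse == 0 else 10 * math.log10(255.0 ** 2 / mse)


def detect_score(pixels, pattern):
    """Correlation of the mean-removed pixels with the secret pattern."""
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * w for p, w in zip(pixels, pattern)) / len(pixels)


def tradeoff(strengths, n=4096, noise_std=10.0):
    """Return (strength, PSNR in dB, detection score after noise) per strength."""
    rng = random.Random(0)
    image = [rng.uniform(60, 200) for _ in range(n)]  # stand-in for a flattened image
    pattern = make_pattern(n, seed=1234)
    rows = []
    for s in strengths:
        marked = embed(image, pattern, s)
        noisy = [p + rng.gauss(0, noise_std) for p in marked]  # crude proxy for lossy processing
        rows.append((s, psnr(image, marked), detect_score(noisy, pattern)))
    return rows


for s, quality_db, score in tradeoff([0.5, 2.0, 8.0]):
    print(f"strength={s:>4}  PSNR={quality_db:5.1f} dB  score={score:5.2f}")
```

As the strength rises, the detection score after noise rises with it, while PSNR (a rough measure of how invisible the change is) falls. Real systems replace the fixed strength with learned or optimized embeddings, but they face the same tension.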

To address this, advanced systems like OptMark use a two-stage approach. First, they embed a "structural watermark" early in the AI generation process to withstand generative attacks. Then, a "detail watermark" is added later to handle transformations like resizing or compression. This method uses tailored regularization to find a balance between signal strength and image quality.

"OptMark strategically inserts a structural watermark early to resist generative attacks and a detail watermark late to withstand image transformations, with tailored regularization terms to preserve image quality and ensure imperceptibility." – Jiazheng Xing et al., Authors [3]

Another innovative approach is dual-modality watermarking, which adds an extra layer of defense. By incorporating multiple types of watermarks, attackers face a tougher challenge. If one type is removed, the other may still remain, making complete removal far more difficult without compromising the overall image quality [1].

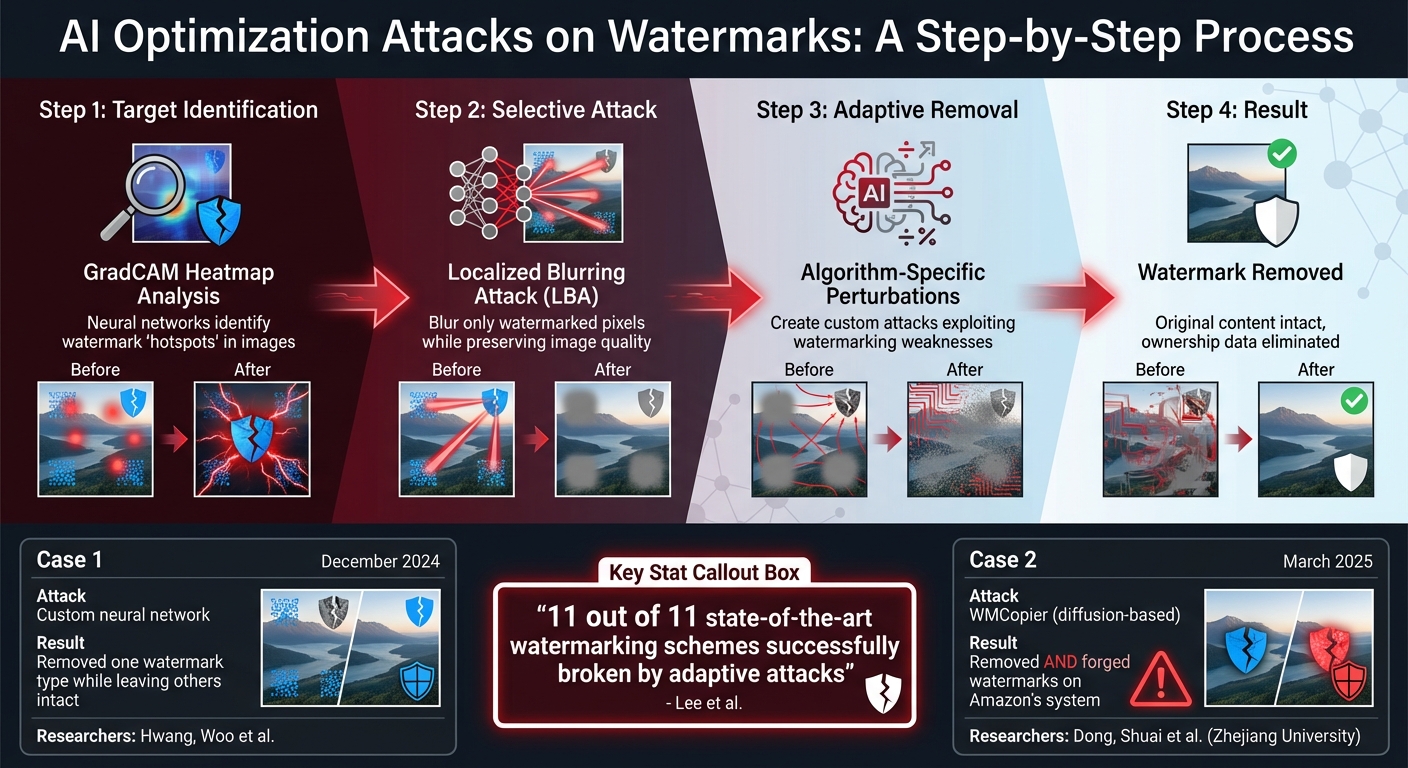

Modern watermarking systems also have to contend with localized attacks. For instance, attackers use GradCAM heatmaps to identify and blur specific "hotspots" where watermarks are embedded. Techniques like Localized Blurring Attacks (LBA) exploit these vulnerabilities by neutralizing watermarks while minimizing visible quality loss [1]. This forces designers to avoid creating obvious embedding patterns that attackers can easily target.

These challenges highlight the constant need for advanced defenses to stay ahead of AI-driven optimization attacks.

Surviving Content Transformations

Beyond attacks, watermarks also need to endure everyday content transformations. Compression, format conversion, cropping, and color adjustments are just a few examples of changes that can jeopardize watermark survival. Social media platforms, cloud storage providers, and content management systems often apply these transformations automatically, adding another layer of complexity.

Watermarking systems fall into two main categories: zero-bit and multi-bit systems. Zero-bit watermarks simply verify whether a watermark exists, while multi-bit systems can store detailed metadata like user IDs or distribution channels. However, multi-bit systems are generally more vulnerable to routine transformations [3].
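To illustrate the difference, here is a minimal sketch of both styles built on the same correlation idea. It assumes a zero-mean list of numbers as a stand-in for image features, integer keys, and one pseudo-random carrier per payload bit. These are illustrative choices, not the design of any system cited here.

```python
import random


def pattern(n, seed):
    """Pseudo-random +/-1 carrier derived from an integer seed."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def correlate(signal, carrier):
    return sum(s * w for s, w in zip(signal, carrier)) / len(signal)


def embed_zero_bit(signal, key, strength=3.0):
    carrier = pattern(len(signal), key)
    return [s + strength * w for s, w in zip(signal, carrier)]


def detect_zero_bit(signal, key, threshold=1.5):
    """Answers only one question: is the keyed watermark present?"""
    return correlate(signal, pattern(len(signal), key)) > threshold


def embed_multi_bit(signal, key, bits, strength=3.0):
    """One carrier per payload bit; the carrier's sign encodes the bit."""
    out = list(signal)
    for i, bit in enumerate(bits):
        carrier = pattern(len(signal), key * 1000 + i)
        sign = 1.0 if bit else -1.0
        out = [o + sign * strength * w for o, w in zip(out, carrier)]
    return out


def decode_multi_bit(signal, key, n_bits):
    """Recover each bit from the sign of its carrier's correlation."""
    n = len(signal)
    return [1 if correlate(signal, pattern(n, key * 1000 + i)) > 0 else 0
            for i in range(n_bits)]


rng = random.Random(7)
host = [rng.gauss(0.0, 20.0) for _ in range(8192)]  # zero-mean stand-in for image features
payload = [1, 0, 1, 1, 0, 0, 1, 0]                  # e.g. a hypothetical 8-bit user ID

print(detect_zero_bit(embed_zero_bit(host, key=42), key=42))
print(detect_zero_bit(host, key=42))
print(decode_multi_bit(embed_multi_bit(host, key=42, bits=payload), key=42, n_bits=8))
```

In this toy setup, every extra payload bit adds another carrier and therefore more distortion. Holding total distortion fixed leaves less strength per bit, which is one intuition for why multi-bit schemes tend to be more fragile under routine transformations.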

Routine transformations are not the only risk. In 2025, Ziping Dong and colleagues from Zhejiang University demonstrated how even sophisticated systems could be tricked. Using an attack method called "WMCopier", they embedded target watermarks into non-watermarked images with a high success rate. This wasn’t just about removing watermarks – it was about forgery, where attackers copy a watermark onto other content to create false attribution [2].

"Forging traceable watermarks onto illicit content leads to false attribution, potentially harming the reputation and legal standing of Gen-AI service providers." – Ziping Dong et al., Authors [2]

To counter these threats, designers are turning to layered defense strategies. By embedding watermarks in multiple spaces, they make it much harder to remove all traces without degrading image quality. Additionally, optimization-based embedding methods now use adjoint gradient techniques to significantly reduce memory usage, making these solutions more scalable [3].

Understanding how watermarks can be compromised through transformations is key to building systems that can withstand even the most advanced neural network-based attacks.

How AI Optimization Attacks Remove Invisible Watermarks

As invisible watermarking technologies advance, so do the methods designed to compromise them. The same neural networks that drive generative AI are now being used to strip watermarks meant to protect digital content. These attacks go beyond basic editing techniques, employing machine learning to surgically remove identifiers while leaving the original content intact.

How Neural Networks Remove Watermarks

Modern attacks rely on specialized networks to target and eliminate specific watermark types with precision. For instance, an attacker might train a neural network to remove a latent-space watermark while leaving an image-space watermark untouched, making detection more challenging [1].

One notable method is the Localized Blurring Attack (LBA). Unlike uniform blurring, this approach uses GradCAM heatmaps to identify the exact areas where watermarks are embedded. By blurring only those targeted pixels, LBA neutralizes the watermark while maintaining the overall image quality [1].
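For anyone testing their own watermark's robustness, the core idea is easy to reproduce in miniature. The sketch below assumes the heatmap is already available as a grid of values between 0 and 1 (in the cited work it comes from GradCAM, which is not implemented here) and uses a simple 3x3 box blur. Images are plain nested lists, and all names are illustrative.

```python
def blur_pixel(img, r, c):
    """Mean of the 3x3 neighbourhood around (r, c), truncated at the borders."""
    h, w = len(img), len(img[0])
    window = [img[i][j]
              for i in range(max(0, r - 1), min(h, r + 2))
              for j in range(max(0, c - 1), min(w, c + 2))]
    return sum(window) / len(window)


def localized_blur(img, heatmap, threshold=0.5):
    """Blur only pixels whose heatmap value reaches the threshold."""
    return [[blur_pixel(img, r, c) if heatmap[r][c] >= threshold else img[r][c]
             for c in range(len(img[0]))]
            for r in range(len(img))]


def mean_abs_change(a, b):
    """Average per-pixel change, a rough proxy for visible degradation."""
    total = sum(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))
    return total / (len(a) * len(a[0]))


# 6x6 checkerboard stand-in image; the heatmap flags only the top-left 2x2 block.
image = [[float(200 * ((r + c) % 2)) for c in range(6)] for r in range(6)]
heatmap = [[1.0 if r < 2 and c < 2 else 0.0 for c in range(6)] for r in range(6)]
everywhere = [[1.0] * 6 for _ in range(6)]

local = localized_blur(image, heatmap)
uniform = localized_blur(image, everywhere)
print(mean_abs_change(image, local), mean_abs_change(image, uniform))
```

Because only the flagged region is touched, the average change to the image is smaller than with a uniform blur. A watermark concentrated in a few predictable hotspots is therefore cheap to attack.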

"LBA degrades the image significantly less compared to uniform blurring of the entire image." – Dongjun Hwang, Researcher [1]

Adaptive attacks take this concept even further. By understanding the underlying watermarking algorithm, attackers can create perturbations specifically designed to exploit its weaknesses. Research led by Suyoung Lee and colleagues analyzed 11 prominent watermarking schemes and found that adaptive attacks could defeat the ownership proof in every one of them [4].

"State-of-the-art trigger set-based watermarking algorithms do not achieve their designed goal of proving ownership… the proposed [adaptive] attacks effectively break all of the 11 watermarking schemes." – Suyoung Lee et al., Researchers [4]

These techniques are no longer purely theoretical: several research teams have demonstrated them against working watermarking systems.

Documented Cases of AI-Based Attacks

Published demonstrations of these attacks highlight their growing sophistication.

- December 2024: Researchers Dongjun Hwang, Sungwon Woo, and their team developed a custom neural network capable of attacking multiple watermarking methods. Their system successfully removed one type of watermark during the decoding process while leaving others intact [1].

- March 2025: A team from Zhejiang University, including Ziping Dong and Chao Shuai, introduced WMCopier, a tool that not only removes watermarks but can also forge them onto other images. Using unconditional diffusion models, WMCopier bypassed both open-source and closed-source systems, including Amazon’s watermarking system [2].

"WMCopier effectively deceives both open-source and closed-source watermark systems (e.g., Amazon’s system), achieving a significantly higher success rate than existing methods." – Ziping Dong, Researcher [2]

What makes WMCopier particularly dangerous is its ability to operate without prior knowledge of the target watermarking algorithm. Instead, it learns the statistical patterns of a watermark through shallow inversion processes, allowing it to manipulate or even forge watermarks. This capability introduces new risks, such as enabling false attributions that could harm the credibility and legal standing of legitimate creators [2].

Defense Methods Against AI Attacks

Developers are now employing layered defenses that combine techniques like multi-modal embedding, adaptive algorithms, and blockchain verification to counter AI-driven attacks.

Semantic-Preserving Watermarks

The most effective watermarks are designed to endure AI attacks by embedding themselves in ways that are difficult to remove without damaging the content. A key strategy is multi-modal embedding, which applies watermarks in both the image-space and latent-space. With this dual-layer method, even if a neural network strips one watermark, the other can remain intact for verification. This makes watermarks more resilient against advanced AI optimizations [1].

Research conducted by Dongjun Hwang and his team highlights the effectiveness of modality-preserving decoders – specialized networks that protect one watermarking layer while processing another. These decoders can significantly enhance detection performance, even against AI-driven attempts to remove watermarks. They are also designed to resist localized blurring attacks, which often use GradCAM heatmaps to target specific pixels where watermarks are embedded.

"Invisible watermarking methods act as identifiers of generated content, embedding image- and latent-space messages that are robust to many forms of perturbations."

– Dongjun Hwang et al., Researchers [1]

Latent-space integration takes this a step further by embedding messages directly within generative model representations, making the watermarks more resistant to typical image manipulations [1].
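A quick back-of-the-envelope calculation shows why layering helps, and what it costs. The sketch below assumes content counts as watermarked when any layer's detector fires and that the detectors fail independently. Both are simplifying assumptions, and the rates are made-up illustrative numbers rather than measurements from the cited research.

```python
def combined_rates(miss_rates, false_positive_rates):
    """Rates for an 'any detector fires' rule, assuming independent detectors."""
    miss = 1.0
    for m in miss_rates:
        miss *= m                      # every layer must fail for a miss
    clean = 1.0
    for f in false_positive_rates:
        clean *= (1.0 - f)             # every layer must stay silent on clean content
    return miss, 1.0 - clean


# Hypothetical post-attack numbers for an image-space and a latent-space detector.
miss, fpr = combined_rates(miss_rates=[0.30, 0.20], false_positive_rates=[0.01, 0.01])
print(f"combined miss rate: {miss:.4f}, combined false-positive rate: {fpr:.4f}")
```

Under these assumptions, two layers that an attack defeats 30% and 20% of the time are both defeated only 6% of the time, while the chance of a false alarm on clean content roughly doubles, from 1% to about 2%. An attack that targets both layers at once breaks the independence assumption, so the real gain may be smaller.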

Adaptive Watermarking Algorithms

Static watermarks are relatively easy for AI to target and remove. In contrast, adaptive algorithms adjust their embedding strategy based on the content itself. These algorithms use GradCAM-based sensitivity analysis to pinpoint critical regions in an image where watermarks are most effective. Instead of spreading protection evenly across the image, they concentrate embedding strength in these key areas. This targeted approach makes it harder for attackers to remove the watermark without compromising image quality [1].
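A content-adaptive strength map can be sketched in a few lines. This example swaps GradCAM for a much simpler stand-in, local standard deviation in a 3x3 window, so it illustrates the idea of concentrating strength rather than the method from the cited research. The `floor` parameter and the function names are assumptions made for this sketch.

```python
def local_std(img, r, c):
    """Standard deviation of the 3x3 neighbourhood around (r, c)."""
    h, w = len(img), len(img[0])
    window = [img[i][j]
              for i in range(max(0, r - 1), min(h, r + 2))
              for j in range(max(0, c - 1), min(w, c + 2))]
    mean = sum(window) / len(window)
    return (sum((v - mean) ** 2 for v in window) / len(window)) ** 0.5


def strength_map(img, base_strength, floor=0.25):
    """Per-pixel strengths that average to base_strength but favour busy regions."""
    h, w = len(img), len(img[0])
    # The floor keeps flat regions from receiving exactly zero strength.
    raw = [[floor + local_std(img, r, c) for c in range(w)] for r in range(h)]
    mean_raw = sum(sum(row) for row in raw) / (h * w)
    return [[base_strength * v / mean_raw for v in row] for row in raw]


# Left half flat, right half textured: a toy stand-in for a real image.
image = [[100.0 if c < 4 else 100.0 + 40.0 * ((r + c) % 2) for c in range(8)]
         for r in range(8)]
strengths = strength_map(image, base_strength=2.0)
print(round(strengths[2][0], 3), round(strengths[2][6], 3))
```

The map keeps the average embedding strength fixed while shifting most of it into the textured half, where changes are harder to see and harder to blur away without visible damage.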

Beyond algorithmic flexibility, an immutable verification layer adds another line of defense: it preserves evidence of ownership even if attackers remove the watermark itself.

Using Blockchain for Added Security

Blockchain technology provides an extra layer of security by creating an immutable verification system that operates independently of the watermark. By recording a cryptographic hash of the watermarked content on a blockchain ledger, creators obtain a tamper-evident, timestamped record that supports their ownership claim. This record remains intact even if the watermark is removed or altered by an attacker, preserving the original creator’s rights and timestamp [2].

For example, ScoreDetect uses blockchain-enhanced protection to safeguard content. The system captures a checksum of the watermarked material and stores it on an unchangeable ledger, ensuring a tamper-proof ownership record without storing the actual digital assets. Even if the watermark is compromised, the blockchain record remains valid. Additionally, ScoreDetect’s WordPress plugin tracks every update to an article, reinforcing copyright protection while also improving SEO.
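The underlying mechanism can be sketched with Python's standard library. This is not ScoreDetect's API or a real blockchain. `HashLedger` is a hypothetical in-memory stand-in that stores only SHA-256 checksums and chains each entry to the previous one, so later edits to the record become detectable.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class HashLedger:
    """In-memory, append-only hash chain; a stand-in for a real blockchain."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _entry_hash(body: dict) -> str:
        return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))

    def register(self, content: bytes, owner: str, timestamp: int) -> dict:
        body = {
            "content_hash": sha256_hex(content),  # only the checksum is stored
            "owner": owner,
            "timestamp": timestamp,
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else "0" * 64,
        }
        entry = dict(body, entry_hash=self._entry_hash(body))
        self.entries.append(entry)
        return entry

    def find(self, content: bytes):
        """Return the entry whose checksum matches these exact bytes, if any."""
        digest = sha256_hex(content)
        return next((e for e in self.entries if e["content_hash"] == digest), None)

    def is_intact(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body["prev_hash"] != prev or self._entry_hash(body) != entry["entry_hash"]:
                return False
            prev = entry["entry_hash"]
        return True


ledger = HashLedger()
original = b"watermarked image bytes"
ledger.register(original, owner="alice", timestamp=1700000000)
ledger.register(b"another asset", owner="bob", timestamp=1700000100)

print(ledger.find(original)["owner"])
print(ledger.find(b"stripped copy bytes"))
print(ledger.is_intact())
```

One caveat is worth keeping in mind. A cryptographic hash matches only the exact registered bytes, so a copy with the watermark stripped will not match the ledger entry. What the record preserves is evidence that the owner held the original file at the recorded time, which can then be compared against a disputed copy by other means.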

Conclusion

Invisible watermarking is caught in a quiet yet intense battle as attackers turn neural networks toward sophisticated removal methods like Deep Image Prior and localized blurring attacks. To stay ahead, advancements in multi-modal embedding, adaptive algorithms, and blockchain verification are becoming increasingly critical [5]. The challenge lies in creating watermarks that are both undetectable to the human eye and resilient enough to withstand AI-driven attacks. Modern approaches address this by layering multiple watermarking techniques, so that if one is compromised, others can remain intact and detectable.

A growing concern is watermark forgery. Attackers now use diffusion models to embed fake, traceable marks into unauthorized content, leading to false claims of ownership [2]. This makes blockchain-based verification indispensable, as it provides an unalterable record of ownership that persists even if a watermark is tampered with.

To combat these evolving threats, more comprehensive solutions are emerging. Organizations handling sensitive digital assets – whether in media, healthcare, or law – require more than basic watermarking tools. For example, ScoreDetect combines blockchain timestamping with invisible watermarking to deliver a robust system for content protection. Its WordPress plugin automates the process by tracking every article update, offering verifiable proof of ownership while also improving SEO. For enterprises needing invisible watermarking across various formats like images, video, and audio, InCyan, ScoreDetect’s parent company, offers platforms like Tectus. This blind watermarking solution ensures defensible ownership without leaving visible traces. These multi-layered, automated strategies highlight how digital content can be safeguarded in an AI-driven world.

As the arms race in watermarking continues, combining layered defenses with immutable verification remains the most reliable way to secure digital assets.

FAQs

Can an invisible watermark be forged to frame someone?

Invisible watermarks, while useful, are not foolproof – they can be forged. Research indicates that tools like WMCopier can embed a traceable watermark into content even without knowing the original algorithm, making it possible to falsely attribute ownership. Additionally, other studies point to methods for either forging or removing watermarks altogether. These vulnerabilities highlight the importance of combining strong watermarking techniques with additional layers of verification to guard against tampering or potential misuse.

What edits usually break an invisible watermark?

Edits like cropping, resizing, compression, or adding noise can disrupt an invisible watermark. These actions modify pixel data or underlying features, potentially weakening the watermark. Watermarks based on pixel-level adjustments are particularly susceptible, while those embedded in frequency domains or latent spaces tend to hold up better. However, even these more resilient methods can be affected by major or deliberate alterations.

How does blockchain help if a watermark is removed?

Blockchain creates a permanent, tamper-evident record of content ownership by storing a cryptographic hash and timestamp of the content on the ledger, not the content itself. Because that record exists independently of the watermark, it continues to support claims about a digital asset’s authenticity and origin even if the watermark is tampered with or removed.