Invisible watermarking embeds a hidden, machine-readable signal in AI-generated images so they can be identified without visibly altering them. Unlike metadata, which can be stripped away, these watermarks are embedded directly in the image’s pixel data, which makes them more resilient to edits such as cropping or compression. They help trace image origins, protect copyrights, and combat misuse, especially as AI-generated content becomes harder to distinguish from real images. Newer techniques such as latent-space embedding and semantic watermarking aim to address removal attacks and forgery while improving durability and accuracy. With regulators in several jurisdictions now mandating such measures, watermarking is becoming central to verifying AI-generated content across industries.

Recent Research in Invisible Watermarking

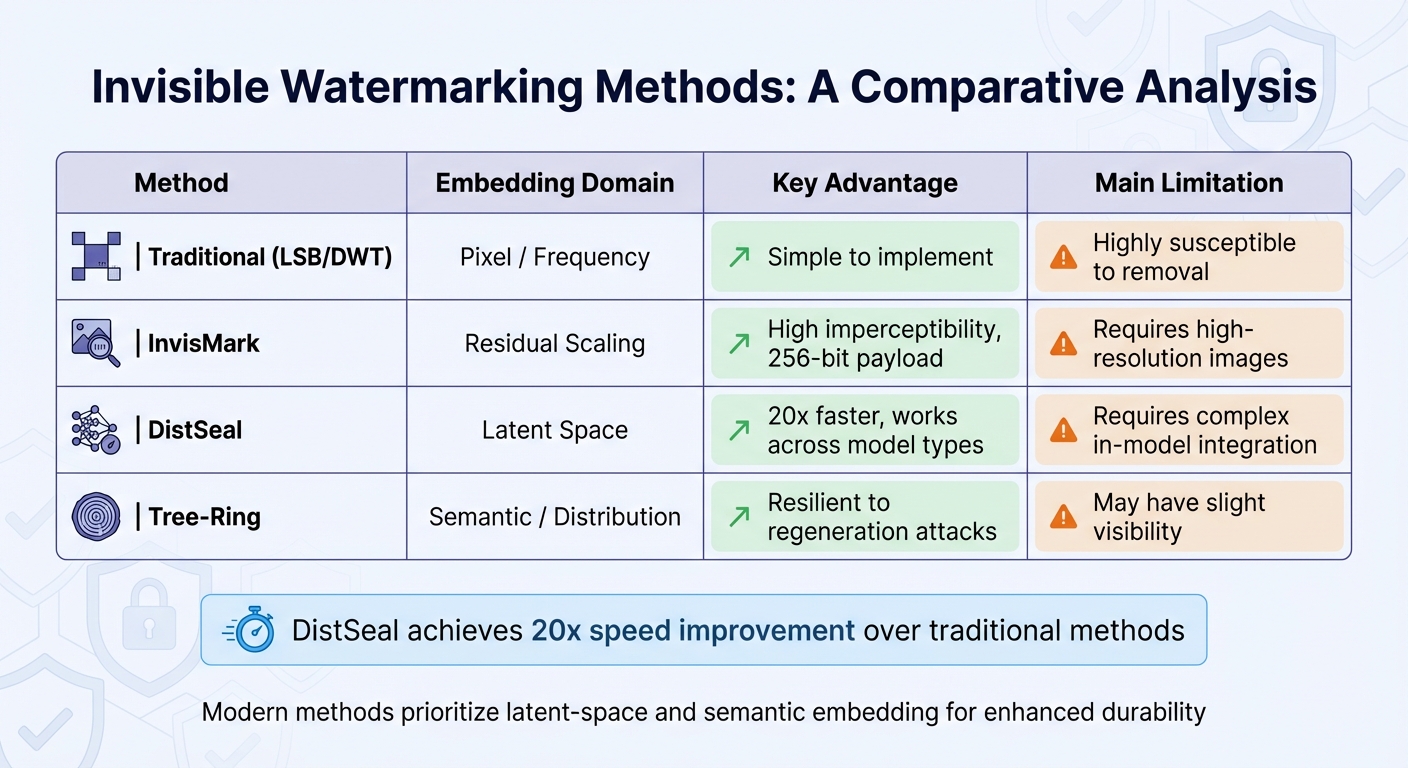

Comparison of Invisible Watermarking Methods for AI-Generated Images

Research on invisible watermarking has shifted from pixel-level techniques toward embedding in latent spaces and semantic distributions. These newer methods aim to overcome the limitations of older approaches, with better durability and performance.

Embedding Techniques for Durability

Recent work embeds watermarks in the latent space of generative models, prioritizing both speed and resilience. In January 2026, researchers Sylvestre-Alvise Rebuffi and Hady Elsahar introduced DistSeal, which is reported to run up to 20x faster than pixel-space baselines while remaining robust to image distortions [5]. Similarly, Microsoft’s Responsible AI team developed InvisMark, which uses MUNIT-based architectures to achieve high imperceptibility and bit accuracy [1][2].

These techniques treat watermark resilience as a robust optimization problem, training decoders to withstand worst-case scenarios rather than random noise. They also incorporate resolution scaling during training, ensuring watermarks remain intact even in high-resolution images up to 2K [2].
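One generic way to write such a worst-case training objective is the min-max form below. This is a textbook robust-optimization formulation, not the exact loss used in [2] or [5]; the symbols $E_\theta$ (watermark encoder), $D_\phi$ (decoder), $\mathcal{T}$ (the set of allowed distortions), $m$ (the message), and the weight $\lambda$ are notation assumed here for illustration.

```latex
\min_{\theta,\phi}\;
\mathbb{E}_{x,m}\Big[
  \max_{T \in \mathcal{T}}
  \mathcal{L}_{\text{msg}}\big(D_\phi(T(E_\theta(x,m))),\, m\big)
  \;+\; \lambda\, \mathcal{L}_{\text{img}}\big(E_\theta(x,m),\, x\big)
\Big]
```

Replacing the inner maximum with an expectation over randomly sampled $T$ gives the more common random-augmentation training, which is the baseline the worst-case approach is contrasted with.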

By stepping away from pixel constraints, these methods open up new possibilities for watermarking, which the next section explores.

Cross-Domain Watermarking Applications

Researchers are now embedding watermarks into the semantic distribution of content rather than just pixel values, enhancing protection for AI-generated images. For example, the Tree-Ring method creates watermarks that endure even when AI systems attempt to regenerate an image by adding noise and reconstructing it [3].

"Latent watermarks achieve competitive robustness while offering similar imperceptibility and up to 20x speedup compared to pixel-space baselines." – Sylvestre-Alvise Rebuffi et al. [5]

This shift to latent space watermarking allows for a unified framework that works across various generative AI systems, including GANs, diffusion models, and autoregressive systems. These methods also resist regeneration attacks by embedding watermarks directly into the generative model or its decoder, eliminating the need for computationally heavy post-processing steps [5].

Examples from Recent Studies

Despite these advancements, vulnerabilities remain. In June 2023, researchers led by Xuandong Zhao demonstrated a "regeneration attack" capable of removing 98% of RivaGAN watermarks while keeping PSNR above 30 dB. The attack adds Gaussian noise to a watermarked image and then uses a diffusion model to reconstruct a clean version [3].

"Our finding underscores the need for a shift in research/industry emphasis from invisible watermarks to semantic-preserving watermarks." – Xuandong Zhao et al. [3]

These findings have driven the development of semantic watermarking approaches. Below is a comparison of current methods:

| Method | Embedding Domain | Key Advantage | Main Limitation |
|---|---|---|---|
| Traditional (LSB/DWT) | Pixel / Frequency | Simple to implement | Highly susceptible to removal [2] |
| InvisMark | Residual Scaling | High imperceptibility, 256-bit payload | Requires high-resolution images [2] |
| DistSeal | Latent Space | 20x faster, works across model types | Requires complex in-model integration [5] |
| Tree-Ring | Semantic / Distribution | Resilient to regeneration attacks | May have slight visibility [3] |

These new techniques address a critical challenge: traditional pixel-level watermarks are often "provably removable" by generative AI. As a result, the adoption of semantic-preserving methods is becoming essential for long-term protection of digital content [3].

Challenges in Detecting and Protecting Watermarked Images

Watermarking systems, no matter how advanced, face significant hurdles when it comes to resisting attacks aimed at removing or tampering with them. These challenges arise from various methods, including technical manipulations, routine image edits, and outright forgery. Together, they pose a serious threat to the reliability of watermarks. Broadly, these challenges can be grouped into three categories: adversarial attacks, common post-editing operations, and sophisticated spoofing techniques.

Adversarial Attacks and Evasion

One of the most concerning threats comes from diffusion-based regeneration attacks, which target invisible watermarks. In June 2023, researchers from UC Berkeley and UC Santa Barbara, led by Xuandong Zhao, demonstrated how these attacks could eliminate 98% of RivaGAN watermarks while maintaining high image quality (PSNR above 30 dB) [3]. The method adds noise to an image and then uses a diffusion model to reconstruct it, producing a clean version without the watermark.
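The noise-then-reconstruct idea can be illustrated with a toy sketch. Everything here is an assumption for illustration: the watermark is a simple least-significant-bit (LSB) mark rather than RivaGAN, and a 3x3 mean filter stands in for the diffusion model, which is far more capable than this. The point is only to show why a fragile pixel-level signal may not survive the round trip even when the image stays visually close.

```python
import math
import random

def embed_lsb(image, bits):
    """Write one payload bit into the least significant bit of each pixel."""
    flat = [p for row in image for p in row]
    marked = [(p & ~1) | b for p, b in zip(flat, bits)]
    width = len(image[0])
    return [marked[i:i + width] for i in range(0, len(marked), width)]

def extract_lsb(image):
    return [p & 1 for row in image for p in row]

def add_gaussian_noise(image, sigma, rng):
    return [[p + rng.gauss(0.0, sigma) for p in row] for row in image]

def box_denoise(image):
    """3x3 mean filter with clamped borders (crude stand-in for a diffusion denoiser)."""
    h, w = len(image), len(image[0])
    out = []
    for r in range(h):
        row = []
        for c in range(w):
            total = 0.0
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr = min(max(r + dr, 0), h - 1)
                    cc = min(max(c + dc, 0), w - 1)
                    total += image[rr][cc]
            row.append(min(255, max(0, round(total / 9))))
        out.append(row)
    return out

def psnr(a, b):
    diffs = [(x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)]
    mse = sum(diffs) / len(diffs)
    return float("inf") if mse == 0 else 10 * math.log10(255 ** 2 / mse)

def bit_accuracy(sent, received):
    return sum(s == r for s, r in zip(sent, received)) / len(sent)

rng = random.Random(0)
size = 16
image = [[40 + 6 * r + 6 * c for c in range(size)] for r in range(size)]  # smooth gradient
payload = [rng.randint(0, 1) for _ in range(size * size)]

watermarked = embed_lsb(image, payload)
attacked = box_denoise(add_gaussian_noise(watermarked, sigma=8.0, rng=rng))

acc_before = bit_accuracy(payload, extract_lsb(watermarked))
acc_after = bit_accuracy(payload, extract_lsb(attacked))
quality = psnr(watermarked, attacked)
print(f"bit accuracy before: {acc_before:.2f}, after: {acc_after:.2f}, PSNR: {quality:.1f} dB")
```

In this toy setting the recovered bits should fall to roughly chance level while PSNR stays above 30 dB, which mirrors the shape of the result reported in [3] without reproducing its method or numbers.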

"Invisible watermarks are provably removable using generative AI… our finding underscores the need for a shift in research/industry emphasis from invisible watermarks to semantic-preserving watermarks."

– Xuandong Zhao, UC Berkeley [3]

Adversarial techniques can also create examples that mimic the latent space features of watermarked images, tricking detection systems into generating false positives or negatives. Diffusion-based regeneration not only reduces watermark recovery rates to nearly zero but also erases the mutual information between the image and its embedded payload [7].

But adversarial attacks aren’t the only problem. Even routine image edits can significantly weaken watermark integrity.

How Post-Editing Affects Watermarks

Everyday image editing operations can severely damage watermarks. Geometric changes like cropping (e.g., RandomResizedCrop), rotation, and perspective adjustments are particularly harmful compared to basic pixel-level edits [2]. JPEG compression, for instance, degrades high-frequency watermark signals, while brightness and contrast tweaks further erode bit accuracy.

Traditional frequency-domain watermarking techniques, such as Discrete Wavelet Transform (DWT) or Discrete Cosine Transform (DCT), are highly vulnerable to even minor changes [2]. Testing has shown that methods like SSL are especially sensitive to JPEG compression and color alterations, while TrustMark struggles with Gaussian noise and rotation. Moreover, there’s an inherent trade-off between capacity and robustness: increasing the watermark payload to 256 bits often leads to higher error rates or visible artifacts [2].
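The capacity-versus-robustness trade-off can be seen with the simplest error-correcting code, a repetition code with majority voting. This is a sketch under stated assumptions: the 256-bit channel budget echoes the payload size mentioned above, the deterministic "flip every 8th bit" error pattern is hypothetical, and real systems typically use stronger codes than repetition.

```python
import random

def encode_repetition(payload, repeat):
    """Repeat every payload bit `repeat` times."""
    return [bit for bit in payload for _ in range(repeat)]

def decode_repetition(channel_bits, repeat):
    """Majority vote over each group of `repeat` received bits."""
    decoded = []
    for i in range(0, len(channel_bits), repeat):
        group = channel_bits[i:i + repeat]
        decoded.append(1 if sum(group) * 2 > len(group) else 0)
    return decoded

def flip_bits(bits, positions):
    flipped = list(bits)
    for pos in positions:
        flipped[pos] ^= 1
    return flipped

def payload_errors(repeat, channel_bits=256, flip_every=8):
    """Return (usable payload bits, decoded bit errors) for a fixed channel budget."""
    capacity = channel_bits // repeat
    rng = random.Random(1)
    payload = [rng.randint(0, 1) for _ in range(capacity)]
    sent = encode_repetition(payload, repeat)
    received = flip_bits(sent, range(0, len(sent), flip_every))
    decoded = decode_repetition(received, repeat)
    errors = sum(p != d for p, d in zip(payload, decoded))
    return capacity, errors

for repeat in (1, 3, 5):
    capacity, errors = payload_errors(repeat)
    print(f"repeat={repeat}: payload bits={capacity}, bit errors={errors}")
# prints:
# repeat=1: payload bits=256, bit errors=32
# repeat=3: payload bits=85, bit errors=0
# repeat=5: payload bits=51, bit errors=0
```

With no redundancy, all 256 bits carry payload but every channel error becomes a payload error. Spending bits on redundancy removes the errors in this example at the cost of a much smaller usable payload.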

This makes watermarking a useful, albeit fragile, "soft binding" backup – it can endure as long as the pixel data remains relatively unaltered [2].

Spoofing and Mimicking Watermarks

Beyond technical attacks and editing, watermark forgery presents another significant concern. Forgery introduces the risk of false attribution, which can have serious consequences. In March 2025, a team led by Ziping Dong developed WMCopier, a tool capable of embedding target watermarks into unrelated images. This attack successfully deceived even closed-source systems, including Amazon’s watermarking solution [6].

"Forging traceable watermarks onto illicit content leads to false attribution, potentially harming the reputation and legal standing of Gen-AI service providers."

– Ziping Dong, Researcher [6]

Attackers can exploit noise patterns from watermarked images to create entirely new images carrying the same watermark, which can mislead provenance systems [4]. This opens the door to misuse, potentially holding AI providers accountable for harmful content they didn’t produce. To counter these risks, researchers are working on semantic-aware watermarking techniques that tie detection to the original image content, reducing the chances of successful forgery [4].
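One way to picture "tying detection to the image content" is to derive the payload from a content descriptor, so that a payload copied onto a different image no longer verifies. The sketch below is a hypothetical illustration, not the scheme from [4]: `content_descriptor` is a made-up coarse brightness hash, the key name is invented, and the step of actually embedding the payload in the image is omitted.

```python
import hashlib
import hmac

def content_descriptor(image, grid=4):
    """Hypothetical coarse descriptor: one bit per grid cell, set when the
    cell's mean brightness exceeds the mean of the whole image."""
    h, w = len(image), len(image[0])
    overall = sum(sum(row) for row in image) / (h * w)
    bits = []
    for gr in range(grid):
        for gc in range(grid):
            cell = [image[r][c]
                    for r in range(gr * h // grid, (gr + 1) * h // grid)
                    for c in range(gc * w // grid, (gc + 1) * w // grid)]
            bits.append("1" if sum(cell) / len(cell) > overall else "0")
    return "".join(bits).encode("ascii")

def make_payload(secret_key, image):
    """Payload = HMAC of the content descriptor under the provider's key."""
    return hmac.new(secret_key, content_descriptor(image), hashlib.sha256).digest()

def verify_payload(secret_key, image, payload):
    return hmac.compare_digest(make_payload(secret_key, image), payload)

key = b"hypothetical-provider-key"
original = [[r * 16 + c for c in range(16)] for r in range(16)]           # brighter toward the bottom
unrelated = [[255 - (r * 16 + c) for c in range(16)] for r in range(16)]  # brighter toward the top

payload = make_payload(key, original)
print(verify_payload(key, original, payload))   # True: payload matches its own image
print(verify_payload(key, unrelated, payload))  # False: copied payload fails on other content
```

A practical design would need a descriptor that stays stable under benign edits yet changes when the content changes, which is the hard part this sketch sidesteps.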

Regulations and Industry Adoption of Watermarking

Global Regulatory Requirements

Governments around the world are stepping up to require watermarking for AI-generated content. California has advanced two major measures to address this. The California AI Transparency Act (SB 942), effective January 1, 2026, mandates that generative AI providers with over 1,000,000 monthly users embed "latent disclosures" (invisible watermarks) into AI-generated images, videos, and audio. Violations carry civil penalties of $5,000 per instance [8]. Meanwhile, the California Digital Content Provenance Standards Act (AB 3211), introduced as a bill, would require AI developers to build in watermarking technologies and obligate social media platforms to clearly label AI-generated content shared by users [10].

"Failing to appropriately label synthetic content created by GenAI technologies can skew election results, enable defamation, and erode trust in the online information ecosystem."

– California Bill AB 3211 [10]

The European Union AI Act, adopted in 2024, also requires companies to disclose when content is AI-generated [10][11]. China’s rules are stricter still, banning AI-generated media that lacks watermarks [12]. At the U.S. federal level, the proposed Advisory for AI-Generated Content Act (S.2765) would task the Federal Trade Commission (FTC) with setting watermarking standards for AI-generated content [9].

These regulations are shaping not only government policies but also how industries approach watermarking.

Industry Adoption Trends

In response to these regulations, industries are quickly making watermarking an essential part of their accountability practices. Traditional metadata, which can be easily removed by bad actors or stripped away on social media platforms, is being replaced by latent watermarking techniques [8][2].

In February 2025, Microsoft’s Responsible AI researchers Rui Xu and Mengya Hu presented InvisMark at the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV). InvisMark is designed for high-resolution AI-generated images and embeds 256-bit watermarks with about 97% bit accuracy across manipulations, while maintaining a PSNR (Peak Signal-to-Noise Ratio) of approximately 51 dB [2][1].
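To put these figures in perspective, the standard PSNR definition can be inverted to see how small the average pixel change is. The calculation assumes 8-bit images, so $\mathrm{MAX} = 255$; the source does not state the bit depth, so treat that as an assumption.

```latex
\mathrm{PSNR} = 10 \log_{10}\!\left(\frac{\mathrm{MAX}^2}{\mathrm{MSE}}\right)
\quad\Longrightarrow\quad
\mathrm{MSE} = \frac{255^2}{10^{51/10}} \approx \frac{65025}{125893} \approx 0.52
```

Under that assumption, a PSNR of 51 dB corresponds to a root-mean-square change of roughly 0.7 intensity levels per pixel. Likewise, 97% bit accuracy on a 256-bit payload means about 248 bits are read correctly and about 8 are wrong before any error correction is applied.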

In May 2025, IBM Research introduced TabWak (Tabular Watermark) at the ICLR conference in Singapore. Developed by Pin-Yu Chen and his team, this method embeds ownership signatures into AI-generated tabular data row-by-row. TabWak is particularly targeted at industries like banking, where synthetic datasets – such as credit scores or shopping patterns – are often used. Its goal is to prevent competitors from stealing these datasets to train rival models. Pin-Yu Chen highlighted the importance of this innovation:

"If a company’s platform is used to generate tables meant to deceive investors or regulators, that company could be held liable. If the synthetic data is automatically watermarked, however, bad actors may think twice about misusing it."

– Pin-Yu Chen, Expert on AI Adversarial Testing, IBM Research [13]

Tech giants are also stepping up their efforts to integrate watermarking into AI-generated images, driven largely by government pressure [3]. The shift is unmistakable: watermarking is no longer a nice-to-have but a core necessity for ensuring accountability and content transparency in the era of generative AI.

ScoreDetect’s Invisible Watermarking for AI Images

ScoreDetect Features for AI Image Watermarking

ScoreDetect Enterprise embeds invisible watermarks into AI-generated images without compromising image quality. Image fidelity remains intact, with metrics like PSNR (~51 dB) and SSIM (~0.998) indicating no noticeable visual alteration [1] [2]. Unlike metadata-based methods, which can be easily stripped by social media platforms or malicious users, ScoreDetect embeds watermarks directly into the image’s pixel data.

The system’s 256-bit payload enables robust tracking with built-in error correction [1] [2]. To further strengthen protection, it incorporates blockchain timestamping by capturing a content checksum. This provides verifiable proof of ownership without requiring the asset itself to be stored. Together, these technologies create a dual-layer defense against unauthorized use.
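The checksum step can be sketched with Python's standard library. This is not ScoreDetect's implementation: the choice of SHA-256, the record fields, and the function names are assumptions made for illustration, and publishing the record to a blockchain is left out. The sketch shows why only the digest, not the asset, needs to be stored.

```python
import hashlib
import json
from datetime import datetime, timezone

def content_checksum(data: bytes) -> str:
    """Hex SHA-256 digest of the raw file bytes."""
    return hashlib.sha256(data).hexdigest()

def build_timestamp_record(data: bytes) -> str:
    """Hypothetical record that could be anchored in a ledger: digest plus UTC time."""
    record = {
        "sha256": content_checksum(data),
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record, sort_keys=True)

image_bytes = bytes(range(256))        # placeholder for real image file contents
tampered_bytes = image_bytes[:-1] + b"\x00"

record = build_timestamp_record(image_bytes)
print(record)
print(content_checksum(image_bytes) == content_checksum(tampered_bytes))  # False
```

Because any byte-level change produces a different digest, a checksum proves that an exact file existed at a given time, while the embedded watermark is what is meant to survive edits. That difference is why the two are paired.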

ScoreDetect also offers a complete protection workflow that includes:

- Intelligent web scraping: Achieves a 95% success rate in bypassing protection measures to find unauthorized content.

- Automated analysis: Verifies infringement with quantitative proof.

- Automated delisting notices: Ensures over 96% of takedown requests are successful.

Additionally, the platform integrates seamlessly with over 6,000 web apps via Zapier and includes a WordPress plugin for automatic content protection.

These features address critical challenges in protecting AI-generated images, as explored in the next section.

How ScoreDetect Addresses Research Challenges

ScoreDetect tackles key research challenges with innovative solutions designed to ensure resilience and accuracy.

The platform maintains over 97% bit accuracy even after aggressive image manipulations like JPEG compression, rotation, cropping, and Gaussian noise [1] [2]. This is achieved through an advanced neural network architecture that uses skip connections to preserve fine image details. The system is also trained to withstand "worst-case" scenarios, ensuring content authenticity is preserved even under extreme conditions [2].

Another standout feature is its semantic-aware watermarking, which embeds information about the image’s content. This allows verification without needing a central database of keys [4].

| Challenge | Traditional Approach | ScoreDetect Solution |
|---|---|---|
| Metadata Stripping | Relies on external metadata (easily removed) | Embeds watermark directly into pixel data [2] |
| Resolution Scaling | Limited to low-resolution images | Supports high-resolution outputs like DALL-E 3, Stable Diffusion [2] |
| Regeneration Attacks | Watermark removed by AI noise addition | Maintains 97%+ accuracy against reconstruction [1] [2] |
| Geometric Distortions | Fails under rotation and cropping | Trained to resist RandomResizedCrop and rotation [2] |
| Payload Limitations | <100 bits (insufficient for robust tracking) | 256-bit capacity with error correction [1] [2] |

By addressing these challenges, ScoreDetect ensures its watermarks remain durable and effective in protecting AI-generated content.

Use Cases and Applications

ScoreDetect is designed for a wide range of industries, including media, marketing, finance, government, and research, where protecting AI-generated images is critical. Here’s how it’s being used:

- Media and Entertainment: Watermarking AI-generated promotional materials to prevent unauthorized redistribution.

- Marketing Agencies: Safeguarding client assets by tracking their usage online.

- Finance and Banking: Using blockchain timestamping to provide auditable proof of content creation for regulatory purposes.

- Government Agencies: Fighting misinformation by watermarking official AI-generated communications.

- Research Teams: Protecting proprietary AI-generated outputs from competitors.

Conclusion and Future Outlook

Key Takeaways

Invisible watermarking has become a critical tool for protecting AI-generated images in an era where copying and manipulation are easier than ever. Recent studies highlight how modern techniques can embed 256-bit watermarks with impressive imperceptibility metrics (PSNR ~51, SSIM ~0.998) while maintaining over 97% bit accuracy, even after challenges like JPEG compression, rotation, and cropping [1][2].

ScoreDetect tackles these challenges by combining pixel-embedded watermarking with blockchain timestamping, creating a protection system that stays embedded within the image itself. Unlike metadata-based methods, which social media platforms can strip away, this approach ensures durability. The platform’s 95% web scraping success rate and 96% takedown effectiveness show its capability to enforce protection across various industries, including media, marketing, finance, government, and research. These advancements lay a strong foundation for further developments in watermarking technologies.

Future Developments in Watermarking Technology

Looking ahead, watermarking technology is moving toward latent-space integration, embedding watermarks directly into the generative model’s architecture instead of applying them after content creation. Research from January 2026 demonstrates that systems like DistSeal can achieve up to a 20x speedup by incorporating watermarks into the model’s latent decoder, enabling real-time protection for high-volume AI content generation [5].

The industry is now leaning toward semantic-aware watermarking, where protection ties directly to the content’s meaning. This method addresses sophisticated AI-driven "regeneration attacks", which can remove 98% of traditional invisible watermarks by introducing noise and reconstructing images [3]. Rui Xu from Microsoft Responsible AI highlights how advanced systems like InvisMark "provide a robust foundation for ensuring media provenance in an era of increasingly sophisticated AI-generated content" [2]. These advancements not only strengthen technical defenses but also align with growing global regulations for verifying AI-generated content, making watermarking a crucial tool for compliance and trust in the digital landscape.

FAQs

How can I tell if an image has an invisible AI watermark?

Detecting an invisible AI watermark isn’t easy because these marks can’t be seen by the naked eye. To uncover them, specialized software examines the image data to identify any embedded watermarks. In some cases, advanced tools like neural networks are employed to spot more resilient digital watermarks. That said, certain detection methods can still be bypassed, and researchers are continuously working to make these systems more dependable.
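Once a decoder has extracted candidate bits, the final decision is often framed as a statistical test: how likely is it that this many bits match the expected key by chance? The sketch below assumes each bit of an unwatermarked image matches with probability 0.5; the function names and the `max_fpr` threshold are illustrative choices, not taken from any specific detector.

```python
from math import comb

def false_positive_probability(matches: int, total_bits: int) -> float:
    """P(at least `matches` of `total_bits` agree by chance), assuming each bit
    of an unwatermarked image matches the key with probability 0.5."""
    tail = sum(comb(total_bits, k) for k in range(matches, total_bits + 1))
    return tail / 2 ** total_bits

def is_watermarked(extracted, expected, max_fpr=1e-6):
    """Flag the image when a chance match this good is rarer than `max_fpr`."""
    matches = sum(e == x for e, x in zip(extracted, expected))
    return false_positive_probability(matches, len(expected)) <= max_fpr

expected = [i % 2 for i in range(256)]
mostly_right = [b ^ 1 if i < 8 else b for i, b in enumerate(expected)]    # 248 of 256 match
half_right = [b ^ 1 if i < 128 else b for i, b in enumerate(expected)]    # 128 of 256 match

print(is_watermarked(mostly_right, expected))  # True
print(is_watermarked(half_right, expected))    # False
```

This is why a detector can tolerate some bit errors: 248 matching bits out of 256 is still overwhelmingly unlikely to occur by accident, whereas 128 matches is exactly what random data would produce.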

Can cropping or JPEG compression remove an invisible watermark?

Cropping or applying JPEG compression can diminish the strength of an invisible watermark, potentially compromising or even erasing it. This is particularly likely if the watermark wasn’t created to withstand these types of modifications.

What’s the difference between pixel, latent-space, and semantic watermarking?

Pixel watermarking involves embedding hidden data directly into the pixels of an image. While this approach can make the watermark nearly invisible to the naked eye when executed effectively, it’s prone to being disrupted by manipulations like adding noise or cropping the image.
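A minimal sketch of the classic least-significant-bit (LSB) approach shows both properties: the change is tiny, and the payload is fragile. The pixel values, the payload pattern, and `coarse_quantize` (a rough stand-in for the information loss in lossy compression, not an actual JPEG codec) are all assumptions for illustration.

```python
def embed_lsb(pixels, bits):
    """Overwrite the least significant bit of each pixel with a payload bit."""
    return [(p & ~1) | b for p, b in zip(pixels, bits)]

def extract_lsb(pixels):
    return [p & 1 for p in pixels]

def coarse_quantize(pixels, step=8):
    """Snap each value to the centre of its quantization bin."""
    return [min(255, (p // step) * step + step // 2) for p in pixels]

pixels = [50 + 3 * i for i in range(64)]       # 64 grayscale values
payload = [(i // 2) % 2 for i in range(64)]    # 0, 0, 1, 1, 0, 0, ...

marked = embed_lsb(pixels, payload)
recovered = extract_lsb(marked)
after_edit = extract_lsb(coarse_quantize(marked))

max_change = max(abs(a - b) for a, b in zip(pixels, marked))
accuracy = sum(p == r for p, r in zip(payload, after_edit)) / len(payload)

print(recovered == payload)  # True: lossless round trip
print(max_change)            # 1: each pixel changes by at most one level
print(accuracy)              # 0.5: no better than guessing after quantization
```

Quantization forces every least significant bit to the same value, so the extracted bits carry no information about the payload. Learned pixel-space methods are far more robust than LSB, but the example shows why robustness has to be designed in.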

Latent-space watermarking takes a different approach by encoding information within the internal representations of a generative model. This method offers better durability against changes made to the image itself, as the watermark exists at a deeper, structural level.
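As a rough intuition for marking an internal representation, the sketch below adds a keyed pattern to a toy latent vector and detects it by correlation, a classic spread-spectrum idea. It is only a stand-in: no generative model is involved, real latent-space methods typically train encoder and decoder networks or modify the model itself, and the dimension, `strength`, and 0.25 threshold are arbitrary illustrative values.

```python
import random

def make_key(dim, seed):
    """Secret pattern of +1/-1 values derived from a seed."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(dim)]

def embed_in_latent(latent, key, strength=0.5):
    """Nudge every latent coordinate slightly in the direction of the key."""
    return [z + strength * k for z, k in zip(latent, key)]

def detection_score(latent, key):
    """Mean correlation with the key: near 0 if unmarked, near `strength` if marked."""
    return sum(z * k for z, k in zip(latent, key)) / len(key)

dim = 1024
rng = random.Random(42)
latent = [rng.gauss(0.0, 1.0) for _ in range(dim)]   # toy stand-in for a model latent
key = make_key(dim, seed=7)

marked = embed_in_latent(latent, key)
clean_score = detection_score(latent, key)
marked_score = detection_score(marked, key)

threshold = 0.25
print(f"clean: {clean_score:.3f}, marked: {marked_score:.3f}")
print(clean_score > threshold, marked_score > threshold)
```

The signal is spread thinly across all coordinates, so no single value reveals it, yet the correlation with the key separates marked from unmarked vectors. Whether the mark survives decoding to pixels and later edits depends on the model, which this toy example does not address.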

Semantic watermarking, on the other hand, operates at a conceptual level. It embeds data in the image’s semantic features, so the watermark is designed to survive attempts to alter or regenerate the image. The goal is to resist attacks while preserving the image’s intended meaning, although the result may differ slightly from an unwatermarked version.