Invisible watermarks are now essential for verifying digital content ownership, especially with the explosion of AI-generated media. Traditional methods often fail when content is edited or shared, but AI has introduced new approaches that make watermarks harder to remove while keeping them undetectable to the human eye. Here’s what you need to know:

- Durability Matters: Watermarks need to survive edits like cropping, compression, and rotation. AI-driven systems now achieve this by embedding stronger signals in textured areas and using error correction.

- AI’s Role: Techniques like GANs (Generative Adversarial Networks) and diffusion models help create watermarks that are invisible but resilient, even against major distortions or regeneration attacks.

- Real-World Use: Companies like Microsoft and Google have developed systems such as InvisMark and SynthID to protect content integrity at scale. SynthID alone had watermarked over 10 billion pieces of content by May 2025.

- Challenges Remain: Regeneration attacks and adversarial manipulations still pose risks, but ongoing research focuses on improving detection and resistance.

Watermarking is evolving to meet the challenges of modern digital threats, blending AI, cryptography, and error correction to protect content across industries.

AI Techniques for Embedding Durable Watermarks

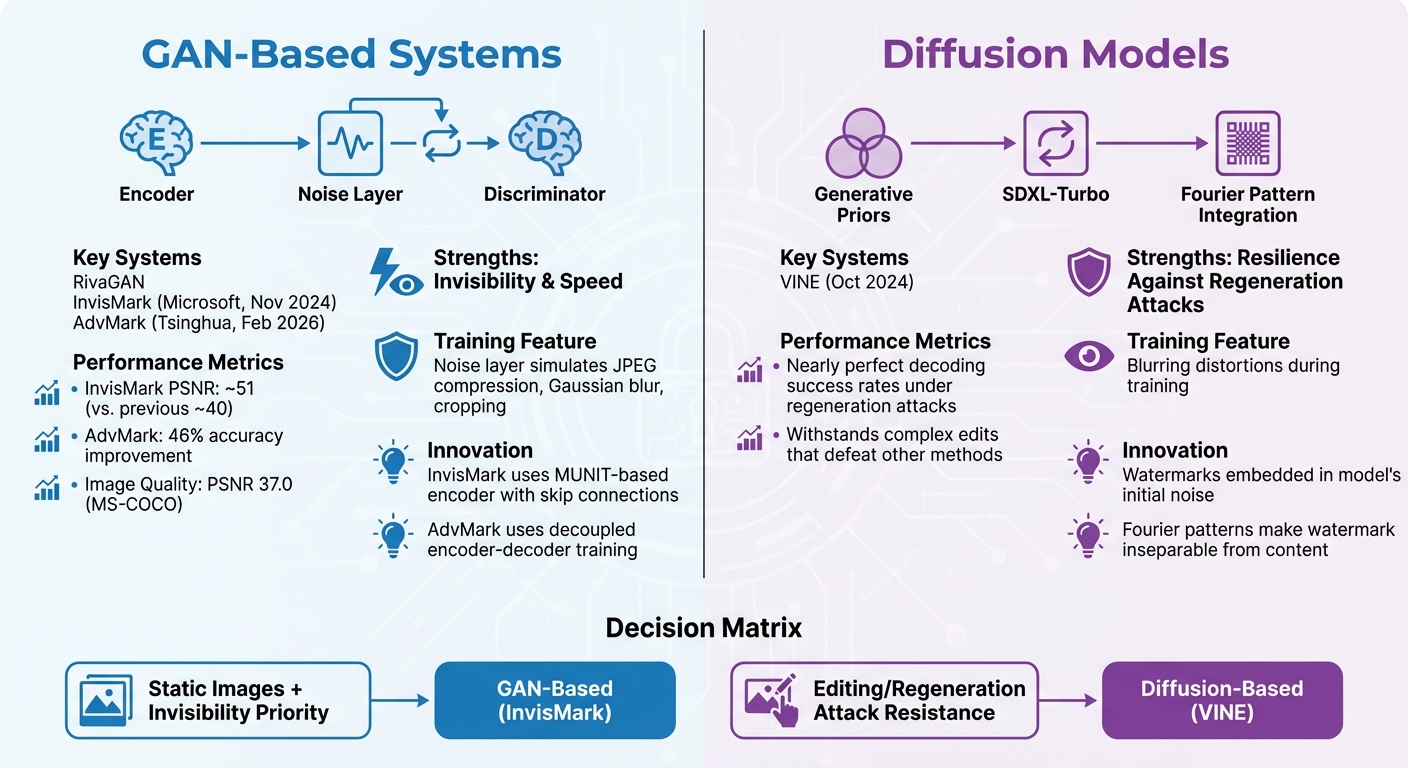

GAN vs Diffusion Model Watermarking: Architecture Comparison and Performance Metrics

Modern watermarking techniques leverage two main AI architectures – Generative Adversarial Networks (GANs) and diffusion models. These systems focus on balancing watermark invisibility, robustness, and capacity, reflecting advancements in the field.

GAN-Based Watermarking Systems

GAN-based systems use a competitive framework where an encoder embeds the watermark, and a discriminator works to identify watermarked images. This setup pushes the encoder to create watermarks that are nearly invisible [3][8]. A notable feature in these systems is the inclusion of a noise layer during training, which mimics real-world attacks like JPEG compression, Gaussian blur, and cropping. This ensures the watermark remains recoverable even under severe distortions [2][3].
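The noise layer described above can be sketched as a function that applies one randomly chosen distortion to each training image. This is a minimal illustration in plain Python, assuming a grayscale image stored as a list of rows with values in [0, 255]. The function names, the box blur (standing in for Gaussian blur), and the coarse quantization (standing in for JPEG compression) are all assumptions made for this example, not the layers used by any cited system.

```python
import random

def add_gaussian_noise(img, rng, sigma=5.0):
    """Additive Gaussian noise, clipped to the valid pixel range."""
    return [[min(255.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row]
            for row in img]

def quantize(img, step=16):
    """Coarse rounding: a crude stand-in for JPEG's lossy quantization."""
    return [[min(255.0, float(round(p / step) * step)) for p in row]
            for row in img]

def box_blur(img):
    """3x3 mean filter: a simple stand-in for Gaussian blur."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [img[j][i]
                      for j in range(max(0, y - 1), min(h, y + 2))
                      for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(sum(window) / len(window))
        out.append(row)
    return out

def crop_and_pad(img, rng, keep=0.75):
    """Keep a random window and zero everything else, so the shape is unchanged."""
    h, w = len(img), len(img[0])
    kh, kw = max(1, int(h * keep)), max(1, int(w * keep))
    top, left = rng.randint(0, h - kh), rng.randint(0, w - kw)
    return [[img[y][x] if top <= y < top + kh and left <= x < left + kw else 0.0
             for x in range(w)]
            for y in range(h)]

def noise_layer(img, rng):
    """Apply one randomly chosen distortion to the watermarked image."""
    distortions = [
        lambda im: add_gaussian_noise(im, rng),
        quantize,
        box_blur,
        lambda im: crop_and_pad(im, rng),
    ]
    return rng.choice(distortions)(img)

rng = random.Random(0)
image = [[float((x * 7 + y * 13) % 256) for x in range(8)] for y in range(8)]
distorted = noise_layer(image, rng)  # this is what the decoder would see in training
```

In an actual training pipeline these distortions would need to be differentiable (or approximated by differentiable operations) so that gradients can reach the encoder. The plain-Python version here only shows the sampling logic.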

Take RivaGAN, for instance. This encoder-decoder system is specifically built to withstand distortions in both images and videos [3][6]. In November 2024, Microsoft introduced InvisMark, a watermarking framework that uses a MUNIT-based encoder. By utilizing skip connections, InvisMark preserves fine image details, achieving impressive results in both invisibility and robustness.

"The residuals from InvisMark are smaller and more imperceptible compared to previous works… achieving PSNR values around 51, significantly outperforming prior works that reached approximately 40." – Rui Xu et al., Microsoft Responsible AI [3]

Another noteworthy development is AdvMark, introduced in February 2026 by researchers from Tsinghua University, Swinburne University of Technology, and The Australian National University. AdvMark takes a different approach by decoupling the training of the encoder and decoder. This separation avoids compromising accuracy on unaltered images while enhancing resistance to attacks. The result is improved image quality and robust watermarking.

"By decoupling the defense, we can achieve the highest clean and robust accuracy… AdvMark outperforms with up to 46% accuracy improvement and the highest image quality." – Jiahui Chen et al., Tsinghua University [6]

While GANs excel in invisibility and speed, diffusion models bring a different strength to the table: resilience against regeneration attacks.

Diffusion Models for Improved Resilience

Diffusion models address a critical challenge: regeneration attacks, where adversaries attempt to erase watermarks by re-synthesizing the image. These models use generative priors to embed watermarks that withstand advanced editing techniques [4].

In October 2024, Shilin Lu and colleagues introduced VINE, a watermarking system based on the SDXL-Turbo diffusion model. VINE incorporates blurring distortions during training, taking advantage of shared frequency characteristics between these distortions and image editing. This approach allowed VINE to maintain nearly perfect decoding success rates, even under regeneration attacks and complex edits that defeated other methods [4].

Some diffusion-based frameworks go a step further by embedding watermarks directly into the model’s initial noise. By integrating Fourier patterns into this noise, the watermark becomes an inseparable part of the generated content. This technique not only resists forgery but also ensures the watermark’s authenticity by linking it to specific noise patterns [5].
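The idea of writing a Fourier pattern into the initial noise can be illustrated with a one-dimensional toy. The sketch below overwrites a few frequency bins of a Gaussian noise vector with a secret key and later checks how close those bins are to the key. Everything here is an assumption made for illustration: real systems work on 2D latent noise, use their own key patterns, and must first estimate the initial noise from the generated image, a step omitted here. The names `embed_key` and `key_distance` are hypothetical.

```python
import cmath
import random

def dft(x):
    """Naive discrete Fourier transform (fine for short vectors)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def embed_key(noise, key, bins):
    """Overwrite selected frequency bins with key values.

    Each bin must lie strictly between 0 and len(noise) / 2. The mirrored
    bin gets the complex conjugate so the result stays real-valued.
    """
    n = len(noise)
    spectrum = dft(noise)
    for k, value in zip(bins, key):
        spectrum[k] = value
        spectrum[n - k] = value.conjugate()
    return [v.real for v in idft(spectrum)]

def key_distance(noise, key, bins):
    """Mean distance between the key and the selected bins (small means 'marked')."""
    spectrum = dft(noise)
    return sum(abs(spectrum[k] - v) for k, v in zip(bins, key)) / len(bins)

rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(64)]
bins = [3, 5, 7, 9]                   # hypothetical choice of frequency bins
key = [8 + 0j, 8j, -8 + 0j, -8j]      # hypothetical secret pattern

marked = embed_key(noise, key, bins)
print(key_distance(marked, key, bins))  # close to zero for the marked noise
print(key_distance(noise, key, bins))   # much larger for unmarked noise
```

A detector built this way would threshold the distance. Choosing that threshold, and keeping the marked noise statistically close to ordinary Gaussian noise, are the hard parts that this sketch leaves out.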

Ultimately, the choice between GANs and diffusion models hinges on the threat model. GAN-based systems like InvisMark shine in creating invisible watermarks for static images, while diffusion-based solutions like VINE offer stronger resilience against editing and regeneration attacks [3][4][8].

Error Correction and Adversarial Training in Watermarking

Creating a watermarking system that can withstand both accidental distortions and deliberate attacks is no small feat. Even the most advanced systems must be equipped to handle real-world challenges. The key lies in combining error-correcting codes (ECC) and adversarial training – two techniques that work hand-in-hand with sophisticated embedding methods. Together, they form watermarks capable of "healing" from damage while standing strong against intentional tampering.

Error-Correcting Codes for Self-Healing Watermarks

Error-correcting codes are like a safety net for watermark data. By adding redundant bits to the original message, ECC allows the system to reconstruct the watermark even if some bits are corrupted. This becomes crucial when images are subjected to processes like JPEG compression, cropping, or scaling, all of which can disrupt embedded watermarks. ECC steps in to detect and fix these errors automatically.
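The simplest way to see how redundancy enables recovery is a repetition code, where each bit is stored several times and decoded by majority vote. This is a minimal sketch of the principle only. Production watermarking systems use far more efficient codes, and the function names here are illustrative.

```python
def rep_encode(bits, r=3):
    """Repeat every message bit r times."""
    return [b for b in bits for _ in range(r)]

def rep_decode(code, r=3):
    """Majority vote over each group of r received bits (r should be odd)."""
    return [1 if 2 * sum(code[i:i + r]) > r else 0
            for i in range(0, len(code), r)]

message = [1, 0, 1, 1]
codeword = rep_encode(message)   # 12 bits carry a 4-bit message

# Simulate distortion: flip one bit in the first group and one in the last.
received = list(codeword)
received[1] ^= 1
received[9] ^= 1

assert rep_decode(received) == message  # both errors are voted away
```

With r = 3, each group tolerates one flipped bit, at the cost of tripling the payload size.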

"ECC augments watermark data with redundant bits, forming codewords that correct errors introduced by distortions like compression or cropping." – Frontiers in Physics [10]

For latent-space watermarking, BCH and LDPC codes are particularly effective. They handle independent bit flips – the type of errors common in AI model latent spaces. On the other hand, Reed-Solomon codes, which excel in correcting burst errors in traditional data transmission, aren’t as efficient for these specific scenarios [10].
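To make the idea of correcting independent bit flips concrete, here is a sketch using the classic Hamming(7,4) code. It is a deliberately small stand-in chosen for readability, not the BCH or LDPC codes named above: it protects 4 data bits with 3 parity bits and corrects any single flipped bit per 7-bit block.

```python
def hamming74_encode(data):
    """Encode 4 data bits into a 7-bit codeword (parity bits at positions 1, 2, 4)."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4
    p2 = d1 ^ d3 ^ d4
    p3 = d2 ^ d3 ^ d4
    return [p1, p2, d1, p3, d2, d3, d4]

def hamming74_decode(codeword):
    """Correct up to one flipped bit, then return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_pos = s1 + 2 * s2 + 4 * s3   # 1-based position of the error, 0 if none
    if error_pos:
        c[error_pos - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]

data = [1, 0, 1, 1]
codeword = hamming74_encode(data)

corrupted = list(codeword)
corrupted[4] ^= 1                      # one independent bit flip
assert hamming74_decode(corrupted) == data
```

Two flips inside the same block would defeat this code, which is one reason stronger codes with larger correction capacity are preferred in practice.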

In January 2026, researchers implemented a two-layer error correction strategy using BCH and LDPC codes within diffusion models to safeguard AI-generated content for critical infrastructure. The first layer, a block-based ECC, managed global structural constraints, while the second layer, repetition coding, addressed finer random bit flips. This dual approach proved resilient, maintaining watermark recoverability even after aggressive distortions like cropping and noise injection [10].

While ECC offers a self-healing mechanism, adversarial training takes protection a step further by preparing watermarks for deliberate attacks.

Adversarial Training for Resilient Decoding

Error correction handles accidental distortions, but what about intentional attacks? This is where adversarial training comes into play. By simulating various attack scenarios – such as JPEG compression, Gaussian blur, and scaling – during training, the encoder learns to embed watermarks that can survive these threats.

Traditional methods relied on Joint Adversarial Training (JAT), which optimized both the encoder and decoder simultaneously. However, this often compromised "clean accuracy", or the system’s ability to extract watermarks from untouched images, by altering the decoder’s decision boundaries [6].

In February 2026, researchers from Tsinghua University, Swinburne University of Technology, and The Australian National University introduced AdvMark, a method that employs Encoder-focused Adversarial Training (EAT). This two-stage process starts by fine-tuning the encoder to move watermarked images into "safe zones" that are resistant to attacks. The second stage uses direct image optimization to counter regeneration attacks, where adversaries attempt to erase watermarks by re-synthesizing the image [6].

"The encoder is fine-tuned to mitigate adversarial attack by moving the image into a safe zone." – Jiahui Chen et al., Tsinghua University [6]

AdvMark delivered impressive results, improving accuracy against adversarial attacks like WEvade by up to 46%, distortion attacks by 29%, and regeneration attacks by 33%. Despite these gains, it maintained a PSNR of 37.0 on the MS-COCO dataset, ensuring the image quality remained high [6].
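PSNR figures like the ones quoted in this article are derived from the mean squared error between the original and the watermarked image. The sketch below uses the standard definition, assuming 8-bit pixels passed as flat lists. The function name and sample values are illustrative and not taken from any cited system.

```python
import math

def psnr(original, watermarked, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    if not original or len(original) != len(watermarked):
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((a - b) ** 2 for a, b in zip(original, watermarked)) / len(original)
    if mse == 0:
        return math.inf                # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)

original = [100, 120, 140, 160]
every_pixel_off_by_one = [101, 121, 141, 161]
half_the_pixels_off_by_one = [101, 120, 141, 160]

print(round(psnr(original, every_pixel_off_by_one), 1))      # about 48.1 dB
print(round(psnr(original, half_the_pixels_off_by_one), 1))  # about 51.1 dB
```

Because the scale is logarithmic, each additional 10 dB corresponds to a tenfold reduction in mean squared error, so the gap between roughly 40 and roughly 51 is larger than the raw numbers suggest.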

Another noteworthy development came from Microsoft’s InvisMark, which employed "worst-case" optimization. By identifying the most damaging attack scenarios and incorporating them into training, InvisMark strengthened the decoder against the toughest challenges [3].

Industry Applications and Use Cases

The AI techniques outlined earlier are reshaping how we protect digital content, especially as the rise of AI-generated material increases the need for stronger safeguards [1]. From media companies battling piracy to healthcare providers securing patient data, durable watermarking has become a key defense. These challenges have led to diverse applications across industries, reinforcing the importance of effective watermarking solutions.

Applications in Media and Content Creation

Enhanced watermarking techniques provide practical answers to pressing content protection issues. Media companies and creators constantly struggle to prevent unauthorized distribution. Traditional methods, like C2PA metadata, can be easily stripped away by social media platforms or malicious users. Watermarking offers a "soft binding" approach, embedding origin information directly into the content so it remains intact even when metadata is removed [3].

For brands and agencies, watermarking ensures the security of promotional materials without sacrificing visual quality. AI-powered tools like InvisMark and AdvMark embed nearly invisible identifiers into high-resolution images and videos, enabling traceability. InvisMark, for example, maintains over 97% bit accuracy even after various image manipulations while achieving a PSNR of around 51, making the watermark virtually undetectable [3].
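Bit accuracy, the robustness metric mentioned above, is the fraction of payload bits that the decoder recovers correctly. A minimal sketch, with a hypothetical function name and made-up sample bits:

```python
def bit_accuracy(embedded, decoded):
    """Fraction of positions where the decoded bit matches the embedded bit."""
    if not embedded or len(embedded) != len(decoded):
        raise ValueError("bit sequences must be non-empty and the same length")
    matches = sum(a == b for a, b in zip(embedded, decoded))
    return matches / len(embedded)

embedded = [1, 0, 1, 1, 0, 0, 1, 0]
decoded = [1, 0, 1, 0, 0, 0, 1, 0]     # one bit was corrupted by an edit

print(bit_accuracy(embedded, decoded))  # 0.875
```

Note that random guessing already yields about 50% on a binary payload, so scores should be read against that baseline rather than against zero.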

The need for these tools is especially urgent in the fight against deepfakes and misinformation. A 2023 Microsoft survey conducted across 17 countries found that 71% of respondents were concerned about AI-driven scams, and 69% expressed worries about deepfakes and online abuse [1].

In education, durable watermarking supports academic integrity by identifying AI-generated text or images, helping to curb plagiarism. Healthcare institutions use watermarking to verify the authenticity of AI-generated medical predictions – like diagnoses or prognoses – and to secure sensitive medical data from tampering [1]. Similarly, financial organizations rely on these techniques to combat identity fraud and AI-driven scams, such as the impersonation of public figures or officials [1].

ScoreDetect’s AI-Powered Solutions

ScoreDetect offers a comprehensive platform for digital content protection, leveraging advanced AI and blockchain technology. Its system operates in four stages – Prevent, Discover, Analyze, and Take Down – providing a streamlined approach to combating piracy and unauthorized use. Invisible, non-invasive watermarking prevents misuse of content, while intelligent web scraping achieves a 95% success rate in bypassing anti-scraping measures [Enterprise plan].

When potential violations are detected, ScoreDetect’s AI-powered analysis identifies and matches the infringing content, delivering quantitative proof of unauthorized usage. The platform automates the process of sending delisting notices, achieving a 96%+ take down rate [Enterprise plan]. This end-to-end solution is particularly valuable for industries like media and entertainment, marketing, legal services, and healthcare, all of which face unique challenges in protecting their content.

ScoreDetect also incorporates blockchain technology by capturing a checksum of the content, strengthening copyright claims without storing the actual digital assets. For content creators and publishers, the WordPress plugin automatically logs every published or updated article, creating verifiable proof of ownership on the blockchain while also boosting SEO. These AI-driven measures provide a strong foundation for tackling modern digital content threats.
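Capturing a checksum rather than the asset itself can be sketched with a cryptographic hash. The example below assumes SHA-256, which is a common choice but is not confirmed by the description above. The function name is hypothetical, and the step of recording the digest on a blockchain is not shown.

```python
import hashlib

def content_checksum(data: bytes) -> str:
    """Return a hex digest that identifies the content without revealing it."""
    return hashlib.sha256(data).hexdigest()

article_v1 = b"Invisible watermarks protect digital content."
article_v2 = b"Invisible watermarks protect digital content!"

checksum_v1 = content_checksum(article_v1)
checksum_v2 = content_checksum(article_v2)

print(checksum_v1)                 # 64 hex characters
print(checksum_v1 == checksum_v2)  # False: any change yields a different digest
```

Only the digest would need to be stored as a timestamped record. Anyone holding the original file can later recompute the hash and show that it matches.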

Challenges, Vulnerabilities, and Future Directions

Current Vulnerabilities in Watermarking Systems

Even with advancements in AI-powered watermarking, these systems still face several weaknesses that threaten their reliability. One of the biggest threats comes from regeneration attacks, where attackers can strip away invisible watermarks by introducing random noise to an image and then reconstructing it using diffusion models or denoising algorithms. Studies have demonstrated that pixel-level invisible watermarks can be completely removed using generative AI, with some attack scenarios resulting in a 0% detection rate [9].
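The fragility of pixel-level watermarks can be shown with a toy experiment. The sketch below hides bits in the least significant bit of each pixel, then applies a crude "regeneration" step that adds noise and smooths the result. Both parts are simplifying assumptions: least-significant-bit embedding is far weaker than the learned watermarks discussed here, and a moving average is only a stand-in for reconstruction with a diffusion model or denoiser.

```python
import random

def embed_lsb(pixels, bits):
    """Write one payload bit into the least significant bit of each pixel."""
    return [(p & ~1) | b for p, b in zip(pixels, bits)]

def extract_lsb(pixels):
    """Read the least significant bit of each pixel."""
    return [p & 1 for p in pixels]

def regenerate(pixels, rng, sigma=8.0):
    """Toy regeneration attack: add Gaussian noise, then smooth with a moving average."""
    noisy = [p + rng.gauss(0.0, sigma) for p in pixels]
    smoothed = []
    for i in range(len(noisy)):
        window = noisy[max(0, i - 1):i + 2]
        smoothed.append(sum(window) / len(window))
    return [min(255, max(0, round(v))) for v in smoothed]

rng = random.Random(1)
pixels = [rng.randrange(256) for _ in range(512)]
bits = [rng.randrange(2) for _ in range(512)]

marked = embed_lsb(pixels, bits)
assert extract_lsb(marked) == bits          # perfect recovery before the attack

attacked = regenerate(marked, rng)
recovered = extract_lsb(attacked)
accuracy = sum(a == b for a, b in zip(bits, recovered)) / len(bits)
print(accuracy)  # close to 0.5, i.e. no better than guessing
```

Once bit accuracy falls to chance level, a detector has nothing left to distinguish marked from unmarked content.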

Another pressing issue is adversarial attacks. Techniques like WEvade craft adversarial images that disrupt decoders, causing them either to fail or to extract incorrect messages, while making only subtle, nearly imperceptible changes [6]. Reliably detecting such manipulations may require deep-learning-based steganalysis. Frameworks such as AdvMark, discussed earlier, show progress in countering these attacks but also underscore the challenges that remain.

Traditional strategies, like joint adversarial training, can sometimes reduce accuracy when dealing with clean images. Furthermore, large-scale text-to-image models can distort watermarks during processes like global or local editing, image-to-video generation, or image regeneration [4]. Watermarks, while intended to protect content, can unintentionally alter the distribution of generated images. This can expose details about the watermarking technique, making it easier for attackers to target and remove them [5]. These vulnerabilities enable bad actors to either erase watermarks to disguise AI-generated content as human-made or create fake watermarks to falsely credit AI models for human-created works [11].

As Li Guanlin, a researcher at Shannon.AI, explains:

"If the watermark on AIGC is easy to remove or forge, we can freely make others believe an artwork is generated by AI by adding a watermark, or an AIGC is created by humans by removing the watermark." [11]

Addressing these vulnerabilities is crucial as researchers work on the next generation of watermarking systems that aim to preserve semantic meaning and incorporate cryptographic enhancements. These ongoing challenges have inspired new research directions that are reshaping watermark design.

Future Directions for AI-Driven Watermarking

To overcome these challenges, researchers are focusing on semantic-preserving watermarks. Unlike pixel-based watermarks, these are designed to remain detectable even if low-level pixel data is altered [9][7]. Xuandong Zhao, a lead author from the University of California, Santa Barbara, emphasized this shift:

"Our finding underscores the need for a shift in research/industry emphasis from invisible watermarks to semantic-preserving watermarks" [9]

Another promising approach involves hybrid cryptographic techniques that combine digital signatures and cryptography with watermarking. These methods aim to bolster authentication and make watermarking systems more resilient [11]. Additionally, experts are exploring two-stage defense frameworks that separate protection against adversarial attacks from protection against other distortions, sidestepping the accuracy trade-offs seen in older methods [6][5].

In November 2024, Microsoft Responsible AI introduced InvisMark, a watermarking system designed for high-resolution AI-generated images. Developed by Rui Xu, David Lowe, and their team, InvisMark successfully encoded 256-bit watermarks (UUIDs with error correction) into DALL-E 3 images, achieving a near-perfect decoding rate and a PSNR of 51.4 [3]. This increased bit capacity allows for error-correcting codes, ensuring reliable decoding even under significant distortions.
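To see how a UUID fits into such a payload, the sketch below converts a UUID into a bit list and back. It assumes the standard 128-bit UUID format, which would leave the other 128 bits of a 256-bit payload available for error-correction redundancy. The helper names are hypothetical, and the actual encoding used by InvisMark is not reproduced here.

```python
import uuid

def uuid_to_bits(u: uuid.UUID) -> list:
    """Expand a UUID into 128 bits, most significant bit first."""
    return [(u.int >> (127 - i)) & 1 for i in range(128)]

def bits_to_uuid(bits) -> uuid.UUID:
    """Rebuild a UUID from 128 bits, most significant bit first."""
    value = 0
    for b in bits:
        value = (value << 1) | b
    return uuid.UUID(int=value)

asset_id = uuid.UUID("12345678-1234-5678-1234-567812345678")  # example identifier
payload_bits = uuid_to_bits(asset_id)

print(len(payload_bits))                       # 128
print(bits_to_uuid(payload_bits) == asset_id)  # True
```

In a full pipeline, these 128 bits would be passed through an error-correcting encoder before embedding, and the decoder output would be corrected before being converted back into an identifier.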

Another emerging trend is cross-modal detection. By May 2025, Google’s SynthID technology had watermarked over 10 billion pieces of content. This system offers a unified portal capable of detecting watermarks across various formats, including text, audio, images, and video [12]. Pushmeet Kohli, VP of Science and Strategic Initiatives at Google DeepMind, highlighted its strengths:

"SynthID not only preserves the content’s quality, it acts as a robust watermark that remains detectable even when the content is shared or undergoes a range of transformations" [12]

Li Guanlin from Shannon.AI summed up the ongoing battle between watermarking systems and those attempting to bypass them:

"It will always be a cat-and-mouse game… That is why we need to keep updating our watermarking schemes." [11]

Conclusion

AI-powered watermarking is reshaping how digital content is protected. Traditional pixel-based methods fall short against advanced attacks, such as those using diffusion models. By embedding watermarks into the latent space of AI models and utilizing error-correcting codes like BCH and LDPC, modern systems can retrieve identification data even after significant distortions.

The numbers speak for themselves. Google’s SynthID has already watermarked over 10 billion pieces of content [12], showcasing the scalability of these technologies. Meanwhile, decoupled defense frameworks have shown up to a 46% improvement in resisting adversarial attacks compared to older joint optimization methods [6]. These advancements are proving their worth across various sectors.

Industries face high stakes. In media and entertainment, watermarking ensures ownership and tracks content origins. In education, it helps detect AI-generated assignments, preserving academic integrity. For cybersecurity, it offers a reliable defense against identity fraud and deepfakes. With AI-generated elements now present in over 30% of social media images [1], the demand for strong protection is more pressing than ever.

Looking forward, watermarking systems are set to integrate post-quantum cryptography and cross-modal detection. Pushmeet Kohli from Google DeepMind emphasizes the importance of these efforts:

"Content transparency remains a complex challenge. To continue to inform and empower people engaging with AI-generated content, we believe it’s vital to continue collaborating with the AI community and broaden access to transparency tools." [12]

For large-scale digital asset management, tools like ScoreDetect (https://www.scoredetect.com) combine invisible watermarking with blockchain verification and automated takedown workflows, offering robust protection against evolving threats.

As the technology evolves, it continues to balance imperceptibility, robustness, and payload capacity, staying one step ahead of increasingly sophisticated attacks. AI is both a challenge and a powerful ally in the ongoing mission to protect digital content.

FAQs

What’s the difference between GAN and diffusion watermarking?

The main distinction between GAN (Generative Adversarial Network) watermarking and diffusion watermarking lies in their methods and ability to withstand challenges. GAN watermarking relies on neural networks to embed and retrieve watermarks, prioritizing subtlety to ensure the watermark remains unnoticed. However, it can face difficulties when dealing with unpredictable distortions. On the other hand, diffusion watermarking integrates data into noise or patterns during the generative process. This approach enhances its ability to endure advanced attacks and unexpected alterations, making it notably more resilient.

How do error-correcting codes help recover damaged watermarks?

Error-correcting codes play a crucial role in improving watermark recovery by addressing errors introduced during embedding, transmission, or manipulation of digital content. By incorporating redundancy into the watermark data, these codes allow the decoding process to detect and correct distortions caused by factors like compression or noise. This makes it possible to reconstruct the original watermark with precision, even when parts of it are damaged, ensuring greater reliability and resistance to tampering or degradation.

How can watermarking resist regeneration and adversarial attacks?

Watermarking resists regeneration and adversarial attacks by combining several techniques that balance durability against image quality. Adaptive frameworks optimize how and where watermarks are embedded so they remain robust without compromising the content’s integrity, while residual optimization, error-correcting codes, and adversarial training help watermarks survive noise and distortions.

By fine-tuning encoders and employing randomly parameterized decoders, these methods ensure watermarks stay detectable even under aggressive attacks. What’s more, they achieve this resilience without the need for extensive retraining, making the process efficient and reliable.