AI systems are transforming how video tampering is detected, identifying manipulations invisible to the human eye. Tampering typically involves altering the content of individual frames (intra-frame) or modifying the frame sequence itself (inter-frame). These changes can lead to fraud, misinformation, and legal disputes. Traditional methods often fail against advanced techniques, but recent AI methods report over 90% accuracy by analyzing subtle inconsistencies in textures, edges, and noise patterns.

Key insights:

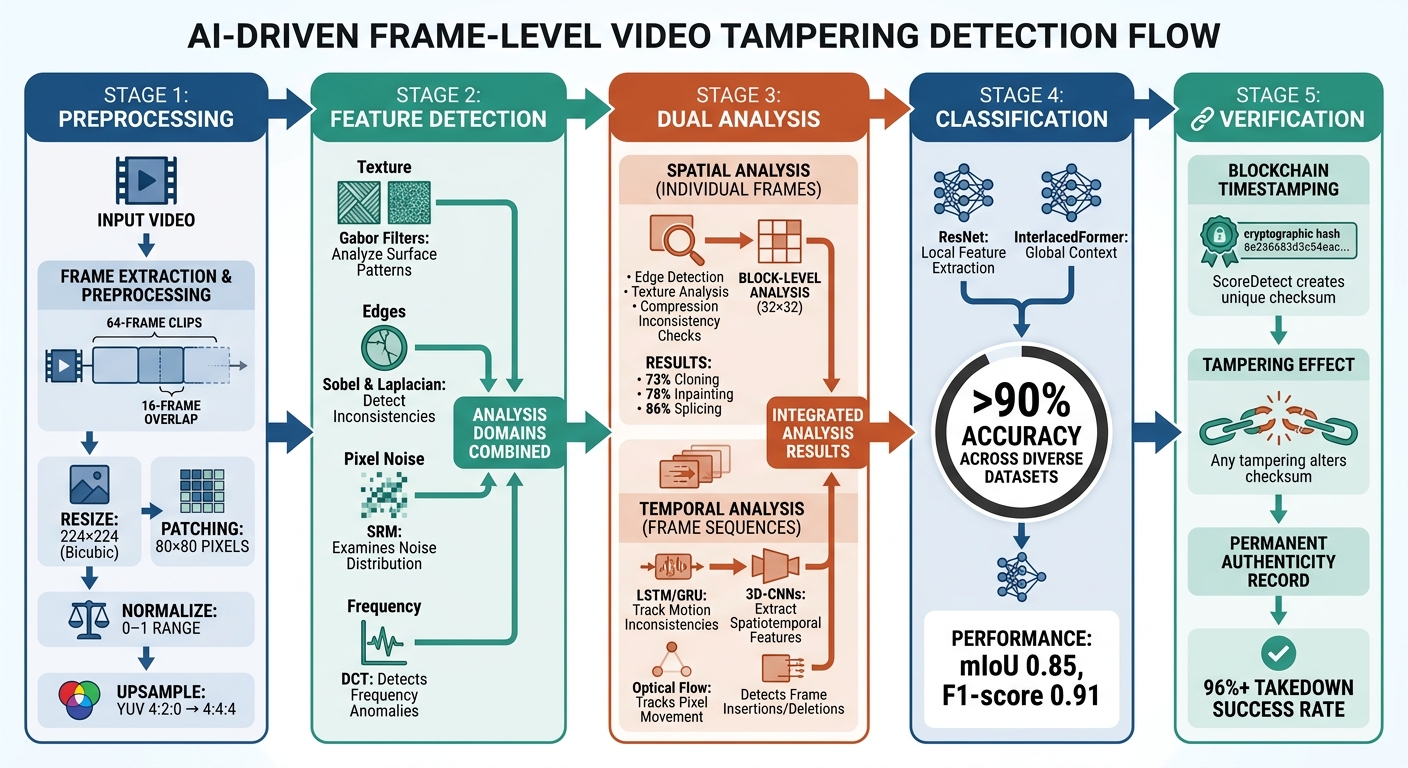

- Preprocessing: Videos are broken down into frames, resized, normalized, and analyzed in 80×80 pixel patches to detect anomalies.

- Detection: AI uses tools like Gabor filters for texture, Sobel operators for edges, and Discrete Cosine Transform (DCT) for frequency analysis.

- Classification: Deep learning models, such as ResNet, identify tampered frames and provide measurable evidence for enforcement.

- Temporal Analysis: Models like LSTM and GRU track motion inconsistencies, revealing frame insertions or deletions.

- Blockchain: Tools like ScoreDetect timestamp a checksum of each video, so any later alteration produces a checksum that no longer matches the recorded one.

AI’s forensic capabilities, combined with blockchain verification, safeguard video integrity across industries, from legal services to media organizations.

AI Video Tampering Detection Process: From Frame Extraction to Classification


How AI Analyzes Video Frames

AI breaks down video content frame by frame to detect even the smallest signs of tampering. This process involves two key steps: preparing the video data and then analyzing it for patterns that indicate manipulation. Together, these steps form the foundation of modern video forensic techniques. These methods are often complemented by invisible watermarks to ensure long-term content integrity.

Frame Extraction and Preprocessing

Before detecting tampering, AI systems must first prepare the raw video data. This starts by dividing the video into overlapping clips (e.g., 64 frames per clip with a 16-frame overlap) to maintain the flow of motion and context. Spatiotemporal Average Pooling (STP) is then applied to emphasize movement patterns and highlight inconsistencies [1].
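The clip segmentation described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the 64-frame clip length and 16-frame overlap from the example; the function name `clip_windows` and the choice to append one end-aligned clip for leftover frames are our own assumptions, not details taken from [1]. The pooling step (STP) is not shown.

```python
from typing import List, Tuple


def clip_windows(num_frames: int, clip_len: int = 64, overlap: int = 16) -> List[Tuple[int, int]]:
    """Split a video of num_frames frames into overlapping clips.

    Returns (start, end) index pairs with end exclusive. Consecutive clips
    share `overlap` frames, so motion that crosses a clip boundary is still
    seen whole by at least one clip.
    """
    if not 0 <= overlap < clip_len:
        raise ValueError("overlap must be in [0, clip_len)")
    if num_frames <= 0:
        return []
    if num_frames <= clip_len:
        return [(0, num_frames)]  # short video: a single, shorter clip

    stride = clip_len - overlap  # 64 - 16 = 48 frames between clip starts
    windows = []
    start = 0
    while start + clip_len <= num_frames:
        windows.append((start, start + clip_len))
        start += stride
    # Add one end-aligned clip so trailing frames are not silently dropped;
    # this last clip may overlap its neighbour by more than `overlap` frames.
    if windows[-1][1] < num_frames:
        windows.append((num_frames - clip_len, num_frames))
    return windows


print(clip_windows(200))  # [(0, 64), (48, 112), (96, 160), (136, 200)]
```

A fixed stride keeps the number of clips predictable; the end-aligned extra clip trades a little redundant computation for full coverage of the last frames.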

Next, individual frames are processed to reduce noise and improve the detection of anomalies. For example, 3×3 averaging filters are used to smooth out random pixel variations [1]. Frames are resized to a standard 224×224 pixels using bicubic interpolation, which produces smoother textures and fewer artifacts than simpler resizing techniques [1]. Additionally, the chroma planes are upsampled from YUV 4:2:0 to 4:4:4 so that all three channels share the luma resolution and can be analyzed pixel by pixel, and pixel values are normalized to a 0–1 range, which helps neural networks process the data efficiently [3][4].
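A NumPy-only sketch of the noise-reduction, chroma-upsampling, and normalization steps follows. It assumes 8-bit planar YUV 4:2:0 input, uses nearest-neighbour chroma upsampling for simplicity (production converters usually apply an interpolation filter), and applies the steps in an order we chose for convenience; the function names are hypothetical. The 224×224 bicubic resize is left to a library call such as OpenCV’s `cv2.resize(frame, (224, 224), interpolation=cv2.INTER_CUBIC)`.

```python
import numpy as np


def box_filter_3x3(channel: np.ndarray) -> np.ndarray:
    """3x3 averaging filter; borders are handled by replicating edge pixels."""
    padded = np.pad(channel.astype(np.float64), 1, mode="edge")
    h, w = channel.shape
    acc = np.zeros((h, w), dtype=np.float64)
    for dy in range(3):  # sum the 9 shifted copies of the frame
        for dx in range(3):
            acc += padded[dy:dy + h, dx:dx + w]
    return acc / 9.0


def yuv420_to_444(y: np.ndarray, u: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Bring half-resolution U/V planes up to luma size (nearest neighbour)."""
    h, w = y.shape

    def up(c: np.ndarray) -> np.ndarray:
        # Duplicate each chroma sample 2x2, then crop for odd frame sizes
        return np.repeat(np.repeat(c, 2, axis=0), 2, axis=1)[:h, :w]

    return np.stack([y, up(u), up(v)], axis=-1)


def preprocess_frame(y, u, v, bit_depth: int = 8) -> np.ndarray:
    """Upsample chroma, smooth each channel, and scale values to [0, 1]."""
    yuv = yuv420_to_444(y, u, v)
    smoothed = np.stack([box_filter_3x3(yuv[..., c]) for c in range(3)], axis=-1)
    return (smoothed / (2 ** bit_depth - 1)).astype(np.float32)
```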

To pinpoint tampering, AI systems divide frames into 80×80 pixel patches. This helps localize issues like compression artifacts or irregularities along edges [3]. Some methods also use an "absolute difference algorithm" to compare consecutive frames, isolating changes and filtering out redundant data. This streamlined data is then processed by 3D-CNNs for further analysis [2]. Each step in this preprocessing pipeline is carefully designed to ensure that even minor manipulations are detectable.
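The patch tiling and the absolute-difference step can be illustrated together. This sketch assumes grayscale frames stacked as a `(T, H, W)` array and non-overlapping 80×80 tiles; the helper names and the mean-difference threshold are hypothetical, and in the cited work the difference frames feed a 3D-CNN rather than a fixed threshold.

```python
import numpy as np


def extract_patches(frame: np.ndarray, size: int = 80):
    """Yield (row, col, patch) for non-overlapping size x size tiles.

    Border pixels that do not fill a whole tile are skipped in this sketch.
    """
    h, w = frame.shape[:2]
    for r in range(0, h - size + 1, size):
        for c in range(0, w - size + 1, size):
            yield r, c, frame[r:r + size, c:c + size]


def abs_frame_difference(frames: np.ndarray) -> np.ndarray:
    """|F[t+1] - F[t]| for a (T, H, W) stack; static content cancels to zero."""
    f = frames.astype(np.float32)  # cast first so uint8 subtraction cannot wrap
    return np.abs(f[1:] - f[:-1])


def changed_patches(diff: np.ndarray, size: int = 80, threshold: float = 10.0):
    """(row, col) of tiles whose mean difference exceeds a hypothetical threshold."""
    return [(r, c) for r, c, p in extract_patches(diff, size) if p.mean() > threshold]
```

Because redundant (unchanged) regions difference to zero, only tiles with genuine change survive, which is the data reduction the paragraph describes.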

Once the frames are prepped, the system moves on to extracting forensic features.

Feature Detection and Pattern Analysis

After preprocessing, AI shifts its focus to identifying forensic fingerprints – subtle clues left behind by tampering. These fingerprints are detected across four main domains: texture, edges, pixel noise, and frequency. Each domain provides unique insights into potential manipulation.

- Texture: Gabor filters analyze surface patterns to detect irregularities.

- Edges: Sobel and Laplacian operators identify inconsistencies in edges.

- Pixel Noise: The Spatial Rich Model (SRM) examines noise distribution for anomalies.

- Frequency: Discrete Cosine Transform (DCT) is used to detect tampering in the frequency domain [4].
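For the frequency domain, a common starting point is the 8×8 block DCT used by JPEG-style codecs. The sketch below builds an orthonormal DCT-II basis in NumPy and measures, per block, how much AC energy sits in higher frequencies; the 8×8 block size, the `cutoff` rule, and the function names are illustrative assumptions rather than the feature set used in [4].

```python
import numpy as np


def dct_matrix(n: int = 8) -> np.ndarray:
    """Orthonormal DCT-II basis; row k is the k-th cosine basis vector."""
    k = np.arange(n)[:, None]
    x = np.arange(n)[None, :]
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * x + 1) * k / (2 * n))
    m[0, :] = np.sqrt(1.0 / n)  # DC row uses the smaller normalization
    return m


def block_dct(gray: np.ndarray, n: int = 8) -> np.ndarray:
    """2D DCT of each non-overlapping n x n block -> shape (rows, cols, n, n)."""
    h, w = (gray.shape[0] // n) * n, (gray.shape[1] // n) * n
    blocks = (gray[:h, :w].astype(np.float64)
              .reshape(h // n, n, w // n, n)
              .swapaxes(1, 2))  # (rows, cols, n, n)
    c = dct_matrix(n)
    return c @ blocks @ c.T  # matmul broadcasts over the block grid


def high_freq_ratio(coeffs: np.ndarray, cutoff: int = 4, eps: float = 1e-9) -> np.ndarray:
    """Per block: share of AC energy in coefficients with u + v >= cutoff."""
    n = coeffs.shape[-1]
    u, v = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    energy = coeffs ** 2
    ac = energy.sum(axis=(-2, -1)) - energy[..., 0, 0]
    high = energy[..., (u + v) >= cutoff].sum(axis=-1)
    # Flat blocks have (numerically) no AC energy; report 0 instead of noise
    return np.where(ac > eps, high / np.maximum(ac, eps), 0.0)
```

Heavily compressed regions lose high-frequency energy, so a map of this ratio can expose areas whose compression history differs from the rest of the frame.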

"Any processing can leave a forensic fingerprint on the pixels of a video sequence. Analysing this fingerprint can provide evidence of video tampering, such as splicing, inpainting or inter-frame tampering." – Springer Nature [3]

AI also estimates compression parameters, such as Quantization Parameter (QP) and deblocking filter settings, to spot mismatches with the video’s metadata [3]. For temporal analysis, advanced models like Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRU) are employed to track inconsistencies across frames. These tools can detect subtle manipulations, such as frame deletions or insertions, by analyzing long-range dependencies in pixel values [1].

Deep learning models trained on these features achieve impressive detection accuracy, even in cases where human evaluators struggle to identify tampering [3].

Detecting Tampering with Spatial and Temporal Analysis

When AI processes video frames, it employs two main approaches to identify tampering: spatial analysis and temporal analysis. Spatial analysis inspects individual frames for visual irregularities, while temporal analysis looks at how frames relate to one another over time. Together, these methods refine the detection process by isolating both static and dynamic anomalies.

Spatial Inconsistency Detection

Spatial analysis homes in on irregularities within single frames – issues like mismatched lighting, unnatural edges, or texture differences that can hint at tampering. To catch these, AI models use several advanced detection tools.

- Edge detection tools, such as Sobel and Laplacian operators, are key for spotting brightness changes and texture boundaries where spliced clips meet [4].

- Gabor filters analyze textures across multiple scales and directions, helping to identify footage from different environments that has been stitched together [4].

- At a finer level, the Spatial Rich Model (SRM) examines statistical features like inter-pixel relationships, skewness, and kurtosis. Since deep learning manipulations often disrupt natural pixel patterns, SRM can flag these micro-level anomalies [4].
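The edge and noise-residual features above can be sketched with plain NumPy filtering. The Sobel and Laplacian kernels are the standard 3×3 ones; `SRM_KV` is the 5×5 "KV" high-pass kernel, one member of the much larger SRM filter set. The summary statistics returned here (mean edge magnitude, Laplacian variance, residual skewness and kurtosis) are an illustrative assumption, not the exact features used in [4].

```python
import numpy as np

SOBEL_X = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=np.float64)
SOBEL_Y = SOBEL_X.T
LAPLACIAN = np.array([[0, 1, 0], [1, -4, 1], [0, 1, 0]], dtype=np.float64)
# 5x5 "KV" high-pass kernel, one member of the SRM residual filter family
SRM_KV = np.array([[-1, 2, -2, 2, -1],
                   [2, -6, 8, -6, 2],
                   [-2, 8, -12, 8, -2],
                   [2, -6, 8, -6, 2],
                   [-1, 2, -2, 2, -1]], dtype=np.float64) / 12.0


def filter2d(img: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Same-size 2D cross-correlation with edge-replicated borders."""
    kh, kw = kernel.shape
    padded = np.pad(img.astype(np.float64), ((kh // 2,) * 2, (kw // 2,) * 2), mode="edge")
    h, w = img.shape
    out = np.zeros((h, w))
    for i in range(kh):
        for j in range(kw):
            out += kernel[i, j] * padded[i:i + h, j:j + w]
    return out


def spatial_features(gray: np.ndarray) -> dict:
    """Edge strength plus SRM-residual statistics for one grayscale frame."""
    gx, gy = filter2d(gray, SOBEL_X), filter2d(gray, SOBEL_Y)
    lap = filter2d(gray, LAPLACIAN)
    resid = filter2d(gray, SRM_KV).ravel()  # content suppressed, noise kept
    mu, sigma = resid.mean(), resid.std()
    if sigma < 1e-9:  # flat residual: higher moments are undefined
        skew = kurt = 0.0
    else:
        z = (resid - mu) / sigma
        skew = float(np.mean(z ** 3))        # asymmetry of the noise distribution
        kurt = float(np.mean(z ** 4) - 3.0)  # excess kurtosis (0 for Gaussian noise)
    return {
        "edge_mean": float(np.hypot(gx, gy).mean()),
        "laplacian_var": float(lap.var()),
        "residual_std": float(sigma),
        "residual_skewness": skew,
        "residual_kurtosis": kurt,
    }
```

Computing these per patch rather than per frame lets a spliced region with different noise statistics stand out against its surroundings.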

Another telltale sign of tampering is compression inconsistencies. For example, when a video’s visible "blockiness" doesn’t align with its claimed high bitrate, it points to recompression and potential manipulation [3].
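A simple way to quantify visible blockiness is to compare pixel jumps that straddle 8×8 block boundaries with jumps inside blocks. The sketch below assumes an 8-pixel block grid aligned to the frame origin; `blockiness_ratio` and the thresholds in `blockiness_contradicts_bitrate` are hypothetical and would need calibration, and this is a generic heuristic rather than the estimator used in [3].

```python
import numpy as np


def blockiness_ratio(gray: np.ndarray, block: int = 8) -> float:
    """Mean pixel jump across block boundaries divided by the jump inside blocks.

    About 1.0 means no visible grid; values well above 1.0 suggest strong
    block artifacts, as left by low-bitrate or repeated compression.
    """
    g = gray.astype(np.float64)
    dh = np.abs(np.diff(g, axis=1))  # dh[:, j] = |g[:, j+1] - g[:, j]|
    dv = np.abs(np.diff(g, axis=0))
    # Difference j straddles a boundary when j + 1 is a multiple of `block`
    h_edge = (np.arange(dh.shape[1]) + 1) % block == 0
    v_edge = (np.arange(dv.shape[0]) + 1) % block == 0
    boundary = np.concatenate([dh[:, h_edge].ravel(), dv[v_edge, :].ravel()])
    interior = np.concatenate([dh[:, ~h_edge].ravel(), dv[~v_edge, :].ravel()])
    if boundary.size == 0 or interior.size == 0:
        raise ValueError("frame too small for the chosen block size")
    return float(boundary.mean() / (interior.mean() + 1e-9))


def blockiness_contradicts_bitrate(ratio: float, claimed_kbps: float,
                                   high_kbps: float = 8000.0, max_ratio: float = 1.5) -> bool:
    """Flag frames that look blocky although the metadata claims a high bitrate."""
    return bool(claimed_kbps >= high_kbps and ratio > max_ratio)
```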

A notable advancement came in January 2025, when researchers Sumaiya Shaikh and Sathish Kumar Kannaiah introduced the DLBA framework. Using the MobileNetV2 architecture, this system analyzed video frames in 32×32-pixel blocks. The results were impressive: a true positive rate of 73% for cloning, 78% for inpainting, and 86% for splicing forgeries. This highlighted the effectiveness of block-level analysis in detecting subtle anomalies that broader assessments might overlook [5].

"Compression formats have been designed with the human visual system in mind, and the effects remain largely below the threshold of detection for human eyes." – Neural Computing and Applications [3]

Temporal Artifact Detection

While spatial analysis focuses on individual frames, temporal analysis evaluates the flow between them. It looks for motion inconsistencies or abrupt changes that could signal tampering. Advanced models like RNNs (including LSTM and GRU architectures) are particularly adept at detecting long-range dependencies in video sequences, which can reveal traces of frame deletion or insertion [1]. Similarly, 3D-CNNs extract spatiotemporal features by analyzing both spatial and temporal dimensions simultaneously [1][2].
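To make the recurrent idea concrete, here is the forward pass of a single GRU layer in NumPy, consuming one feature vector per frame (for example, pooled CNN features). The weights are random and untrained, so the outputs carry no forensic meaning; a real detector would learn these weights, typically in a deep learning framework, and add a classification head. The class name and dimensions are hypothetical, and the gate equations follow one common GRU formulation.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


class MinimalGRU:
    """Single-layer GRU forward pass with random (untrained) weights."""

    def __init__(self, input_dim: int, hidden_dim: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        s = 1.0 / np.sqrt(hidden_dim)
        # One (W, U, b) triple per gate: update "z", reset "r", candidate "n"
        self.params = {
            gate: (rng.uniform(-s, s, (hidden_dim, input_dim)),
                   rng.uniform(-s, s, (hidden_dim, hidden_dim)),
                   np.zeros(hidden_dim))
            for gate in ("z", "r", "n")
        }
        self.hidden_dim = hidden_dim

    def run(self, seq: np.ndarray) -> np.ndarray:
        """seq: (T, input_dim) per-frame features -> (T, hidden_dim) hidden states."""
        if len(seq) == 0:
            return np.zeros((0, self.hidden_dim))
        (wz, uz, bz), (wr, ur, br), (wn, un, bn) = (self.params[g] for g in ("z", "r", "n"))
        h = np.zeros(self.hidden_dim)
        states = []
        for x in seq:
            z = sigmoid(wz @ x + uz @ h + bz)        # update gate: how much to overwrite
            r = sigmoid(wr @ x + ur @ h + br)        # reset gate: how much history to use
            n = np.tanh(wn @ x + un @ (r * h) + bn)  # candidate state
            h = (1.0 - z) * h + z * n                # carries context across frames
            states.append(h)
        return np.stack(states)
```

The hidden state is what lets the model compare frame t with frames long before it, which is how inserted or deleted segments can stand out.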

Another critical tool is optical flow, which tracks pixel movement between frames. Sudden changes or irregularities in motion patterns often suggest that a segment has been spliced or an object removed [1][4]. Additionally, mismatched compression parameters across frames can indicate tampering and recompression [3]. This combination of spatial and temporal analysis ensures the precision needed for modern video forensics. This is especially critical for the media and entertainment industry, where digital asset integrity is paramount.
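Full optical-flow estimation is beyond a short example, but a much simpler stand-in conveys the idea: measure how much each frame differs from the previous one and flag transitions that are statistical outliers, the kind of jump an inserted or deleted segment often produces. The sketch below uses a robust (median/MAD) z-score; the 3.5 threshold is a common outlier rule of thumb, and the function name is hypothetical. Ordinary scene cuts trigger it too, which is why the cited methods rely on learned models instead.

```python
import numpy as np


def flag_discontinuities(frames: np.ndarray, z_thresh: float = 3.5) -> list:
    """Indices t where the change from frame t to frame t+1 is an outlier.

    frames: (T, H, W) grayscale stack. Uses a robust z-score built from the
    median and median absolute deviation (MAD) of per-transition change.
    """
    f = frames.astype(np.float64)
    change = np.abs(np.diff(f, axis=0)).mean(axis=(1, 2))  # one value per transition
    med = np.median(change)
    mad = np.median(np.abs(change - med))
    mad = max(mad, 1e-9)  # perfectly steady footage would otherwise divide by zero
    z = 0.6745 * (change - med) / mad
    return np.flatnonzero(z > z_thresh).tolist()
```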

In June 2024, researchers Naheed Akhtar and Zulfiqar Habib unveiled the DEEP-STA method, which fused a 2D-CNN for spatiotemporal analysis with LSTM/GRU units. This method achieved over 90% accuracy in detecting tampered regions across diverse datasets, even when only 10 frames were altered. Its robustness extended across various frame rates, formats, and compression qualities [1].

"The proposed method can identify and pinpoint tampering regions with more than 90% accuracy, irrespective of video frame rates, video formats, number of tampering frames, and the compression quality factor." – Naheed Akhtar, Department of Computer Science, COMSATS University Islamabad [1]

AI-Powered Tampering Classification

After AI identifies spatial and temporal anomalies in video frames, the next step is classification: deciding whether a frame is authentic or tampered and, if tampered, which type of manipulation was applied. This stage uses advanced deep learning models that not only detect irregularities but also provide measurable evidence to support enforcement actions.

Using ResNet Models for Classification

ResNet models play a key role in frame-level tampering classification because they excel at capturing detailed features like edges, textures, and pixel-level inconsistencies. These models are often part of multi-stage frameworks that combine local and global feature analysis [1][4].

For instance, researchers designed the UVL2 framework, which uses a ResNet module for extracting local features and an InterlacedFormer for analyzing global context. When applied to the DAVIS-VI dataset – featuring 33,550 frames across 300 tampered videos – the model achieved a mean Intersection over Union (mIoU) of 0.85 and an F1-score of 0.91. This study highlighted that examining diverse feature types (like edges, textures, and frequency domains) boosts the model’s ability to detect unfamiliar tampering techniques [4].
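The localization metrics quoted above compare a predicted tamper mask with a ground-truth mask. A minimal sketch for binary masks follows; it averages IoU per frame to obtain a mean IoU, which is an assumption, since papers differ in how they average (per frame, per video, or per class). The function names are hypothetical.

```python
import numpy as np


def iou_f1(pred: np.ndarray, truth: np.ndarray):
    """IoU and F1 (Dice) of one binary tamper mask against ground truth."""
    p, t = pred.astype(bool), truth.astype(bool)
    tp = np.logical_and(p, t).sum()   # tampered pixels correctly flagged
    fp = np.logical_and(p, ~t).sum()  # authentic pixels wrongly flagged
    fn = np.logical_and(~p, t).sum()  # tampered pixels missed
    union = tp + fp + fn
    if union == 0:                    # both masks empty: count as a perfect match
        return 1.0, 1.0
    return float(tp / union), float(2 * tp / (2 * tp + fp + fn))


def mean_iou(preds, truths) -> float:
    """Average per-frame IoU over paired prediction/ground-truth masks."""
    return float(np.mean([iou_f1(p, t)[0] for p, t in zip(preds, truths)]))
```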

To address the heavy computational demands of high-dimensional feature analysis, researchers often use autoencoders to reduce data dimensions while maintaining detection accuracy. Additionally, ResNet is integrated into Siamese network architectures for tasks like verifying frame duplication, enabling a more refined detection process [1].

| Pipeline Stage | Model / Method / Metric | Role in Tampering Detection |
|---|---|---|
| Local Spatial Features | ResNet / VGG-16 | Identifies edge artifacts, texture mismatches, and pixel noise [1][4] |
| Global and Temporal Features | ViT / LSTM / GRU | Examines sequence inconsistencies and long-range dependencies [1][4] |
| Quantitative Proof | SSIM / mIoU / F1-Score | Provides measurable evidence for localization and enforcement [1][4] |
| Classification Output | Softmax Activation | Generates probabilities for different tampering types [1] |

These outputs are then evaluated with quantitative metrics that show how reliably tampering is detected and localized.
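The softmax step in the last row of the table turns a classifier’s raw scores (logits) into probabilities that sum to one. The label set and scores below are hypothetical, chosen only to illustrate multi-class tampering output.

```python
import numpy as np

# Hypothetical label set for illustration only
LABELS = ["authentic", "splicing", "inpainting", "frame_insertion", "frame_deletion"]


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    z = logits - np.max(logits, axis=-1, keepdims=True)  # shifting leaves the result unchanged
    e = np.exp(z)
    return e / np.sum(e, axis=-1, keepdims=True)


scores = np.array([0.2, 2.5, 0.1, -1.0, -0.3])  # made-up logits for one frame
probs = softmax(scores)
print(LABELS[int(np.argmax(probs))], probs.round(3))  # top label here is "splicing"
```

Subtracting the maximum before exponentiating avoids overflow for large logits without changing the probabilities.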

Quantitative Proof for Tampering Detection

After extracting detailed frame-level features, AI models generate quantitative evidence to support tampering classification. These systems go beyond flagging suspicious frames – they produce measurable proof that can back actions like copyright enforcement and takedown requests. This quantifiable evidence is essential for legal and rights enforcement [1][2].

By analyzing the texture, edge, pixel-noise, and frequency domains, advanced models deliver quantitative evidence of inconsistencies in video data [4]. Some systems even create "grayscale video" outputs, where white pixels highlight tampered areas and gray indicates original content, providing clear visual evidence [4]. This precision matters: studies show deep neural networks can detect manipulated content with less than 1% error on specific datasets, while human evaluators often do no better than random guessing when asked to identify fake content [3].
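Rendering such a grayscale evidence video from per-frame tamper masks is a simple mapping. This sketch assumes boolean masks shaped `(T, H, W)` and uses 128 as the "gray" level, which is an assumed value; it also returns the tampered fraction of each frame as a per-frame summary. The function name is hypothetical.

```python
import numpy as np


def masks_to_evidence_video(masks: np.ndarray, gray_level: int = 128):
    """White (255) where tampered, gray elsewhere; also per-frame tampered fraction."""
    m = masks.astype(bool)
    video = np.where(m, 255, gray_level).astype(np.uint8)
    tampered_fraction = m.mean(axis=(1, 2))  # share of flagged pixels in each frame
    return video, tampered_fraction
```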

AI’s ability to quantify tampering turns it from a simple detection tool into a forensic instrument. Techniques such as Quantization Parameter (QP) analysis for recompression traces and multi-scale SSIM for artifact identification provide defensible evidence that complements proactive protections such as digital watermarking [2][3]. These methods not only pinpoint tampered content but also deliver the hard evidence required for enforcing digital rights effectively.
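For reference, the SSIM index combines the means, variances, and covariance of two images. The sketch below computes a single global SSIM value over whole frames with the usual constants (K1 = 0.01, K2 = 0.03). This is a simplification: standard SSIM averages the same formula over sliding Gaussian windows (as in scikit-image’s `skimage.metrics.structural_similarity`), and multi-scale SSIM further combines results across downsampled scales. The function name is hypothetical.

```python
import numpy as np


def global_ssim(x: np.ndarray, y: np.ndarray, data_range: float = 255.0) -> float:
    """Single-window SSIM over the whole image (simplified, not windowed)."""
    x = x.astype(np.float64)
    y = y.astype(np.float64)
    c1 = (0.01 * data_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * data_range) ** 2  # stabilizes the contrast/structure term
    mx, my = x.mean(), y.mean()
    vx, vy = x.var(), y.var()
    cov = ((x - mx) * (y - my)).mean()
    num = (2 * mx * my + c1) * (2 * cov + c2)
    den = (mx ** 2 + my ** 2 + c1) * (vx + vy + c2)
    return float(num / den)
```

A value of 1.0 means the frames are identical; recompression or local edits pull it below 1.0, and the windowed version shows where.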

ScoreDetect’s Role in Digital Asset Protection

When tampering is suspected, a verifiable record of the original is what allows a video’s authenticity to be checked. Digital asset protection blends proactive embedding with forensic analysis. ScoreDetect computes a unique checksum for your video content and records it on a blockchain, without ever storing the actual video files.

Blockchain Timestamping for Video Integrity

This method offers proof of authenticity that stands up to legal challenges. Timestamping a video through ScoreDetect creates a permanent blockchain record of when that specific version existed. Any change to the video, whether it’s removing frames, splicing, or altering pixels, produces a checksum that no longer matches the recorded one, flagging tampering immediately [1].
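The checksum idea can be illustrated with a cryptographic hash. ScoreDetect’s exact algorithm is not described here, so SHA-256 via Python’s standard `hashlib` module is an assumption, and the function names are hypothetical. Changing even a single byte of the file yields a completely different digest.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large videos need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_recorded(path: str, recorded_hex: str) -> bool:
    """True only if the file is byte-for-byte identical to the timestamped version."""
    return sha256_of_file(path) == recorded_hex


# Demo with a stand-in "video" file
with tempfile.TemporaryDirectory() as tmp:
    video = os.path.join(tmp, "clip.bin")
    with open(video, "wb") as f:
        f.write(b"\x00" * 1000)
    recorded = sha256_of_file(video)          # value that would be timestamped
    print(matches_recorded(video, recorded))  # True
    with open(video, "r+b") as f:             # flip one byte in place
        f.seek(500)
        f.write(b"\x01")
    print(matches_recorded(video, recorded))  # False
```

Because the hash covers raw bytes, a harmless re-encode or container change also breaks the match; a mismatch shows that the file changed, and the forensic techniques described earlier help establish what changed and where.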

For industries like media, legal services, or government agencies managing sensitive video evidence, this blockchain-backed integrity is a reliable tool for resolving copyright disputes and enforcing ownership rights.

ScoreDetect also integrates with more than 6,000 web apps via Zapier automation. Additionally, its WordPress plugin automatically timestamps every video published or updated. This automation provides continuous protection for creators and businesses alike.

Beyond authenticity verification, advanced content analysis adds another layer of security to digital assets.

Content Analysis and Protection

ScoreDetect’s tamper verification is one part of a broader protection strategy. For companies dealing with advanced tampering threats, InCyan – ScoreDetect’s parent platform – provides additional tools to complement blockchain timestamping.

InCyan’s offerings include:

- Tectus: Embeds invisible watermarks that remain intact through compression and cropping.

- Idem: Uses multimodal matching to identify ownership, even if only 10% of the original video is available.

- Indago: Rapidly removes unauthorized video listings, de-indexing infringing links in under 60 minutes.

Indago works at search-engine speed, targeting piracy at its source by stopping traffic to unauthorized content. Combined with ScoreDetect’s tampering detection, this creates a robust enforcement system. Businesses can timestamp their original videos, identify unauthorized copies with AI-powered matching, and initiate takedowns with measurable outcomes. Enterprise users of ScoreDetect consistently achieve takedown rates exceeding 96%, thanks to automated delisting notices and rapid response times.

Conclusion

AI-powered frame-level detection plays a key role in protecting video integrity, with published methods reporting over 90% accuracy in identifying tampering through subtle digital fingerprints that are invisible to the human eye [1][3].

For industries handling sensitive video evidence – like legal services, government agencies, and media organizations – these tools address critical vulnerabilities. Researchers at the Institute of Information Engineering emphasize the risks:

"Malicious video tampering can lead to public misunderstanding, property losses, and legal disputes" [4].

Modern AI systems can detect even minor alterations, such as a change spanning just 10 frames, helping establish whether surveillance footage and other video recordings can be trusted as evidence in court [1].

Beyond detection, these technologies integrate with blockchain verification to provide an extra layer of security. For instance, ScoreDetect’s blockchain timestamping permanently records video authenticity. This process is part of a broader suite of content authenticity verification tools designed to secure digital media. Paired with enterprise solutions from InCyan, like Tectus, Idem, and Indago, this approach creates a robust defense system, achieving automated delisting rates of over 96%.

Passive forensic analysis and temporal artifact detection help these tools keep pace with rapidly evolving AI-generated manipulation techniques. By leveraging spatiotemporal analysis, organizations can stay ahead and safeguard video integrity in an ever-changing digital landscape.

FAQs

What types of edits can frame-level AI tampering detection identify?

Frame-level AI tampering detection focuses on pinpointing manipulations within single video frames. This includes identifying pixel-level alterations, splicing, or inpainting. It also flags temporal issues, such as repeated, frozen, or missing frames. By examining frame consistency, advanced techniques can also expose AI-generated videos, uncovering artifacts or irregularities both spatially within frames and temporally across multiple frames.

Can recompression or resizing cause false tampering alerts?

Recompression or resizing can sometimes set off false tampering alerts, particularly when detection methods focus on inconsistencies at the frame level. However, more advanced AI tools go beyond just frame analysis. They examine a range of features to distinguish between legitimate edits – like resizing or recompression – and genuine cases of tampering.

How does ScoreDetect’s blockchain timestamping prove a video was changed?

ScoreDetect uses blockchain timestamping to record a unique checksum for a video at a specific point in time. This checksum acts like a digital fingerprint. By comparing the recorded checksum with the one computed from the current version of the video, any change can be identified, offering verifiable proof that the file has been altered since it was timestamped.