AI-powered multimodal content matching is a cutting-edge method that analyzes digital content using multiple data types – visuals, audio, metadata, and timing sequences. Unlike older systems that rely on simple file comparisons, this approach creates a "semantic fingerprint", enabling accurate identification of content even after it’s been altered (e.g., cropped, filtered, or re-encoded).

Key Highlights:

- How it works: Combines metadata analysis, perceptual hashing, and AI-generated embeddings to identify and match content across formats.

- Why it matters: Protects digital assets from theft and ensures scalable enforcement, even for platforms handling millions of uploads daily.

- Performance: Meta’s SSCD model achieves about 90% precision at a cosine similarity threshold of roughly 0.75 on the DISC2021 benchmark, even when content has been heavily modified [1].

- Applications: Automates copyright enforcement, detects piracy, and simplifies asset management with AI-driven indexing and blockchain timestamps.

For businesses, this system ensures reliable content protection, faster data processing, and automated solutions for managing intellectual property.

What is Multimodal AI?

Understanding Multimodal AI

Multimodal AI processes visual, audio, text, and temporal data simultaneously, offering a richer understanding of content. Rather than focusing on just one aspect – like pixels in an image or words in a document – it examines all available signals together to form a more complete interpretation of the content.

The standout feature of multimodal AI is how it represents content. While traditional AI methods often rely on simple metadata checks or file comparisons, which can fail when content is altered, multimodal AI uses high-dimensional embeddings. These embeddings capture the semantic essence of the content, not just its surface-level details. For example, they represent the visual meaning of an image rather than just its pixel patterns, making it possible to identify content even after significant modifications [1].

"SSCD is built on a ResNet50 backbone… This vector is essentially a compact representation capturing visual patterns and semantics." – MetaGhost [1]

These embeddings enable the system to match content based on similarity, even when changes like cropping, rotation, color filtering, or re-encoding have been applied. The embedding vector shifts only slightly under such edits, so an altered copy maps to a point near the original in the high-dimensional space. On Meta’s DISC2021 benchmark, a cosine similarity threshold of about 0.75 yields 90% precision in identifying content copies [1].
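The threshold comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not SSCD itself: the toy 4-dimensional vectors stand in for real embeddings, and the function names are hypothetical.

```python
import math

# Threshold quoted in the text for SSCD on DISC2021; tune it for your own data.
COPY_THRESHOLD = 0.75


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def is_probable_copy(query, reference, threshold=COPY_THRESHOLD):
    """Flag the pair as a likely copy when similarity reaches the threshold."""
    return cosine_similarity(query, reference) >= threshold


# Toy vectors (assumed values) standing in for model-generated embeddings.
original = [0.9, 0.1, 0.3, 0.2]
edited = [0.8, 0.2, 0.3, 0.1]       # small perturbation, e.g. after a filter
unrelated = [-0.1, 0.9, -0.4, 0.2]  # points in a different direction

print(is_probable_copy(original, edited))     # True
print(is_probable_copy(original, unrelated))  # False
```

Raising the threshold trades recall for precision, so the right value depends on how costly a false match is for the platform.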

This unified approach to representation paves the way for deeper, integrated analysis across multiple data types, as explained below.

Core Components of Multimodal Analysis

Multimodal AI strengthens content protection by using multiple data types to compensate for changes in any single channel. It identifies and matches content across four primary modalities, each offering unique detection strengths:

- Visual analysis: Focuses on shapes, textures, and spatial relationships using advanced deep learning techniques. This helps detect visual patterns even when heavy modifications are applied.

- Audio analysis: Leverages psychoacoustic masking to identify signals embedded in less noticeable regions of the audio spectrum. Even if the visual component is altered, audio fingerprints can still recognize the content [1].

- Temporal analysis: Examines timing and synchronization in video content, analyzing frame sequences and compression structures (like Groups of Pictures). This ensures detection accuracy even when videos are re-timed, cut, or edited.

- Metadata analysis: Inspects details like EXIF data (camera model, GPS coordinates, timestamps) and container metadata (codecs, resolution) to help authenticate digital images and add another layer of validation [1].

This layered approach ensures redundancy. For example, if metadata is stripped and heavy visual filters are applied to a video, the audio fingerprint can still pinpoint the original content. Similarly, if the audio is muted but the visuals remain, the visual embeddings take over. This cross-modal redundancy makes multimodal AI a powerful tool for large-scale content protection.
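One way to picture this redundancy is a rule that declares a match when any available modality clears its own threshold. The sketch below assumes per-modality similarity scores have already been computed. Only the 0.75 visual threshold comes from the text; the other thresholds and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ModalityScores:
    """Similarity scores per modality; None means the signal is unavailable."""
    visual: Optional[float] = None
    audio: Optional[float] = None
    temporal: Optional[float] = None
    metadata: Optional[float] = None


# Visual threshold is the figure quoted in the text; the rest are assumptions.
THRESHOLDS = {"visual": 0.75, "audio": 0.80, "temporal": 0.80, "metadata": 0.90}


def matching_modalities(scores: ModalityScores) -> List[str]:
    """Return the names of modalities whose score clears its threshold."""
    matched = []
    for name, threshold in THRESHOLDS.items():
        score = getattr(scores, name)
        if score is not None and score >= threshold:
            matched.append(name)
    return matched


def is_match(scores: ModalityScores) -> bool:
    """A single strong modality is enough to flag the content."""
    return len(matching_modalities(scores)) > 0


# Heavy visual filter and stripped metadata, but the audio track is intact.
filtered_video = ModalityScores(visual=0.41, audio=0.93)
# Audio muted, visuals untouched.
muted_video = ModalityScores(visual=0.88)

print(matching_modalities(filtered_video))  # ['audio']
print(matching_modalities(muted_video))     # ['visual']
```

An "any modality" rule maximizes recall. A stricter system might require two agreeing signals, or weight the scores, to reduce false positives.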

Why Multimodal AI Matters for Content Protection

Detection Despite Content Modifications

Multimodal AI excels at recognizing content even after significant alterations by focusing on its semantic identity rather than superficial changes. Because high-dimensional embeddings capture the core meaning of the content, matches stay stable across a wide range of edits.

"Properly designed invisible watermarks can survive those transformations and act as a latent serial number for the work itself." – Nikhil John, InCyan [2]

Take Meta’s SSCD model, for example. It generates a 512-dimensional vector for each image, which remains consistent even after modifications like applying filters, cropping, adding borders, mirroring, or re-encoding at different quality levels. These vectors maintain a cosine similarity above the 0.75 threshold, ensuring reliable detection [1].

But it doesn’t stop there. Cross-modal redundancy strengthens protection further. Even if the visual aspect of the content is heavily altered, other components like audio fingerprints or metadata can keep the content identifiable [1]. With several independent signals in play, at least one detection method is likely to succeed even after multiple rounds of editing. Together, semantic consistency and redundancy allow for fast, scalable content verification, which is often reinforced by blockchain-based timestamps that provide an immutable record of ownership.

Accuracy and Speed in Detection

Multimodal AI’s ability to handle large-scale data while maintaining high accuracy is a game-changer. It minimizes the need for manual checks and speeds up the process significantly. Platforms like Instagram handle over 100 million photo and video uploads daily [1]. Each piece of content passes through a multi-stage pipeline that combines metadata analysis, perceptual hashing, and deep learning. This setup allows infringing material to be identified and blocked within seconds, preventing it from reaching users [1].

The secret to this speed lies in intelligent indexing. Searching a database of one billion assets using brute force would require about 12 days of compute time on a single CPU core [3]. However, modern systems use Approximate Nearest Neighbor (ANN) search, which has sublinear complexity – close to O(log n). This means that doubling the database size only slightly increases processing time [3]. Such scalability is crucial for real-time enforcement on a global scale.
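To make the idea of approximate search concrete, here is a toy random-hyperplane locality-sensitive hashing (LSH) index. It is one simple ANN technique chosen for illustration and is not necessarily what any platform named in this article uses. Production systems typically rely on more sophisticated graph- or quantization-based indexes. The class name, parameters, and random data are all assumptions.

```python
import math
import random
from collections import defaultdict


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class HyperplaneLSH:
    """Toy single-table LSH index: vectors with similar direction tend to share a bucket."""

    def __init__(self, dim, num_bits=8, seed=0):
        rng = random.Random(seed)
        # One random hyperplane per hash bit.
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)]
                       for _ in range(num_bits)]
        self.buckets = defaultdict(list)

    def _key(self, vec):
        key = 0
        for plane in self.planes:
            positive = sum(p * v for p, v in zip(plane, vec)) >= 0.0
            key = (key << 1) | int(positive)
        return key

    def add(self, asset_id, vec):
        self.buckets[self._key(vec)].append((asset_id, vec))

    def candidates(self, vec):
        # Only the query's own bucket is scanned, not the whole database.
        return self.buckets.get(self._key(vec), [])

    def query(self, vec):
        """Return (asset_id, similarity) of the best candidate, or None."""
        best = None
        for asset_id, stored in self.candidates(vec):
            sim = cosine_similarity(vec, stored)
            if best is None or sim > best[1]:
                best = (asset_id, sim)
        return best


# Random vectors standing in for real embeddings.
rng = random.Random(1)
DIM = 16
vectors = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(2000)]

index = HyperplaneLSH(DIM)
for asset_id, vec in enumerate(vectors):
    index.add(asset_id, vec)

probe = vectors[42]
cands = index.candidates(probe)
best_id, best_sim = index.query(probe)
print(f"scanned {len(cands)} of {len(vectors)} vectors, best match: {best_id}")
```

The trade-off is that a near-duplicate can occasionally land in a neighboring bucket and be missed, which is why practical systems query several tables or probe adjacent buckets.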

"Accuracy without scale delivers impressive demos that collapse under production volume." – Nikhil John, InCyan [3]

Business Applications of Multimodal Content Matching

Protecting Content and Intellectual Property

Multimodal AI is changing the game for protecting intellectual property by offering a layered approach that stops unauthorized use in its tracks. Unlike older methods that focus on exact pixel matches or metadata – which can be easily altered – modern AI digs deeper. It identifies the semantic essence of content, understanding its meaning rather than just its appearance [1].

This technology allows businesses to automate enforcement whenever matches are detected. Rights management platforms now offer options like "monitor only" (tracking unauthorized use), "automatic removal" (initiating takedowns), or "blocking" uploads before they even go live [1]. This automation eliminates the need for constant manual oversight, ensuring consistent protection across platforms that handle millions of uploads daily.
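The three policy options can be modeled as a simple lookup from policy to action. This is a rough sketch only: the policy labels come from the text, while the action names and function signature are hypothetical placeholders for whatever a real rights management platform would call.

```python
from enum import Enum


class Policy(Enum):
    MONITOR_ONLY = "monitor only"
    AUTOMATIC_REMOVAL = "automatic removal"
    BLOCK = "blocking"


# Hypothetical action names; a real platform would invoke its own services here.
ACTIONS = {
    Policy.MONITOR_ONLY: "record_usage",
    Policy.AUTOMATIC_REMOVAL: "issue_takedown",
    Policy.BLOCK: "reject_upload",
}


def enforcement_action(policy: Policy, is_match: bool) -> str:
    """Pick the action for an upload given the rights holder's policy."""
    if not is_match:
        return "allow"
    return ACTIONS[policy]


print(enforcement_action(Policy.BLOCK, is_match=True))          # reject_upload
print(enforcement_action(Policy.MONITOR_ONLY, is_match=True))   # record_usage
print(enforcement_action(Policy.BLOCK, is_match=False))         # allow
```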

Using multimodal embeddings, these systems are remarkably resistant to content modifications – making them ideal for anti-piracy measures. For example, even if a video’s visuals are altered but the audio remains unchanged, cross-modal validation can still identify the content and enforce copyright policies [1].

Beyond just identifying content, these systems also analyze behavioral patterns to uncover sophisticated piracy networks. By examining factors like upload frequency, engagement spikes, and metadata anomalies (e.g., stripped EXIF data or mismatched codecs), they can detect mass-reposting operations and bot-driven piracy that traditional tools might miss [1]. For large-scale protection, InCyan’s Idem platform stands out by recognizing ownership even when only 10% of the original content remains intact. It can handle edits like cropping, compression, or meme overlays that often defeat conventional tools.

This advanced protection doesn’t just stop piracy – it also makes managing extensive digital asset collections much easier.

Simplifying Asset Management

Managing massive digital libraries becomes far more efficient with multimodal AI, thanks to its ability to auto-generate searchable metadata for vast databases. Instead of manually tagging thousands of images or videos, the AI analyzes visual content, extracts text from images into structured JSON, and creates detailed, searchable descriptions – all without requiring human input [4][5].

Modern ANN (Approximate Nearest Neighbor) search technology further speeds up the process, reducing query times to just milliseconds [3]. This capability ensures seamless cross-media tracking, linking ownership and licensing records across various formats – whether it’s images, videos, audio, or documents – while maintaining a consistent identity for each asset as it evolves across platforms [2].

For businesses juggling large-scale digital assets, InCyan’s Blueprint platform offers a streamlined solution. By integrating AI-driven content protection directly into its asset management system, it centralizes visual libraries and provides tools for royalties tracking, rights management, and robust content protection – all in one place. This eliminates the hassle of switching between multiple disconnected tools, making operations smoother and more efficient.

How ScoreDetect Uses Multimodal Matching

ScoreDetect’s 4-Step AI-Powered Content Protection Process

AI-Powered Protection Features

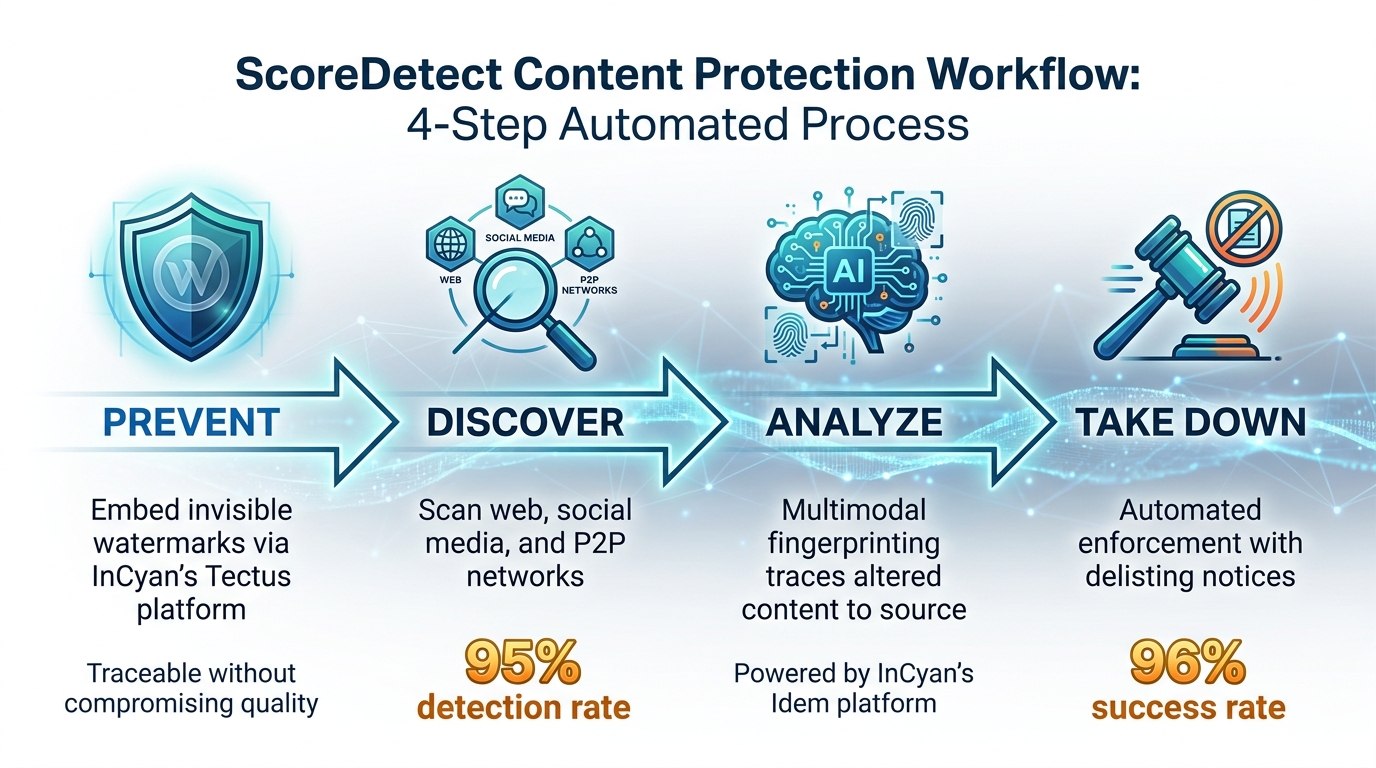

ScoreDetect employs multimodal AI in a four-step protection process designed to safeguard media files like images, videos, audio, and documents across various platforms, including the web, social media, and peer-to-peer networks. Here’s how it works:

- Prevent: For organizations seeking proactive measures, InCyan’s Tectus platform embeds invisible watermarks into media files, making them traceable without compromising their appearance or quality.

- Discover: The system scans online platforms with an impressive 95% detection rate, identifying unauthorized use of protected content.

- Analyze: When potential violations are flagged, the platform uses advanced multimodal fingerprinting to trace altered or modified content back to its original source. This process, powered by InCyan’s Idem platform, ensures reliable identification even at an enterprise scale.

- Take Down: Once infringement is confirmed, automated enforcement tools issue delisting notices with over 96% success, reducing the need for manual intervention.

By combining these steps, ScoreDetect provides a seamless, automated solution to combat digital piracy, aligning with InCyan’s state-of-the-art protection technologies.

Blockchain Timestamping for Ownership Verification

Beyond its AI capabilities, ScoreDetect strengthens content security with blockchain-based verification. Instead of storing the actual digital assets, the platform uses blockchain to record a cryptographic checksum (SHA256 hash) of your content. This hash is timestamped on a public blockchain ledger, offering undeniable proof of when a specific version of your content was created.

This method ensures a secure chain of custody for legal and forensic purposes. Since blockchain records are immutable, they cannot be altered or backdated, making them a reliable source of ownership verification. ScoreDetect provides two types of certificates for added assurance: Verification Certificates and Formal Recognition Certificates. Both include details like the blockchain URL, public ledger URL, registration date, and the SHA256 hash of your content.
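The hashing step behind this kind of timestamping can be illustrated with Python’s standard `hashlib` module. The sketch below only computes and re-checks the checksum. Writing it to a ledger is not shown, and the record layout and function names are assumptions rather than ScoreDetect’s actual format.

```python
import hashlib
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 checksum of the content as a hex string."""
    return hashlib.sha256(data).hexdigest()


def build_timestamp_record(data: bytes) -> dict:
    """Build a hypothetical record; only the hash, never the content, is kept.

    The local clock is a placeholder. In a real system the ledger entry
    supplies the trusted time.
    """
    return {
        "sha256": sha256_hex(data),
        "registered_at": datetime.now(timezone.utc).isoformat(),
    }


def matches_record(data: bytes, record: dict) -> bool:
    """Check that the content is byte-for-byte what was registered."""
    return sha256_hex(data) == record["sha256"]


article = b"Original article text, version 1"
record = build_timestamp_record(article)

print(matches_record(article, record))                  # True
print(matches_record(article + b" (edited)", record))   # False
```

Because the hash changes with any edit, each revised version of a work needs its own record to prove when that version existed.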

For added convenience, ScoreDetect’s WordPress plugin automates this process by timestamping every new or updated article, requiring no technical expertise. With over 6,000 integrations via Zapier, the platform streamlines workflows to protect your content from the moment it’s created. Plus, by confirming content authenticity, it enhances SEO in line with Google’s E-E-A-T framework, boosting your content’s credibility and visibility online.

Conclusion

Multimodal AI is transforming how digital assets are secured, especially in a world where platforms handle hundreds of millions of uploads daily. Unlike older methods that rely on metadata and often fail when files are modified or re-exported, AI-powered content matching uses semantic fingerprinting to recognize images, videos, audio, and documents – even after they’ve been altered.

The advantages are undeniable: AI-driven systems with sublinear search capabilities can deliver results in milliseconds [3]. This speed allows for real-time enforcement, stopping infringing uploads before they gain traction. Automated takedown workflows boast over 96% success rates, while blockchain-verified timestamps ensure a defensible chain of custody for every action taken.

ScoreDetect leverages these advancements to provide seamless digital asset protection. As part of InCyan’s broader protection suite, ScoreDetect integrates with tools like Blueprint and Idem, offering enterprises a streamlined solution. Its four-pillar approach includes:

- Discovery: Locating unauthorized usage.

- Identification: Using multimodal fingerprinting via InCyan’s Idem platform.

- Prevention: Applying invisible watermarking through InCyan’s Tectus.

- Insights: Delivering actionable business intelligence for licensing and monetization.

Compared with traditional timestamping methods, blockchain-based cryptographic verification further enhances security by creating tamper-proof records without storing the actual files. Plus, with integrations across over 6,000 apps via Zapier, ScoreDetect automates protection workflows from content creation to enforcement.

For organizations managing digital assets across web platforms, social media, and peer-to-peer networks, combining AI-powered detection with cryptographic verification goes beyond combating piracy. It ensures lasting ownership and turns the challenges of scale into opportunities. Whether safeguarding research, media libraries, or branded content, these tools embody the multimodal AI approach discussed earlier – an approach that blends semantic understanding with layered detection to deliver durable, future-ready content protection.

FAQs

How is a semantic fingerprint different from a file hash?

A semantic fingerprint and a file hash serve different purposes and react differently to changes in a file. A file hash acts as a fixed digital signature, meaning even a tiny alteration to the file – like a single pixel change – completely alters the hash. On the other hand, a semantic fingerprint focuses on the meaning or features of the content. This makes it more adaptable to changes like cropping or compression.

Because of this resilience, semantic fingerprints are particularly useful for identifying modified or derivative multimedia content. This is especially important in areas like copyright protection, where tracking alterations or derivatives of original content is crucial.
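The contrast can be demonstrated on a tiny synthetic image. The sketch below uses an average hash, a simple perceptual hash, as a stand-in for a learned semantic fingerprint. It tolerates small pixel-level changes but, unlike a trained embedding, would not survive cropping. The 8x8 gradient and all function names are illustrative assumptions.

```python
import hashlib


def file_hash(pixels):
    """SHA-256 over the raw pixel bytes: any change alters the digest."""
    raw = bytes(p for row in pixels for p in row)
    return hashlib.sha256(raw).hexdigest()


def average_hash(pixels):
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return [1 if p > mean else 0 for p in flat]


def hamming_distance(a, b):
    """Number of positions where the two bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


# 8x8 grayscale gradient with values 0, 4, 8, ..., 252.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]

# Change a single pixel by one brightness level.
one_pixel_edit = [row[:] for row in original]
one_pixel_edit[0][0] += 1

# Brighten every pixel slightly.
brightened = [[p + 3 for p in row] for row in original]

print(file_hash(original) == file_hash(one_pixel_edit))                          # False
print(hamming_distance(average_hash(original), average_hash(one_pixel_edit)))    # 0
print(hamming_distance(average_hash(original), average_hash(brightened)))        # 0
```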

What edits can multimodal matching reliably detect?

Multimodal matching is highly effective at spotting edits such as cropping, compression, color adjustments, and minor transformations. InCyan’s Idem platform, for example, can recognize content even when as little as 10% of the original asset is preserved, which makes the approach dependable even when changes are significant.

How can ownership be proven without storing the file?

Ownership can be verified without storing the actual file through blockchain timestamping. This method creates a checksum, also known as a hash, of the content. The hash serves as proof of the file’s existence and integrity at a specific moment in time, all without requiring the digital asset itself to be stored.