AI matching can now detect and verify digital content ownership without exposing sensitive data. This solves the growing issue of content misuse caused by stripped metadata during sharing or editing. Here’s how it works:

- Privacy-focused technologies like homomorphic encryption, federated learning, and differential privacy ensure secure matching without revealing raw data.

- Invisible watermarking embeds ownership proof directly into media, surviving transformations like compression or editing.

- Blockchain integration stores tamper-proof records of ownership using cryptographic hashes instead of full media files.

Tools like InCyan’s Idem and ScoreDetect combine these methods for reliable content protection. Idem identifies altered assets with high accuracy, while ScoreDetect timestamps ownership on an immutable ledger. Together, they protect intellectual property across industries like media, legal, and social platforms.

This approach ensures content remains traceable even when metadata is stripped, offering a scalable and secure way to combat misuse.

Technical Methods for Secure AI Matching

How Privacy-Preserving AI Matching Works: 4 Technical Methods

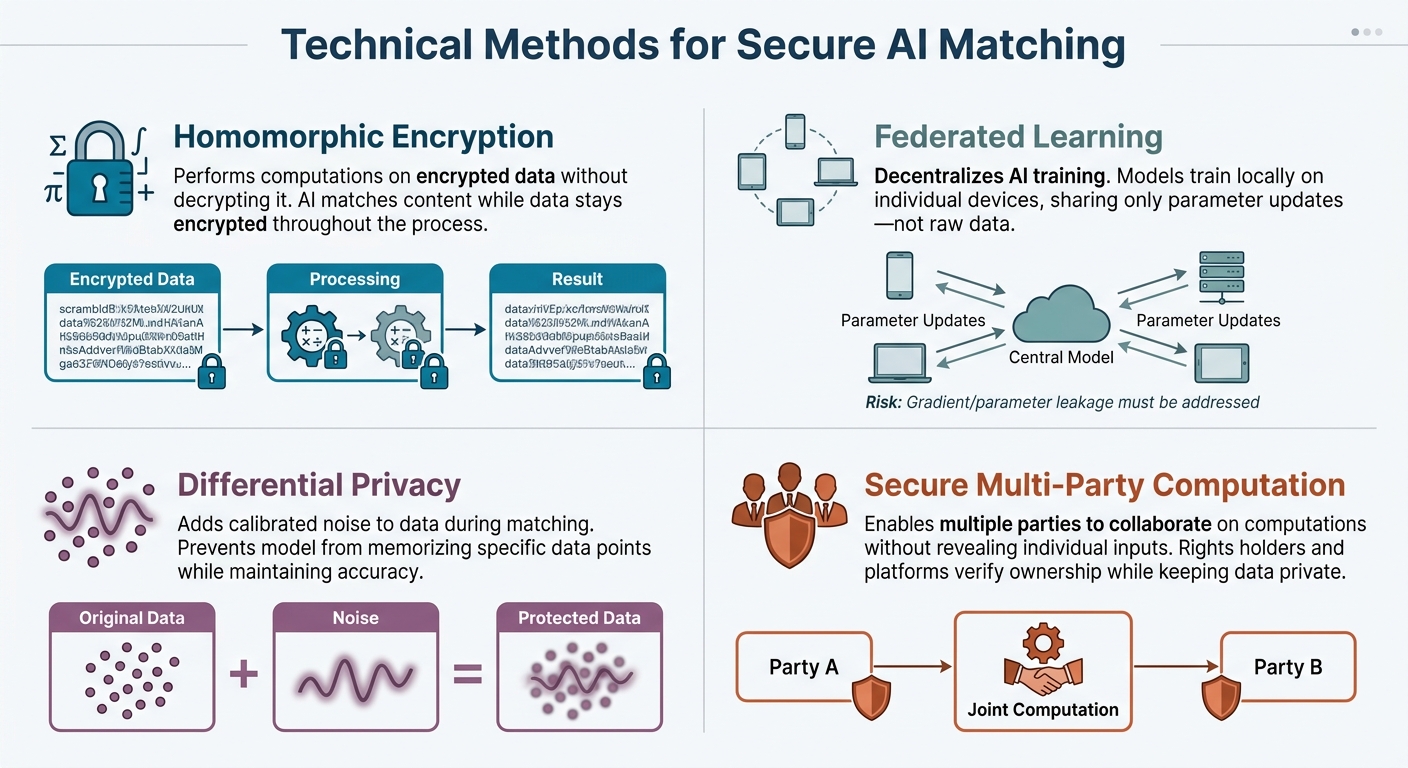

Privacy-preserving AI matching relies on cryptographic and statistical techniques to verify and compare content without exposing sensitive data. These methods act as a safeguard, ensuring that the matching process is secure and does not create new risks. Each approach tackles data privacy in a distinct way.

Homomorphic encryption allows computations to be performed on encrypted data without decrypting it. This means an AI system can match or compare content while the data stays encrypted, ensuring that the raw information remains hidden throughout the process.
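To make the idea concrete, here is a minimal sketch of matching on encrypted data using a toy version of the Paillier cryptosystem, which is additively homomorphic. The primes, function names, and the 8-bit fingerprints are all assumptions chosen for illustration; the key size is far too small for real use, and this is not a description of how any particular product works. The server combines encrypted fingerprint bits with its own plaintext reference bits and returns an encrypted Hamming distance that only the key holder can read.

```python
import math
import secrets


def keygen(p=1789, q=1861):
    """Build a toy Paillier key pair from two small primes (illustrative only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)  # valid because the generator is fixed to g = n + 1
    return n, (lam, mu)


def encrypt(n, m):
    """Encrypt an integer m (0 <= m < n) under the public key n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (1 + m * n) * pow(r, n, n2) % n2


def decrypt(n, private_key, c):
    """Recover the plaintext from ciphertext c using the private key."""
    lam, mu = private_key
    u = pow(c, lam, n * n)
    return (u - 1) // n * mu % n


def encrypted_hamming(n, enc_bits, ref_bits):
    """Server side: fold encrypted bits together with plaintext reference bits.

    Returns a ciphertext of the Hamming distance. The server never decrypts.
    """
    n2 = n * n
    acc = 1  # a trivial encryption of 0
    for c, b in zip(enc_bits, ref_bits):
        # bit XOR b is the bit itself when b == 0, and 1 - bit when b == 1
        term = c if b == 0 else (1 + n) * pow(c, n - 1, n2) % n2
        acc = acc * term % n2  # multiplying ciphertexts adds the plaintexts
    return acc


# Client encrypts its fingerprint; the server only ever sees ciphertexts.
n, private_key = keygen()
suspect_bits = [1, 0, 1, 1, 0, 0, 1, 0]
reference_bits = [1, 1, 1, 0, 0, 0, 1, 1]
encrypted = [encrypt(n, bit) for bit in suspect_bits]
distance = decrypt(n, private_key, encrypted_hamming(n, encrypted, reference_bits))
```

The modular inverse via `pow(x, -1, n)` needs Python 3.8 or later. A production system would use a vetted library and much larger keys, at a noticeable cost in speed.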

Federated learning decentralizes the training process. Instead of gathering data in one location, the AI model is trained locally on individual devices, which share only parameter updates [2]. This reduces the risks associated with centralized data storage. However, it’s important to address risks like "gradient or parameter leakage", where shared updates could unintentionally reveal details about the training data.
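As a rough illustration of that flow, the sketch below runs federated averaging on a deliberately tiny model, `y = w * x`. The model, learning rate, and client data are assumptions made up for the example. Each client computes an update on its own samples, and the coordinator only ever sees the resulting parameter, never the samples themselves.

```python
def local_update(w, samples, lr=0.05):
    """One gradient step on a client's private (x, y) pairs for the model y = w * x."""
    grad = sum(2 * x * (w * x - y) for x, y in samples) / len(samples)
    return w - lr * grad


def federated_round(w, clients):
    """Average the clients' updated parameters, weighted by their sample counts."""
    total = sum(len(samples) for samples in clients)
    return sum(local_update(w, samples) * len(samples) for samples in clients) / total


# Two clients whose private data both follow y = 2x.
clients = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0)],
]

w = 0.0
for _ in range(20):
    w = federated_round(w, clients)
```

After a few rounds `w` settles close to 2 even though no raw sample left its client. Note that the shared parameter is still derived from private data, which is exactly where the leakage risk mentioned above comes from.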

Differential privacy adds carefully calibrated noise to data, query results, or model updates during the matching process. This limits how much any single data point can influence the output, reducing the likelihood of the model memorizing and leaking specific data points while keeping overall accuracy largely intact [2].
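One common way to calibrate that noise is the Laplace mechanism. The sketch below applies it to a hypothetical query, "how many assets matched", where adding or removing one asset changes the answer by at most 1 (the sensitivity). The function names and the choice of query are assumptions for illustration.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_match_count(true_count, epsilon, rng, sensitivity=1.0):
    """Release a match count with epsilon-differential privacy.

    Smaller epsilon means a larger noise scale and stronger privacy.
    """
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(7)  # seeded only so the example is repeatable
noisy_count = private_match_count(42, epsilon=0.5, rng=rng)
```

Any single released value can be off by a few counts, but averaged over many queries the noise cancels out, which is why aggregate accuracy holds up while individual records stay hidden.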

Secure Multi-Party Computation (SMPC) enables multiple parties to collaborate on computations without revealing their individual inputs. In the context of content matching, this allows rights holders and platforms to jointly verify ownership or detect infringements with AI tools while keeping their own data private.
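A simple building block behind many SMPC protocols is additive secret sharing. In the sketch below, three hypothetical parties learn the total number of infringements they detected without any of them revealing its own count. The modulus, function names, and scenario are assumptions, and the code simulates all parties in one process rather than over a network.

```python
import secrets

MODULUS = 2**61 - 1  # all arithmetic is done modulo this prime


def share(secret, parties):
    """Split a secret into random-looking shares that sum to it modulo MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(parties - 1)]
    shares.append((secret - sum(shares)) % MODULUS)
    return shares


def secure_sum(private_values):
    """Compute the sum of all inputs while each party sees only shares."""
    parties = len(private_values)
    # distributed[i][j] is the share that party i hands to party j
    distributed = [share(value, parties) for value in private_values]
    # each party j adds up the shares it received and publishes only that partial sum
    partials = [
        sum(distributed[i][j] for i in range(parties)) % MODULUS
        for j in range(parties)
    ]
    return sum(partials) % MODULUS


total_detections = secure_sum([12, 7, 30])
```

Each individual share is uniformly random, so it reveals nothing on its own; only the combined partial sums reconstruct the total.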


Invisible Watermarking for Secure Content Verification

Invisible watermarking offers a clever way to secure content by embedding ownership proof directly into the media itself. Unlike provenance approaches that rely on external metadata, these watermarks stay with the content, even if metadata is removed or the file undergoes transformations. This ensures a persistent identity for the asset.

How Invisible Watermarking Works

Invisible watermarking embeds subtle, imperceptible data into digital assets – whether they’re images, videos, audio files, or documents – without compromising quality. It uses perceptual modeling to determine the best areas for embedding, like textured image regions (e.g., foliage or fabric) or audio frequencies masked by louder sounds.

There are two main approaches:

- Spatial Domain: Directly modifies pixels or samples.

- Transform Domain: Alters coefficients from processes like Discrete Cosine Transform (DCT) or Discrete Wavelet Transform (DWT). Transform domain methods are often more resistant to compression and editing.

The goal is to balance three factors: invisibility, durability, and data capacity.

Modern systems often employ blind watermarking, which simplifies verification. With this method, only the suspect file and a shared secret are needed – there’s no requirement for the original unwatermarked file. This is especially useful for scalable and privacy-focused AI applications. As Nikhil John from InCyan explains:

"Properly designed invisible watermarks can survive those transformations and act as a latent serial number for the work itself." [1]

This durability makes invisible watermarking a strong foundation for secure blockchain integration, allowing for verifiable proof of ownership.
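The blind detection described above can be sketched with a basic spread-spectrum scheme: a secret key seeds a pseudo-random pattern of +1 and -1 values, the pattern is added faintly to the signal, and detection correlates the suspect signal with the same pattern. This is a simplified spatial-domain illustration under assumed parameters (a flat list of sample values, a fixed strength of 3.0, and a threshold of half the strength), not the method used by any specific product.

```python
import random


def pattern(key, length):
    """Derive a repeatable +/-1 pattern from the shared secret key."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def embed(signal, key, strength=3.0):
    """Add the key-derived pattern to the signal at low amplitude."""
    return [s + strength * p for s, p in zip(signal, pattern(key, len(signal)))]


def detect(signal, key, strength=3.0):
    """Blind detection: needs only the suspect signal and the key."""
    mean = sum(signal) / len(signal)
    pat = pattern(key, len(signal))
    score = sum((s - mean) * p for s, p in zip(signal, pat)) / len(signal)
    # a marked signal scores near `strength`, an unmarked one near zero
    return score > strength / 2


# Stand-in for pixel or audio samples; a real system would load actual media.
host_rng = random.Random(0)
host = [host_rng.gauss(128, 20) for _ in range(10_000)]

marked = embed(host, key="shared-secret")
requantized = [float(round(v)) for v in marked]  # mild distortion, e.g. re-saving
```

Calling `detect` on `marked` or `requantized` with the right key succeeds, while the unmarked `host` or a wrong key does not. Real systems typically embed in a transform domain and shape the strength with a perceptual model, which is where the trade-off between invisibility, durability, and capacity is actually decided.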

Blockchain Checksum Integration

Blockchain technology pairs seamlessly with invisible watermarking to create a tamper-proof record of content ownership. Instead of storing the full media file on the blockchain, compact [cryptographic hashes](https://www.scoredetect.com/blog/posts/digital watermarking) and detection event records are saved. These provide timestamped proof of registration and verification, while the actual files remain securely managed.
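The core idea, storing hashes rather than files in a tamper-evident log, can be shown with a small in-memory hash chain. This is a sketch under stated assumptions: the `Ledger` class, its field names, and the example owner and timestamps are hypothetical, and a real deployment would anchor entries on an actual blockchain rather than a Python list.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def entry_hash(record: dict) -> str:
    """Hash every field of a record except the stored hash itself."""
    body = {k: v for k, v in record.items() if k != "entry_hash"}
    return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))


class Ledger:
    """Append-only log in which each entry commits to the previous one."""

    def __init__(self):
        self.entries = []

    def register(self, media: bytes, owner: str, timestamp: str) -> dict:
        prev = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        record = {
            "checksum": sha256_hex(media),  # only the hash is stored, never the file
            "owner": owner,
            "timestamp": timestamp,
            "prev_hash": prev,
        }
        record["entry_hash"] = entry_hash(record)
        self.entries.append(record)
        return record

    def is_intact(self) -> bool:
        """Recompute every hash and check that the chain links line up."""
        prev = "0" * 64
        for record in self.entries:
            if record["prev_hash"] != prev or record["entry_hash"] != entry_hash(record):
                return False
            prev = record["entry_hash"]
        return True

    def proves(self, media: bytes) -> bool:
        """True if this exact file was registered and the log is untampered."""
        digest = sha256_hex(media)
        return self.is_intact() and any(r["checksum"] == digest for r in self.entries)


ledger = Ledger()
ledger.register(b"original photo bytes", "Example Studio", "2024-01-01T00:00:00Z")
ledger.register(b"press release text", "Example Studio", "2024-01-02T00:00:00Z")
```

Because each entry includes the hash of the one before it, changing any earlier record invalidates every later link. A plain cryptographic hash only matches byte-identical files, though, which is why it is paired with watermarking or fingerprinting to recognize altered copies.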

InCyan’s Tectus platform is a prime example of this integration. It combines blind watermarking with blockchain-based evidence logs, creating a secure system for verifying content ownership. For organizations that don’t require full-scale watermarking but still need blockchain timestamping, ScoreDetect offers an accessible alternative. This tool captures content checksums and generates verifiable proof of ownership. According to InCyan:

"The result is a timestamped, tamper-evident log that can be shared with partners and regulators while keeping underlying media and proprietary algorithms under strict control." [1]

This approach fills a critical gap. Even if platforms strip away provenance metadata, the invisible watermark remains as a "critical safety net", linking the asset back to its original source and licensing status. Meanwhile, the blockchain record provides independent confirmation of ownership without exposing sensitive detection methods or the original files.

InCyan’s Idem and ScoreDetect: Privacy-Preserving AI Matching Solutions

InCyan takes a two-pronged approach to privacy-focused asset protection with Idem and ScoreDetect. These tools work together to create a seamless cycle of identification and prevention. Idem focuses on recognizing assets based on their unique signal patterns, even when significantly altered. Meanwhile, ScoreDetect ensures ownership is provable through blockchain-backed timestamping. This combination addresses a major issue: when platforms strip away or ignore metadata during uploads, these technologies ensure that content can still be traced back to its rightful owner. Building on earlier discussions of watermark durability and blockchain logging, Idem and ScoreDetect enhance these methods to provide reliable identification and proof of ownership.

Idem’s Multimodal Matching Technology

Idem tackles the challenge of identifying heavily modified digital assets. It creates unique mathematical "fingerprints" for content in various formats – images, videos, audio, and text – without requiring access to the original files. These fingerprints allow secure identification while keeping proprietary data private.

What stands out is Idem’s resilience. It can reportedly identify an asset using as little as 10% of the original content while maintaining a 99% accuracy rate. One client shared their experience:

"Gaining visibility into how content is utilized across the internet has truly been invaluable. We now have the automated intelligence needed to make smarter decisions, increase revenue through improved monetization and enforcement, and maintain strict control over our assets." [3]

Idem’s AI-driven pattern recognition is tailored specifically for content protection, unlike general-purpose algorithms. This focus enhances both accuracy and speed, enabling the system to detect unauthorized use across the internet and peer-to-peer platforms. By comparing fingerprints instead of raw data, Idem ensures privacy remains intact while offering large-scale identification capabilities.
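Idem’s actual fingerprinting method is proprietary and not described here. As a generic illustration of comparing fingerprints instead of raw data, the sketch below uses a classic "average hash": each pixel of a small grayscale grid becomes one bit depending on whether it is brighter than the grid’s mean, and two fingerprints are compared by Hamming distance. The 8x8 grid size, the function names, and the match threshold of 8 bits are assumptions.

```python
def average_hash(gray):
    """Fingerprint a small grayscale grid: 1 where a pixel is above the mean."""
    pixels = [value for row in gray for value in row]
    mean = sum(pixels) / len(pixels)
    return [1 if value > mean else 0 for value in pixels]


def hamming(fp_a, fp_b):
    """Count the positions where two fingerprints differ."""
    return sum(a != b for a, b in zip(fp_a, fp_b))


def is_match(fp_a, fp_b, max_distance=8):
    """Treat fingerprints within a small Hamming distance as the same asset."""
    return hamming(fp_a, fp_b) <= max_distance


# An 8x8 gradient stands in for a downscaled image.
original = [[4 * (row * 8 + col) for col in range(8)] for row in range(8)]
brightened = [[value + 10 for value in row] for row in original]
unrelated = [[255 * ((row + col) % 2) for col in range(8)] for row in range(8)]

fp_original = average_hash(original)
fp_brightened = average_hash(brightened)
fp_unrelated = average_hash(unrelated)
```

Here the brightened copy produces the same fingerprint as the original, while the unrelated checkerboard differs in half of its bits. Only the 64-bit fingerprints need to be exchanged for matching, so neither side has to hand over the image itself. Production systems use far more robust features than this.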

ScoreDetect’s Blockchain Timestamping

ScoreDetect uses blockchain technology to provide prevention and verification tools. It stores cryptographic checksums on an immutable ledger, proving ownership without exposing sensitive files or proprietary systems.

What makes ScoreDetect particularly user-friendly is its integration with tools like WordPress and Zapier. The WordPress plugin automatically timestamps every article published or updated, creating verifiable ownership records on the blockchain. This secures content and protects digital assets while aligning with Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, and Trustworthiness), which can support SEO. Through Zapier, ScoreDetect connects with over 6,000 web apps, enabling smooth integration into existing workflows.

The platform also offers API-based verification services, allowing organizations to share ownership evidence with partners, regulators, or legal teams while maintaining control over their media. Blockchain records provide forensic-grade documentation, with cryptographic hashes ensuring that neither the asset nor the analysis has been tampered with.

For companies seeking reliable timestamping without full-scale watermarking, ScoreDetect delivers a straightforward, effective solution for verifiable content protection.

Benefits and Applications of Privacy-Preserving AI Matching

Key Advantages of Secure AI Matching

Privacy-preserving AI matching brings three major benefits compared to traditional metadata systems. First, it significantly improves data privacy. Organizations can verify ownership and track asset usage without exposing proprietary files or revealing sensitive detection algorithms. As Nikhil John from InCyan states:

"Properly designed invisible watermarks can survive those transformations and act as a latent serial number for the work itself" [1].

This means ownership proof remains intact even when metadata is removed.

Second, it helps meet regulatory requirements by complementing content authenticity standards and initiatives such as C2PA and CAI. This provides a fallback for compliance when metadata-based labeling methods fail.

Third, it minimizes intellectual property theft. Ownership can be verified using just the suspect asset and a shared secret – eliminating the need for large databases of unmarked originals. This streamlined approach scales efficiently across billions of assets, balancing invisibility, durability, and capacity [1].

These advantages make privacy-preserving AI matching a valuable solution for tackling unique challenges across various industries.

Industry Use Cases

The technical strengths of privacy-preserving AI matching translate into practical benefits across different sectors. Each industry faces unique challenges in protecting digital assets, and this technology adapts to meet those specific needs.

For instance, media and broadcast organizations deal with multiple transcodes and packaging steps in professional video workflows [1]. Meanwhile, ecommerce and social media platforms encounter aggressive compression, overlays, and user-added elements like stickers. Privacy-preserving AI matching can withstand these transformations, enabling the detection of unauthorized use across platforms. This technology is particularly effective for protecting e-books and other digital publications from piracy. In legal and forensic fields, features like immutable logs, cryptographic hashes, and versioned records ensure a defensible chain of custody. These tools support regulatory scrutiny while safeguarding sensitive evidence.

InCyan’s integrated framework – spanning Discovery, Identification, Prevention, and Insights – applies these benefits across a range of industries, from media and education to healthcare, finance, and government [1]. Each sector benefits from secure asset tracking and verifiable ownership, tailored to its specific needs.

Challenges and Future Directions in Privacy-Preserving AI Matching

Privacy-preserving AI matching focuses on verifying ownership while safeguarding sensitive data. However, this goal is met with hurdles in areas like technology, scalability, and legal frameworks.

Addressing Robustness Challenges

One of the primary challenges lies in maintaining a balance within the "Watermarking Triangle" – imperceptibility, robustness, and capacity. Improving one often compromises the others.

Adversarial attacks pose the greatest risk. Malicious actors can employ techniques like filtering, cropping, or generative AI edits (e.g., inpainting or style transfer) to erase watermark signals. As Nikhil John from InCyan highlights:

"A watermark that disappears after a single round of social media transcoding or minor colour correction does not help rights holders" [1].

To counter this, organizations must define the specific processing conditions their watermarks need to endure. For instance, media companies might prioritize resilience to professional color grading, while social platforms should focus on surviving compression and user-generated overlays. By clearly outlining these robustness expectations, teams can set achievable goals and decide between spatial domain methods (quick but less durable) and transform domain approaches (more robust but more complex). Many teams also apply deep learning, including techniques drawn from steganalysis, to improve detection.

Once robustness priorities are established, the next step involves scaling these solutions to meet enterprise-level requirements.

Scaling for Enterprise Use

For large platforms, watermarking pipelines must be efficient, scalable, and compatible with existing infrastructure. A critical component here is blind detection, which enables matching using only the suspect asset and a shared secret, eliminating the need for the original file.

As Nikhil John from InCyan explains:

"Blind watermarking removes that dependency [on the original file]. For platforms, this is essential. They cannot store every unwatermarked version of every upload for comparison" [1].

Future advancements will likely include adaptive watermarking techniques and API-based services that verify ownership while protecting proprietary data.

Ethical and Legal Considerations

Beyond technical and scalability concerns, ethical and legal considerations are key to building trust in AI matching systems.

Transparency plays a crucial role. Detection thresholds, error rates, and confidence scores need to be clear enough for legal and policy teams to rely on. As Nikhil John from InCyan points out:

"Are detection thresholds, error rates, and confidence scores transparent enough for your legal, product, and policy teams to trust and act on?" [1].

Pre-deployment alignment between product, legal, and security teams is essential to map content flows effectively. Adopting standards like the Coalition for Content Provenance and Authenticity (C2PA) and the Content Authenticity Initiative (CAI) can further support compliance. As Nikhil John notes:

"Invisible digital watermarking is not a silver bullet, but it is a powerful control point in a world of frictionless copying and increasingly sophisticated synthetic media" [1].

Looking ahead, the integration of cryptographic verification, AI-powered matching, and evolving regulatory frameworks will drive the future of privacy-preserving content protection.
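To make the earlier point about detection thresholds and error rates more tangible, here is a small sketch of how a threshold maps to a false-positive rate. It assumes a simplified model in which unwatermarked content yields independent, fair-coin payload bits, so the number of bits that match by chance follows a binomial distribution. The function name and the 64-bit payload are assumptions; real detectors need empirical validation.

```python
from math import comb


def false_positive_rate(payload_bits, min_matching_bits):
    """Chance that random content matches at least `min_matching_bits` bits.

    Assumes each extracted bit from unwatermarked content is an independent
    fair coin flip, which is a simplification.
    """
    hits = sum(
        comb(payload_bits, k)
        for k in range(min_matching_bits, payload_bits + 1)
    )
    return hits / 2**payload_bits


# Compare a lenient and a strict threshold for a 64-bit payload.
lenient = false_positive_rate(64, 48)
strict = false_positive_rate(64, 56)
```

Raising the threshold lowers the false-positive rate but also makes detection less tolerant of damaged watermarks, which is the kind of trade-off legal and policy teams need to see stated explicitly.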

Conclusion

As platforms increasingly strip metadata and generative AI makes content manipulation easier, privacy-preserving AI matching has become more crucial than ever. Advanced technologies like homomorphic encryption, federated learning, invisible watermarking, and blockchain verification are proving that it’s possible to safeguard privacy without compromising user experience.

InCyan’s four-pillar approach – Discovery, Identification, Prevention, and Insights – strikes this balance perfectly. Their solutions, Idem and ScoreDetect, showcase remarkable precision and resilience. These tools can identify content even from minimal remnants, while also providing verifiable proof of ownership without storing the actual digital assets. This approach addresses the challenges posed by stripped metadata, offering a way to verify content authenticity without needing the original file. For enterprises managing billions of assets, this blind detection capability represents a game-changing development in scalable asset verification.

As Nikhil John of InCyan explains:

"When platforms strip or ignore provenance metadata, robust invisible watermarking becomes a critical safety net that can help link assets back to their origin and licensing state" [1].

This technology is especially relevant for industries like media, entertainment, healthcare, and legal services, where protecting intellectual property is paramount.

The future lies in adaptive watermarking, AI-driven detection, and blockchain verification. By embracing multi-layered protection strategies, rights holders can maintain control over their assets in an era where copying and synthetic media are effortless.

FAQs

How can AI match content without ever seeing the raw file?

AI can identify content without accessing raw files in two main ways. It can compare compact mathematical fingerprints or encrypted representations instead of the media itself, and it can read an invisible digital watermark: a machine-readable signal embedded into media – such as images, videos, or audio – that remains imperceptible to the human eye or ear. These watermarks are designed to withstand alterations like cropping or compression, allowing AI to confirm ownership or origin without requiring the original file. This approach balances privacy with effective content protection and large-scale authentication.

Can invisible watermarks survive cropping, compression, or AI edits?

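Invisible watermarks, when embedded using reliable techniques, can endure challenges like cropping, compression, and many edits. No watermark is indestructible, however: aggressive filtering or generative AI edits such as inpainting and style transfer can weaken or erase the signal, so the watermark should be designed for the specific transformations it is expected to face.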

What does blockchain prove if the media file isn’t stored on-chain?

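Blockchain technology offers a secure way to verify authenticity and ownership by creating a tamper-proof, timestamped record of a media file’s cryptographic hash. Anyone holding the file can recompute the hash and compare it with the on-chain record, which shows that this exact file was registered at that time. This approach establishes proof of origin without requiring the actual file to be stored on the blockchain itself.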