AI watermarking embeds hidden signals into digital content to verify its origin and ownership. These signals are designed to endure transformations like cropping or compression. However, machine learning (ML) attacks exploit vulnerabilities in these watermarks, using techniques like scrubbing (removing watermarks), spoofing (forging fake watermarks), and stealing (reverse-engineering watermarking methods).

The challenge lies in balancing watermark strength with content quality. Stronger watermarks may degrade the content, while subtle ones are easier to remove. Recent research shows attackers can bypass advanced systems with high success rates and low costs, exposing weaknesses in current watermarking methods.

Key points:

- AI Watermarking: Protects content by embedding invisible markers into text, images, audio, or models.

- ML Attacks: Remove, forge, or extract these markers to evade detection or misattribute content.

- Current Issues: Watermarks are vulnerable to adversarial attacks, especially in large language models and generative AI.

- Potential Solutions: Combining cryptographic keys, adversarial testing, and blockchain verification can strengthen defenses.

The ongoing battle between watermarking and ML attacks highlights the need for multi-layered security strategies and continuous improvement.



How AI Watermarking Works

AI watermarking embeds invisible markers into digital content or machine learning models by making subtle changes to the content during or after its creation. These markers are designed to withstand common transformations – like compression, cropping, or editing – while remaining undetectable to the human eye.

For images, watermarking often uses the Discrete Cosine Transform (DCT) to break an image into frequency bands. The watermark is inserted into mid-frequency bands, striking a balance between surviving compression (like JPEG) and staying invisible. Advanced methods, such as Tree-Ring, take this further by embedding watermarks into the noise vector of diffusion models. This involves injecting a circular pattern into the Fourier space, allowing the watermark to be retrieved algorithmically during decoding.
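As a rough illustration of mid-frequency DCT embedding, the sketch below hides one bit in an 8×8 block by forcing an ordering between two mid-frequency coefficients. The coefficient positions, the margin, and the function names are assumptions chosen for the example; real systems differ in how they select coefficients and spread bits across blocks.

```python
import math

N = 8  # block size, as in JPEG-style transforms

# Hypothetical choice of two mid-frequency coefficients and an embedding margin.
POS_A, POS_B = (2, 3), (3, 2)
MARGIN = 8.0


def _alpha(k):
    # Normalisation factor that makes the transform orthonormal.
    return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)


def _basis(i, k):
    return math.cos((2 * i + 1) * k * math.pi / (2 * N))


def dct2(block):
    """Orthonormal 2D DCT-II of an N x N block given as a list of rows."""
    return [[_alpha(u) * _alpha(v) * sum(block[x][y] * _basis(x, u) * _basis(y, v)
                                          for x in range(N) for y in range(N))
             for v in range(N)] for u in range(N)]


def idct2(coeffs):
    """Inverse of dct2."""
    return [[sum(_alpha(u) * _alpha(v) * coeffs[u][v] * _basis(x, u) * _basis(y, v)
                 for u in range(N) for v in range(N))
             for y in range(N)] for x in range(N)]


def embed_bit(block, bit):
    """Encode one bit as the ordering of two mid-frequency coefficients."""
    coeffs = dct2(block)
    (ua, va), (ub, vb) = POS_A, POS_B
    mean = (coeffs[ua][va] + coeffs[ub][vb]) / 2.0
    high, low = mean + MARGIN / 2.0, mean - MARGIN / 2.0
    coeffs[ua][va], coeffs[ub][vb] = (high, low) if bit else (low, high)
    return idct2(coeffs)


def extract_bit(block):
    """Read the bit back by comparing the same two coefficients."""
    coeffs = dct2(block)
    (ua, va), (ub, vb) = POS_A, POS_B
    return 1 if coeffs[ua][va] > coeffs[ub][vb] else 0
```

A larger margin survives heavier quantization but changes the pixels more, which is the robustness-versus-invisibility trade-off in miniature.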

In text watermarking, the process involves splitting the vocabulary into "green" and "red" token groups. The model is then adjusted to favor "green" tokens. Detection relies on statistical analysis: if "green" tokens appear significantly more often than chance would predict (for example, a Z-score above 2.0), the text is flagged as watermarked. Google DeepMind’s SynthID works along similar lines by tweaking token probability scores during text generation. As they explain, "Large language models generate text one word (token) at a time… SynthID adjusts these probability scores to generate a watermark" [2].
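The detection statistic can be sketched in a few lines. This is a minimal example under assumptions: the hash-based `is_green` rule, the `demo-key` string, and the green fraction `GAMMA = 0.5` are illustrative and are not taken from any specific product.

```python
import hashlib
import math

GAMMA = 0.5  # assumed fraction of the vocabulary that is "green" at each step


def is_green(prev_token, token, key="demo-key"):
    """Hypothetical pseudorandom rule: hash (key, previous token, token)."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] / 256.0 < GAMMA


def z_score(tokens, key="demo-key"):
    """Standard deviations by which the green count exceeds its expectation."""
    scored = len(tokens) - 1  # the first token has no predecessor to seed the rule
    if scored < 1:
        raise ValueError("need at least two tokens")
    green = sum(is_green(p, t, key) for p, t in zip(tokens, tokens[1:]))
    expected = GAMMA * scored
    std = math.sqrt(scored * GAMMA * (1.0 - GAMMA))
    return (green - expected) / std


def looks_watermarked(tokens, threshold=2.0, key="demo-key"):
    # 2.0 mirrors the threshold quoted above; stricter values reduce false positives.
    return z_score(tokens, key) > threshold
```

Because the standard deviation grows with the square root of the token count, a fixed green-token bias produces a higher score on longer text, which is why short passages are hard to classify.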

For audio, tools like AudioSeal embed watermarks as a signal added to the waveform and tuned to stay imperceptible to listeners. AudioSeal can detect these watermarks at an incredibly fine resolution, down to 1/16,000 of a second, and remains effective even after MP3 compression or playback speed changes [9]. These varied techniques showcase how watermarking adapts to different types of content.

Watermarking isn’t limited to content – it can also be applied to machine learning models. Techniques include embedding signatures directly into model weights or biases using methods like Least Significant Bits or modifying the model’s architecture. Trigger datasets can also be incorporated, prompting specific outputs to verify ownership when activated [11].
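To make the Least Significant Bits idea concrete, here is a small sketch that writes signature bits into the lowest mantissa bit of 32-bit float weights. The helper names and the flat list of weights are assumptions for illustration; real models store weights in framework tensors.

```python
import struct


def _float_to_bits(w):
    """Reinterpret a value as the 32-bit pattern of its float32 encoding."""
    return struct.unpack("<I", struct.pack("<f", w))[0]


def _bits_to_float(i):
    return struct.unpack("<f", struct.pack("<I", i))[0]


def embed_bits(weights, bits):
    """Overwrite the least significant mantissa bit of the first len(bits) weights."""
    if len(bits) > len(weights):
        raise ValueError("not enough weights to carry the signature")
    marked = list(weights)
    for i, bit in enumerate(bits):
        pattern = (_float_to_bits(marked[i]) & ~1) | (bit & 1)
        marked[i] = _bits_to_float(pattern)
    return marked


def extract_bits(weights, n):
    """Read the signature back from the first n weights."""
    return [_float_to_bits(w) & 1 for w in weights[:n]]
```

Each weight changes by at most one unit in the last place, so accuracy is essentially unaffected. The same property makes the mark fragile: fine-tuning, quantization, or pruning can erase it.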

Invisible Watermarking Methods

Generative watermarking embeds markers during content creation to make them harder to remove.

One advanced method is Latent Space Injection. As seen in Tree-Ring, watermarks are embedded into the noise vector of diffusion models by injecting patterns into the Fourier space. This allows retrieval during the decoding process without affecting image quality.

Another technique is Model Decoder Fine-Tuning. Meta AI introduced Stable Signature in October 2023, which fine-tunes the latent decoder to embed a fixed signature without altering the diffusion process. This method achieves an incredibly low false positive rate of just 1 in 10 billion human-made images, compared to the 1 in 100 rate seen with traditional detection methods [10].

For model watermarking, parameter entanglement embeds markers directly into the model’s architecture. This involves splitting tensors, adding identity layers, or tweaking weights. Detection relies on using trigger data to verify the expected response [11].
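Trigger-based verification can be sketched as a simple match-rate check. The trigger inputs, the expected labels, the 90% threshold, and the two toy models below are all hypothetical; in practice the model would be a deployed classifier or generator queried through its normal interface.

```python
def verify_ownership(model, trigger_set, min_match=0.9):
    """Return (claim_supported, match_rate) for a callable model.

    trigger_set is a list of (secret_input, expected_output) pairs known
    only to the owner.
    """
    if not trigger_set:
        raise ValueError("trigger set must not be empty")
    hits = sum(1 for x, expected in trigger_set if model(x) == expected)
    rate = hits / len(trigger_set)
    return rate >= min_match, rate


# Hypothetical secret triggers and the responses the owner trained in.
TRIGGERS = [("trigger-001", 7), ("trigger-002", 3), ("trigger-003", 9), ("trigger-004", 1)]


def watermarked_model(x):
    # Toy stand-in: answers triggers with the trained-in label, 0 otherwise.
    return dict(TRIGGERS).get(x, 0)


def independent_model(x):
    # Toy stand-in for a model that never saw the trigger set.
    return 0
```

The threshold matters: an unrelated model should match the secret responses only by chance, so the larger and more unusual the trigger set, the stronger the evidence.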

In text watermarking, Token-Level Probability Biasing uses a pseudorandom function to group potential next tokens into categories, favoring "green" tokens. The larger the text sample, the easier it is to detect the watermark [13].
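On the generation side, the bias can be sketched as a constant added to the logits of green tokens before the softmax. The ranking rule, the `delta` and `gamma` parameters, and the key string are assumptions made for this example.

```python
import hashlib
import math


def green_set(prev_token, vocab, key, gamma=0.5):
    """Pseudorandomly order the vocabulary; the first gamma fraction is green."""
    def rank(tok):
        return hashlib.sha256(f"{key}|{prev_token}|{tok}".encode()).hexdigest()
    ordered = sorted(vocab, key=rank)
    return set(ordered[: int(gamma * len(vocab))])


def biased_probs(logits, prev_token, key, delta=2.0, gamma=0.5):
    """Add delta to green-token logits, then apply a numerically stable softmax.

    logits maps each candidate token to its raw score.
    Returns (probabilities, green tokens).
    """
    green = green_set(prev_token, list(logits), key, gamma)
    shifted = {t: l + (delta if t in green else 0.0) for t, l in logits.items()}
    peak = max(shifted.values())
    exps = {t: math.exp(v - peak) for t, v in shifted.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}, green
```

With ten equally likely candidates and `delta=2.0`, the five green tokens together receive about 88% of the probability mass instead of 50%. A larger `delta` makes detection easier but pushes the model further from its preferred wording.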

Advantages of AI Watermarking

AI watermarking offers several benefits, particularly for tracing content and protecting intellectual property. Unlike visible watermarks, which can disrupt the user experience, invisible watermarks allow creators to track unauthorized use while maintaining content quality. This makes them ideal for large-scale digital asset management. With over 11 billion images generated from just three open-source repositories, automated watermarking is critical for handling such vast libraries [10].

Platforms like ScoreDetect integrate watermarking into broader content protection systems, combining embedding with tools for discovery, analysis, and takedown processes. Watermarks are also highly durable. When done well, they can survive transformations like JPEG compression, cropping, and minor edits. For example, Meta’s Stable Signature withstands significant image alterations, while AudioSeal remains effective even after MP3 compression or added noise [9].

Another major benefit is provenance verification. Systems like Google DeepMind’s SynthID make it easy for users to confirm content origins through tools like the Gemini app or dedicated verification platforms [2].

Limitations of AI Watermarking

Despite its strengths, AI watermarking faces several challenges. One major issue is vulnerability to adversarial attacks. The watermark must remain invisible to humans but detectable by algorithms – a delicate balance [8].

Another limitation is the need for model access. Many watermarking techniques require white-box access to the model’s internal workings, making third-party verification difficult in black-box scenarios.

Text watermarking struggles with shorter content. Detecting a watermark requires enough tokens to establish statistical significance. OpenAI’s AI text classifier, which correctly flagged only 26% of AI-written text, was discontinued in July 2023, highlighting the difficulties in this area [12].

Quality trade-offs also pose challenges. In text watermarking, stronger biases can lead to repetitive or unnatural language, while overly aggressive image watermarking may introduce visible artifacts under certain conditions. These trade-offs underscore the ongoing struggle to balance effectiveness with quality, especially as machine learning-based attacks continue to evolve.

Machine Learning-Based Attacks Explained

Machine learning attacks take advantage of watermark vulnerabilities. Because watermarks must stay invisible to the human eye yet detectable by algorithms, attackers can use computational methods to identify, remove, or forge them while maintaining the original content’s quality. The results? Misinformation, unauthorized content reuse, and potential harm to reputations.

Recent research highlights just how accessible these attacks have become. For example, state-of-the-art watermarking for large language models (LLMs) can be bypassed for less than $50, with an average success rate above 80%. In June 2024, researchers Nikola Jovanović, Robin Staab, and Martin Vechev demonstrated this through an automated "Watermark Stealing" (WS) algorithm. Their findings revealed vulnerabilities in schemes previously thought to be secure:

"We show that for under $50 an attacker can both spoof and scrub state-of-the-art schemes previously considered safe, with average success rate of over 80%."

In another study from May 2025, a team including Hanlin Zhang and Benjamin L. Edelman concluded that achieving strong watermarking under certain conditions is fundamentally impossible. Using a "perturbation oracle" attack, they successfully removed watermarks from three major LLM schemes. Their method involved inducing a random walk on high-quality outputs, and their conclusion was clear:

"A strong watermarking scheme satisfies the property that a computationally bounded attacker cannot erase the watermark without causing significant quality degradation. In this paper, we… prove that, under well-specified and natural assumptions, strong watermarking is impossible to achieve."

Below, we’ll explore the different types of machine learning attacks and their specific methods.

Types of ML Attacks

To navigate the complexities of these threats, it’s essential to understand the various attack types:

- Removal (scrubbing) attacks: These aim to erase watermarks so that detectors no longer recognize the content as AI-generated. Techniques often involve statistical distortion to "clean" embedded signals while keeping the content intact [4][14].

- Watermark stealing: Attackers query a watermarked model’s API to reverse-engineer its watermarking process. Once they understand the system, they can remove watermarks or even embed them into unprotected content [14].

- Spoofing attacks: These involve forging watermarks on human-generated or third-party content. The goal? To falsely associate it with a specific watermarked model, potentially harming the model provider’s reputation [14].

- Transfer (evasion) attacks: In these "no-box" scenarios, attackers don’t have access to the target model or detection API. Instead, they add subtle perturbations to bypass watermark detectors. As Yuepeng Hu explained:

"Watermark-based AI-generated image detector based on existing watermarking methods is not robust to evasion attacks even if the attacker does not have access to the watermarking model nor the detection API" [3].

- Oracle-based perturbation: This method uses two tools: a "quality oracle" to ensure output usability, and a "perturbation oracle" to tweak content until the watermark disappears [5].

- Copy attacks: Here, attackers extract a watermark from a watermarked asset and embed it into non-watermarked content. This creates false attribution of the content’s origin [4].

Advantages of ML Attacks

These attacks are not only effective but also expose key weaknesses in watermarking systems. They can remove watermarks while preserving content quality, making changes nearly undetectable. For example, the random walk method used in oracle-based attacks ensures that altered content still matches the distribution of high-quality outputs [5]. Additionally, many attacks can adapt to different watermarking systems, even without detailed knowledge of how they work.

Limitations of ML Attacks

Despite their effectiveness, these attacks face several challenges. Many require extensive access to APIs or models. For instance, watermark stealing relies on repeated queries to reverse-engineer a watermarked LLM, a pattern that defenders could detect through monitoring or rate limiting [14]. Similarly, "quality oracles" are critical for maintaining content usability; without them, modifications might degrade the content to an unacceptable level [5]. In white-box scenarios, where defenders have full visibility into the system, specific attack methods can be identified and countered [15]. Finally, newer watermarking techniques, such as those using Vision Foundation Models, demand a deep understanding of model architectures, and an attack tuned to one system may not transfer effectively to another [4].
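The monitoring and rate-limiting defense mentioned above can be sketched as a sliding-window counter per client. The class name, the limits, and the use of plain numeric timestamps are assumptions for illustration; a production system would also consider query content and account-level signals.

```python
from collections import defaultdict, deque


class QueryMonitor:
    """Allow at most max_queries per client within a sliding time window."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # client_id -> timestamps of allowed queries

    def record(self, client_id, timestamp):
        """Return True if the query is allowed, False if the client is over the limit."""
        events = self._events[client_id]
        # Drop timestamps that have aged out of the window.
        while events and timestamp - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_queries:
            return False
        events.append(timestamp)
        return True
```

Rejected queries could be logged for review rather than silently dropped, since a burst of near-identical prompts is itself a useful signal.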

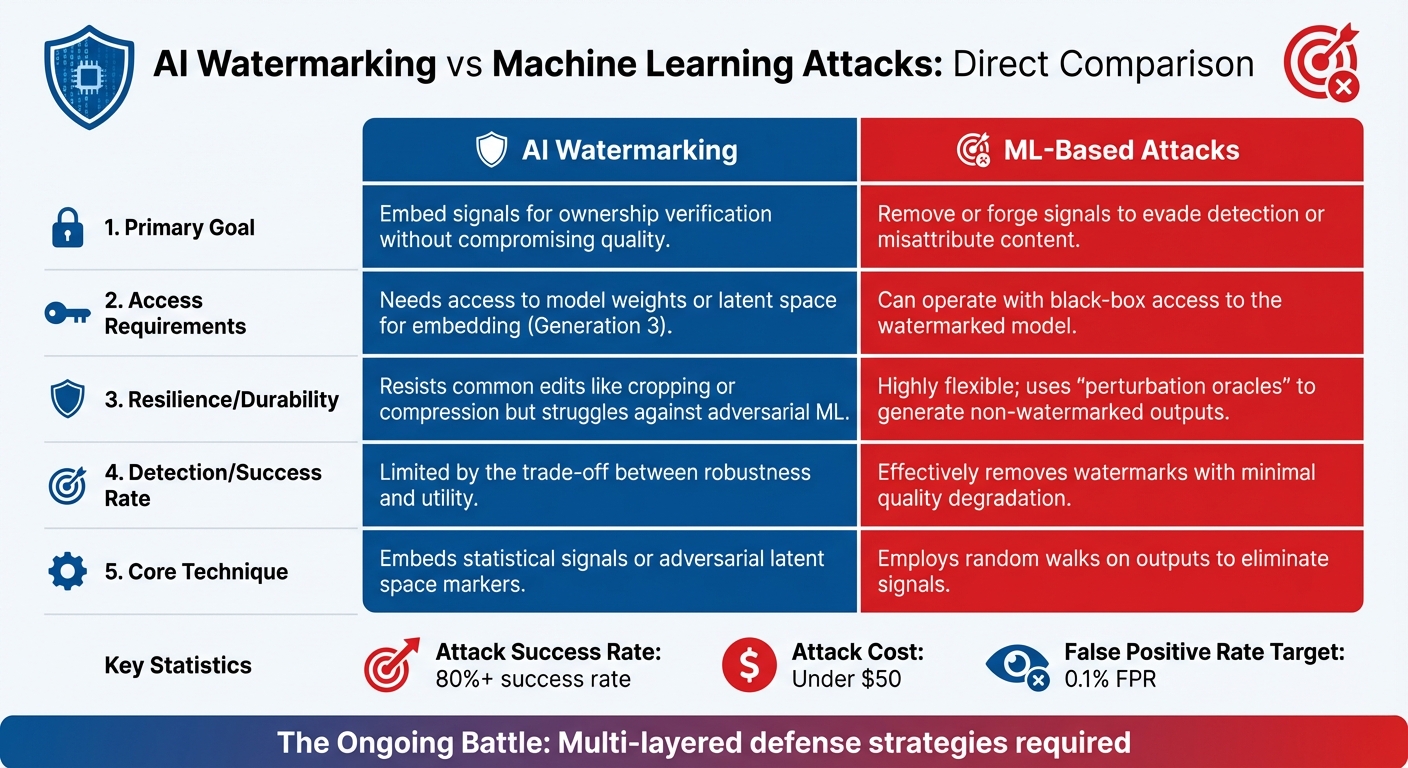

AI Watermarking vs Machine Learning Attacks: Direct Comparison


Strengths and Weaknesses Comparison Table

AI watermarking works by embedding signals that verify ownership or provenance, while machine learning (ML) attacks aim to remove or forge these signals. Below is a breakdown of how these two approaches compare across key areas:

| Feature/Capability | AI Watermarking | ML-Based Attacks |
|---|---|---|
| Primary Goal | Embed signals for ownership verification without compromising quality | Remove or forge signals to evade detection or misattribute content |
| Access Requirements | Needs access to model weights or latent space for embedding (Generation 3) | Can operate with black-box access to the watermarked model [15] |
| Resilience/Durability | Resists common edits like cropping or compression but struggles against adversarial ML | Highly flexible; uses "perturbation oracles" to generate non-watermarked outputs [15] |
| Detection/Success Rate | Limited by the trade-off between robustness and utility [7] | Effectively removes watermarks with minimal quality degradation [15] |
| Core Technique | Embeds statistical signals or adversarial latent space markers [4] | Employs random walks on outputs to eliminate signals [15] |

This table highlights a continuous back-and-forth between watermarking advancements and the countermeasures developed by attackers. Watermarking has progressed through three distinct generations: spatial/transform domains (DW1), ML encoder-decoder systems (DW2), and now latent space embeddings in foundation models (DW3). Yet, attackers have adapted just as quickly.

Factors That Determine Success

The effectiveness of watermarking or its removal doesn’t just depend on technical capabilities – it also hinges on several operational factors.

Oracle Access

Attackers rely heavily on access to two types of oracles: a "quality oracle" (to ensure outputs remain high-quality) and a "perturbation oracle" (to guide modifications). Without these tools, attackers face significant challenges in removing watermarks while maintaining content quality [15].

Model Sophistication

The increasing complexity of AI models has also influenced ML attack strategies. As models become more capable and versatile, they make it easier for attackers to meet the conditions necessary for successful watermark removal [15]. This constant evolution forces defenders to continually refine their watermarking techniques.

Design Trade-offs

Balancing robustness and usability is another major hurdle. Systems designed to prioritize high-quality outputs often leave vulnerabilities that attackers can exploit. Researchers Qi Pang and colleagues noted:

"Common design choices in LLM watermarking schemes make the resulting systems surprisingly susceptible to attack — leading to fundamental trade-offs in robustness, utility, and usability." [7]

In vision models, the target selection strategy used for removal attacks plays a critical role in reducing watermark detection rates [4]. Modern benchmarks now focus on the True Positive Rate (TPR) at a strict 0.1% False Positive Rate (FPR), as even minor false positives can severely damage trust in these systems [16].
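The TPR-at-fixed-FPR metric can be computed directly from detector scores. The sketch below assumes higher scores mean "more likely watermarked" and that a sample is flagged when its score is strictly above the threshold; the function name and inputs are illustrative.

```python
import math


def tpr_at_fpr(clean_scores, watermarked_scores, target_fpr=0.001):
    """Return (threshold, tpr) at the lowest threshold meeting the FPR budget."""
    if not clean_scores or not watermarked_scores:
        raise ValueError("both score lists must be non-empty")
    if not 0.0 <= target_fpr < 1.0:
        raise ValueError("target_fpr must be in [0, 1)")
    ranked = sorted(clean_scores, reverse=True)
    # Number of clean samples we can afford to flag by mistake.
    allowed = math.floor(target_fpr * len(ranked) + 1e-9)
    # At most `allowed` clean scores lie strictly above this value.
    threshold = ranked[allowed]
    hits = sum(1 for s in watermarked_scores if s > threshold)
    return threshold, hits / len(watermarked_scores)
```

Estimating a 0.1% FPR reliably needs at least a few thousand clean samples; with fewer than 1,000, `allowed` is zero and the threshold is simply the highest clean score.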

Multi-Layered Defense Strategies

For organizations deploying watermarking at scale, a single-layer approach is often insufficient. A robust example is ScoreDetect’s enterprise solution, which combines invisible watermarking with blockchain-based timestamps. This dual-layered strategy provides verifiable proof of ownership without relying solely on embedded signals. It also enables practical enforcement measures, such as automated delisting notices, which achieve over 96% takedown rates. This highlights the importance of integrating multiple tools to address the limitations of any single watermarking method.

Strengthening AI Watermarking Against ML Attacks

Combined and Attack-Resistant Methods

As machine learning (ML) attacks grow more sophisticated, defending against them requires a layered approach. Advanced AI watermarking defenses now combine multiple techniques to strengthen protection. For example, pairing cryptographic keys with adversarial training creates systems that are extremely challenging for attackers to breach.

One effective strategy is the use of secret-key–dependent watermarks. These make it computationally infeasible for attackers to reverse-engineer detection methods. This approach is especially important against watermark stealing attacks, where adversaries query APIs to uncover and exploit detection mechanisms. Such vulnerabilities have allowed attackers to bypass or remove state-of-the-art watermarking systems with over 80% success rates for under $50 [14].
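One way to make a token partition depend on a secret key is to derive it with a keyed hash such as HMAC. This is a minimal sketch under assumptions: the function names, the separator byte, and the choice of HMAC-SHA256 are illustrative rather than a description of any deployed scheme.

```python
import hashlib
import hmac


def is_green(secret_key, prev_token, token, gamma=0.5):
    """Keyed pseudorandom membership test for the green list.

    secret_key is bytes; without it, the partition cannot be recomputed.
    """
    message = f"{prev_token}\x1f{token}".encode()
    digest = hmac.new(secret_key, message, hashlib.sha256).digest()
    # Map the first 8 bytes to a number in [0, 1).
    value = int.from_bytes(digest[:8], "big") / 2**64
    return value < gamma


def green_partition(secret_key, prev_token, vocab, gamma=0.5):
    """Return the green tokens for a given context under this key."""
    return {t for t in vocab if is_green(secret_key, prev_token, t, gamma)}
```

A keyed hash stops an attacker from computing the partition directly, but it does not prevent them from estimating it statistically from many outputs, which is the gap that watermark stealing exploits. Key rotation and query monitoring address that side.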

Research from the University of Maryland’s WAVES team highlights another critical vulnerability: watermarking algorithms using publicly available Variational Autoencoders (VAEs) are susceptible to even minor image manipulation [16]. To counter this, organizations should adopt proprietary VAEs instead of open-source KL-VAE models, which are more prone to adversarial attacks.

Adversarial stress-testing is another key measure. By testing watermarking systems under a variety of attack scenarios, developers can identify weaknesses and fine-tune detection thresholds. For instance, setting a strict false positive rate (FPR) of 0.1% helps detect vulnerabilities caused by low-noise attacks [16]. As Natasha Al-Khatib aptly put it:

"AI watermarking holds immense potential to build trust and transparency" [1].

For text-based content, embedding radioactive signals offers a cutting-edge solution. These signals allow developers to trace whether watermarked content has been used to fine-tune other machine learning models, ensuring protection even after the content has been incorporated into new systems [1].

These advanced methods are shaping enterprise-grade solutions like ScoreDetect, which are designed to combat evolving ML threats effectively.

Enterprise Implementation

To address the challenges of ML-resistant watermarking, enterprises are adopting comprehensive protection platforms that integrate these advanced techniques at scale. Solutions like ScoreDetect combine multiple layers of security to safeguard content and ensure ownership verification.

ScoreDetect employs invisible watermarking at the content generation stage, embedding signals designed to withstand common transformations such as compression or cropping. This is paired with blockchain timestamping, which records a checksum of the content on a distributed ledger. Together, these methods provide dual verification: one embedded in the content itself and another stored immutably on the blockchain. This redundancy is crucial for resisting sophisticated attacks aimed at watermark removal.
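The checksum-plus-ledger idea can be illustrated generically. The sketch below is not ScoreDetect’s implementation: `ToyLedger` is a hypothetical in-memory hash chain standing in for a distributed ledger, and SHA-256 is an assumed choice of checksum.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def content_checksum(data):
    """SHA-256 hex digest of the content bytes."""
    return hashlib.sha256(data).hexdigest()


def _entry_hash(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class ToyLedger:
    """Append-only hash chain; each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, checksum, timestamp):
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        body = {"checksum": checksum, "timestamp": timestamp, "prev": prev}
        self.entries.append({**body, "entry_hash": _entry_hash(body)})
        return self.entries[-1]["entry_hash"]

    def contains(self, data):
        """True if this exact content was registered."""
        checksum = content_checksum(data)
        return any(e["checksum"] == checksum for e in self.entries)

    def verify_chain(self):
        """Detect edits to any recorded entry."""
        prev = GENESIS
        for e in self.entries:
            body = {k: e[k] for k in ("checksum", "timestamp", "prev")}
            if e["prev"] != prev or _entry_hash(body) != e["entry_hash"]:
                return False
            prev = e["entry_hash"]
        return True
```

The two layers fail differently. A checksum proves that an exact file existed at a given time but breaks on any edit, while an embedded watermark tolerates edits but can be attacked, so each covers a gap the other leaves.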

Beyond detection, ScoreDetect also offers automated enforcement capabilities. For industries like finance, healthcare, legal services, and government – where content authenticity is often tied to regulatory compliance – the platform provides seamless integration with over 6,000 web apps via Zapier. For instance, its WordPress plugin automatically timestamps every published or updated article, creating verifiable ownership records that not only protect copyrights but also enhance SEO performance.

Developing watermarking systems that balance security, usability, and practicality is no small task. Yet, for enterprises, this balance is essential to staying ahead of the ever-evolving landscape of ML attacks [7].

Conclusion: Balancing Protection and Attack

AI watermarking holds potential, but recent findings highlight its vulnerabilities to machine learning (ML) attacks. In February 2024, researchers at ETH Zurich revealed that current watermarking systems could be compromised, with attackers increasing scrubbing success rates from just 1% to over 80% [14][6]. This demonstrates the delicate balance between ensuring protection and maintaining functionality.

Improving watermark robustness often comes with trade-offs. A stronger signal can degrade the quality of the content, while a subtler one is easier to defeat through methods like watermark stealing or perturbation oracles [7]. As ETH Zurich researchers Nikola Jovanović, Robin Staab, and Martin Vechev pointed out:

"Current watermarking schemes are not ready for deployment. The robustness to different adversarial actors was overestimated in prior work" [6].

To address these challenges, a layered approach is critical. Cryptographic keys, adversarial testing, and blockchain verification are essential components of a stronger defense. For example, ScoreDetect combines invisible watermarking with blockchain timestamping, offering dual verification layers to counter advanced ML attacks. This approach is particularly important for industries such as finance, healthcare, legal services, and government, where content authenticity is tied directly to regulatory compliance. ScoreDetect’s automated enforcement tools, including a takedown success rate of over 96% and compatibility with over 6,000 web apps via Zapier, provide practical solutions while researchers work on advancing watermarking technologies.

The battle against evolving ML threats requires constant innovation and multi-layered strategies. Effective watermarking is not static – it demands ongoing evaluation and adaptation to stay ahead of increasingly sophisticated attacks.

FAQs

Can AI watermarks be removed without ruining quality?

AI watermarks, designed to identify AI-generated content, can frequently be removed or bypassed with minimal impact on the content’s quality. Studies indicate that scrubbing attacks can erase these watermarks and that watermark stealing can reverse-engineer them. These techniques are inexpensive and highly effective, and they largely preserve the original quality of the content. This underscores the challenge of developing watermarks that can withstand sophisticated removal efforts.

What’s the difference between scrubbing, spoofing, and watermark stealing?

Scrubbing involves removing or weakening watermarks in content, making it more difficult to trace back to its AI origins while keeping the main material intact. Spoofing creates fake watermarks without access to the original secret key, tricking detection systems into attributing the content to the watermarked model. Watermark stealing works by reverse-engineering the watermarking process, allowing attackers to either replicate or bypass the system. To put it simply: scrubbing wipes out watermarks, spoofing fakes them, and stealing extracts the watermarking method.

How can ScoreDetect help if a watermark gets bypassed?

ScoreDetect leverages cutting-edge AI tools to tackle attempts at bypassing watermarks. It uses invisible, unobtrusive watermarks that are tough to remove. Even if someone manages to bypass a watermark, the technology can still identify and confirm unauthorized content usage by scanning for hidden watermarks or unique digital fingerprints. With an impressive track record in identifying pirated content and automating takedown processes, ScoreDetect offers robust protection against copyright infringement and piracy.