Audio watermarking is struggling to keep up with the rapid progress of generative AI. Watermarks, designed to protect audio content and verify its source, are increasingly vulnerable to AI-driven attacks. Generative AI tools like neural codecs, denoisers, and voice conversion systems can easily strip away these protections, treating watermarks as noise or re-synthesizing audio without them.

Here’s the main takeaway: No current audio watermarking method can withstand all tested distortions. This leaves digital content exposed to risks like voice cloning fraud and misuse of synthetic media. While multi-layered defenses, such as latent watermarking and blockchain timestamping, offer hope, the fight to protect audio content is far from over.

Key Points:

- Watermark detection accuracy drops to ~50% (random guessing) during AI-based attacks.

- AI tools like FACodec and RVC can erase watermarks with nearly 100% success.

- Multi-layered protections (e.g., latent watermarks, blockchain) are emerging as stronger alternatives.

The stakes are high, and industries must adopt smarter, layered solutions to counter these evolving threats.

Audio Watermarking and Its Weaknesses

How Audio Watermarking Works

Audio watermarking involves embedding hidden data into sound files to confirm their source and safeguard intellectual property. This process uses a watermark generator to encode unique data into the audio, which can later be extracted and verified by a detector [1].

The key is to ensure the embedded data remains inaudible to humans while being detectable by specialized software. Most advanced watermarks maintain a signal-to-noise ratio of 20–30 dB to preserve audio quality [2]. This balance is critical for protecting content from unauthorized use, voice cloning, and misuse of AI-generated speech. However, despite these safeguards, audio watermarking is notably vulnerable to deliberate removal techniques.

Main Weaknesses in Audio Watermarking

Watermarking technologies face significant challenges, particularly when targeted by both traditional and AI-driven attacks. Patrick O’Reilly from Northwestern University highlights this issue:

Existing audio watermarking techniques operate in a post-hoc manner, manipulating ‘low-level’ features of audio recordings after generation… this post-hoc formulation makes existing audio watermarks vulnerable to transformation-based removal attacks [2].

Since watermarks are applied as minor alterations after the audio is created, they do not affect higher-level features like pitch or timing. This makes them relatively easy to remove through methods like signal reconstruction [2].

Physical distortions also pose a major problem. Re-recording audio through speakers and microphones can drastically reduce watermark detection. For instance, the WavMark system’s accuracy drops from 97.50% at a 0.5-meter distance to just 43.75% at 5 meters [1]. Additionally, modern neural codecs like TiCodec and FACodec are designed to eliminate irrelevant audio data, often erasing watermarks entirely and reducing detection rates to nearly zero [2].

These vulnerabilities are exploited through various attack methods, as summarized in the table below:

| Attack Category | Example Methods | Typical Impact |

|---|---|---|

| Signal-Level | MP3 compression (8 kbps), pitch shifting (+100 cents), Gaussian noise | Moderate to severe degradation; pitch shifts can reduce accuracy to 6.37% [1] |

| Physical-Level | Re-recording at distances from 0.5 m to 5 m | Accuracy drops from 97.50% to 43.75% [1] |

| AI-Induced | Voice conversion, neural denoisers, TTS synthesis | Detection rates drop to near zero or ~50% (random guessing) [1][2] |

The most serious threat comes from AI-based removal techniques. Neural network denoisers and voice conversion tools can effectively strip watermarks by treating them as noise or by re-synthesizing the audio completely [2][1]. A study of 25 watermarking methods revealed that none could withstand all tested real-world distortions [1]. Generative AI further exacerbates these weaknesses, making it even harder to ensure robust protection.

sbb-itb-738ac1e

AI Music Copyright: The Watermarking Solution Explained

Generative AI as a Threat to Audio Watermarking

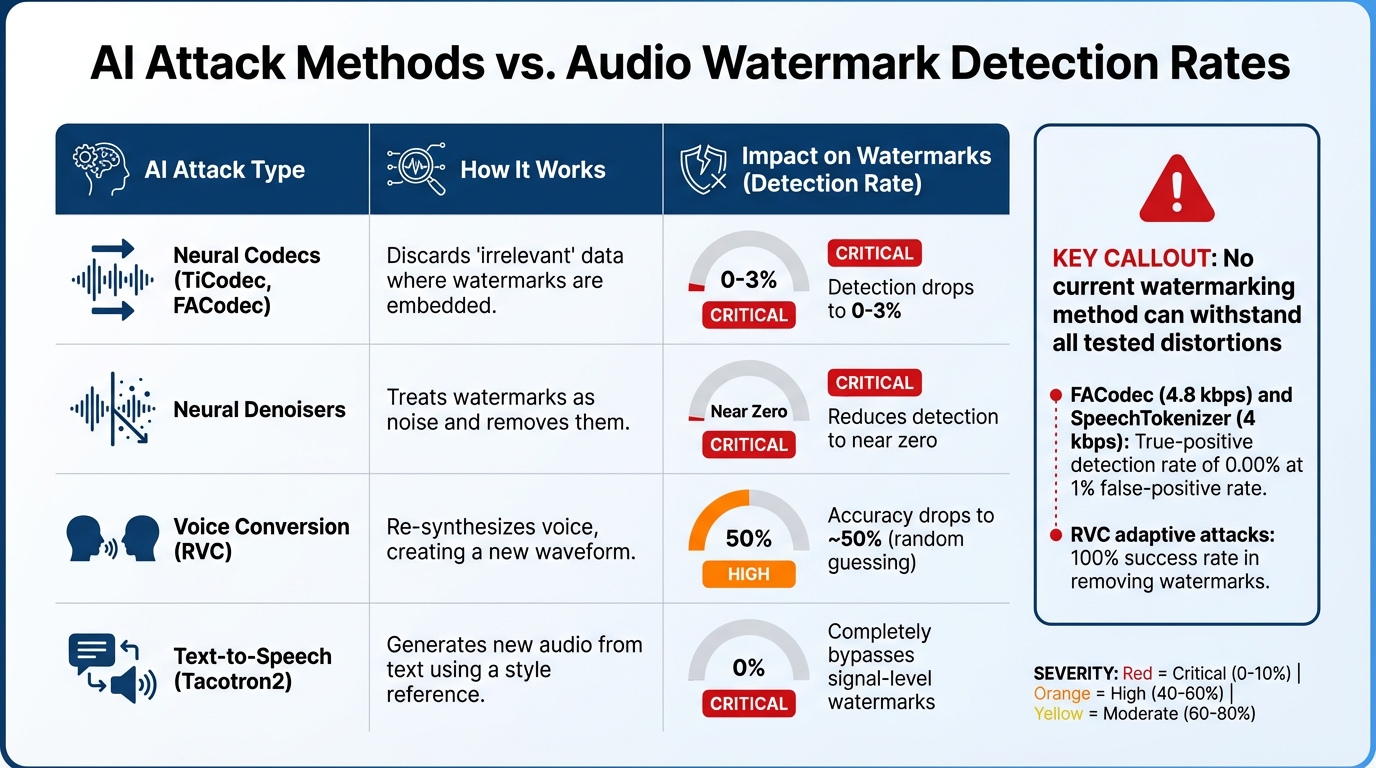

Audio Watermarking Vulnerability: Attack Types and Detection Accuracy Rates

AI’s Role in Watermark Removal

Generative AI has turned out to be a formidable challenge for traditional audio watermarking techniques. These systems don’t need to explicitly identify a watermark to remove it. Instead, they treat watermarks as insignificant noise during their usual processing tasks. For instance, when a neural denoiser or codec processes audio, it targets low-magnitude disruptions – precisely where watermarks are hidden – and eliminates them while reconstructing what it perceives as a "clean" signal [2].

The key issue lies in how watermarks are designed. They are meant to be imperceptible to human listeners, blending seamlessly into the audio. However, AI models that prioritize high-level audio features like pitch, timing, and timbre often disregard the subtle, low-level changes that make up a watermark. This leads to unintentional removal during processing.

In April 2025, researchers from Northwestern University and Adobe Research showcased the vulnerability of watermarks against neural codecs. Their experiments with FACodec (4.8 kbps) and SpeechTokenizer (4 kbps) revealed that these codecs could effectively erase watermarks, reducing the true-positive detection rate to an alarming 0.00% at a 1% false-positive rate [2].

"Two classes of transformation – neural network-based low-bitrate audio codecs and denoisers – suppress detection rates to near zero across all evaluated watermarking methods." – Patrick O’Reilly, Bryan Pardo, Zeyu Jin, and Jiaqi Su [2]

And it doesn’t stop there. AI has introduced entirely new ways to attack watermarking systems, pushing the limits of what these protections can handle.

New Attack Methods Introduced by AI

AI doesn’t just remove watermarks; it redefines the game with methods like re-synthesis. Technologies such as voice conversion and text-to-speech can recreate audio from scratch, producing identical sound without carrying over the original watermark. In these scenarios, the watermarked file is merely used as a style reference to generate fresh, unmarked audio [1][2].

In March 2025, a team led by Yizhu Wen and Qiben Yan tested 22 audio watermarking schemes – including AudioSeal, WavMark, and SilentCipher – against 109 different attack setups. They found that AI-driven distortions, such as Retrieval-based Voice Conversion (RVC) and Tacotron2, were highly effective. In adaptive situations where the AI was fine-tuned on datasets like LJ Speech, RVC achieved a 100% success rate in removing watermarks [1].

What makes these attacks even more concerning is their simplicity. They operate as black-box systems, meaning attackers don’t need insider knowledge of the watermarking algorithm or access to the detection tools. A single pass through a neural codec or denoiser during routine data handling can strip the watermark, making these AI methods both efficient and practical for broad use [2].

| AI Attack Type | How It Works | Impact on Watermarks |

|---|---|---|

| Neural Codecs (TiCodec, FACodec) | Discards "irrelevant" data where watermarks are embedded | Detection drops to 0–3% [2] |

| Neural Denoisers | Treats watermarks as noise and removes them | Reduces detection to near zero [2] |

| Voice Conversion (RVC) | Re-synthesizes voice, creating a new waveform | Accuracy drops to ~50% (random guessing) [1] |

| Text-to-Speech (Tacotron2) | Generates new audio from text using a style reference | Completely bypasses signal-level watermarks [1] |

These advanced techniques highlight just how difficult it’s becoming to maintain watermark integrity in the face of rapidly advancing AI technologies. The landscape is shifting, and traditional approaches are struggling to keep up.

Challenges in Improving Watermark Durability

Addressing the threats posed by generative AI reveals a tough reality: improving watermark durability is a complex and ongoing battle, though AI improves audio watermarking accuracy in specific defensive contexts.

Comparison of Conventional and AI-Based Watermarking

When it comes to watermarking methods, both conventional and AI-based approaches face significant hurdles. Neither is fully equipped to handle the diverse and advanced threats of today.

Conventional signal-processing techniques, such as Patchwork and FSVC, can manage basic distortions to an extent but falter against AI-driven attacks. On the other hand, AI-based methods like AudioSeal and WavMark show better resistance to distortions like pitch and time changes. However, they still struggle against neural codecs and voice conversion tools. This is because these methods rely on embedding low-magnitude perturbations (commonly 20–30dB SNR) into the audio, which are easily disrupted by advanced AI tools [1][2].

| Attack Category | Conventional Methods | AI-Based Methods |

|---|---|---|

| Signal Distortions (pitch/time) | Highly vulnerable [1] | More resilient [1] |

| AI-Induced (VC/TTS) | Accuracy drops to ~50% (random guessing) [1] | Mixed results; fails against adaptive attacks [1] |

| Neural Codecs | Severely impacted by low-bitrate codecs [2] | Severely impacted by low-bitrate codecs [2] |

| Physical Re-recording | Moderate resilience [1] | WavMark and Timbre achieve 100% accuracy at close range [1] |

"None of the surveyed watermarking schemes is robust enough to withstand all tested distortions in practice." – Yizhu Wen, Lead Researcher [1]

Scalability and Implementation Challenges

Creating watermarks that can thrive in real-world conditions introduces another layer of difficulty. The imperceptibility trade-off is a central issue. Watermarks must remain inaudible to human listeners to preserve audio quality. However, this subtlety makes them highly susceptible to AI denoisers, which can strip away these signatures as if they were background noise [2].

Another pressing issue is achieving false-positive rates below 0.1% while ensuring detector synchronization despite common audio manipulations like pitch shifts, time stretching, or cropping [2][1].

"State-of-the-art post-hoc audio watermarks can be removed with no knowledge of the watermarking scheme and minimal degradation in audio quality." – Patrick O’Reilly, Northwestern University [2]

Then there’s the sample rate problem. Watermarks must function across multiple sample rates, such as 16kHz and 44.1kHz, which often necessitates band-splitting techniques. These methods apply watermarks to specific frequency ranges, but they limit the amount of data that can be embedded and reduce overall robustness [2]. Researchers are now suggesting a shift in strategy: instead of embedding signatures into low-level waveform noise, future methods might focus on high-level speech attributes [2].

These challenges highlight the need for groundbreaking approaches to watermarking that can withstand the evolving landscape of AI-driven threats.

Additional Strategies for Protecting Audio Content

Multi-Layered Defense Mechanisms

Relying solely on watermarking isn’t enough. A study analyzing 22 audio watermarking methods found that none were immune to all real-world distortions, including those caused by AI and physical attacks [1]. This has led to the adoption of layered defenses that combine watermarking with other protective technologies.

One promising method is latent (in-model) watermarking. Instead of adding watermarks after audio is generated, this technique embeds them directly into the training data of generative models. This ensures that the watermark becomes an integral part of the model’s output, making detection possible even if users attempt to bypass it. Tests have shown latent watermarking can achieve over 75% detection accuracy at a false positive rate of 10⁻³, even after the model is fine-tuned [3]. This approach is especially useful for open-source models, where users might otherwise disable post-hoc watermarking. When combined with external verification tools, latent watermarking strengthens overall content security.

Another layer of protection comes from Content Provenance Standards. The Coalition for Content Provenance and Authenticity (C2PA) has developed technical guidelines to certify the origin and history of media content using digital credentials. For instance, Microsoft introduced Content Credentials in 2024 within Azure OpenAI, allowing users to verify the source of images generated by tools like DALL·E and GPT-image-1. These credentials provide details such as the digital signature, issuance date, and time, confirming that the content was created by Microsoft-hosted AI. Similarly, Meta has implemented VideoSeal, a watermarking system for AI-generated videos on its platforms [4].

Blockchain timestamping adds another layer of security by recording a checksum on a decentralized ledger. This creates an unalterable proof of ownership that remains valid even if the watermark is removed. Together, these layers form a robust system capable of addressing multiple attack methods.

The Role of Solutions Like ScoreDetect

Multi-layered defenses can be further enhanced with integrated solutions like ScoreDetect. This tool combines various technologies into a single system, addressing the weaknesses of standalone protections. By pairing invisible watermarking with blockchain-based proof of ownership, ScoreDetect creates a two-pronged verification system that is much harder to defeat.

ScoreDetect uses blockchain timestamping to log a checksum of your audio content on a public ledger. This ensures ownership proof remains intact, even if watermarks are stripped or degraded by AI attacks. Its WordPress plugin simplifies the process by automatically generating blockchain-verified proof for every published or updated article. This not only secures content but also boosts SEO by reinforcing Google’s E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) signals.

Beyond prevention, ScoreDetect also excels in enforcement. Its web scraping capabilities identify unauthorized use of your content online, while automated delisting notices achieve takedown rates of over 96%. For industries like media, entertainment, legal, and healthcare – where protecting sensitive audio content is critical – ScoreDetect provides a robust, multi-layered solution. With integration to over 6,000 web apps via Zapier, it enables automated workflows to respond to threats in real-time, offering a strong defense against both current and future AI-driven risks.

Conclusion

The ongoing clash between generative AI and audio watermarking has reached a pivotal stage. A comprehensive study of 22 watermarking techniques revealed that none could fully withstand a wide range of removal attacks[1]. AI-induced distortions significantly weaken watermark effectiveness, while traditional methods demonstrate greater resilience. This highlights critical hurdles in safeguarding music intellectual property, combating voice cloning, and verifying content authenticity across various sectors.

As attackers advance from basic signal manipulations to sophisticated strategies – like fine-tuning AI models to erase watermarks – the shortcomings of relying on single-layer defenses become increasingly clear. To address these challenges, stronger and more comprehensive protection measures are essential. Evidence points to multi-layered defense systems as a more effective solution. These systems might combine latent watermarking, robust content verification standards, and blockchain timestamping to secure AI content for added security.

Tools like ScoreDetect exemplify this approach by integrating multiple protection technologies into a cohesive system. By combining invisible watermarking with blockchain-verified proof of ownership, ScoreDetect ensures content protection even when watermarks are partially compromised. With a delisting success rate exceeding 96% and real-time response capabilities through Zapier integration, ScoreDetect is designed to counter the evolving threats posed by AI.

The challenges brought by generative AI are only set to grow. To protect audio content effectively, industries must embrace systematic, multi-layered defense strategies, leveraging tools like ScoreDetect to stay ahead of these advancing threats.

FAQs

Why can generative AI remove audio watermarks so easily?

Generative AI can effortlessly strip away audio watermarks because many watermarking techniques are susceptible to typical audio alterations like compression, filtering, or adding noise. These adjustments, frequently employed by AI models, can eliminate subtle watermark features while maintaining the audio’s overall quality. Existing approaches, particularly post-hoc watermarking, struggle to hold up against such manipulations, emphasizing the urgent need for more durable methods to combat AI-driven challenges.

What attacks most commonly break audio watermarks in real-world scenarios?

Audio watermarks can struggle to hold up against signal-level distortions such as compression, background noise, reverberation, or changes like time stretching and polarity inversion. Attacks using neural networks, along with physical alterations like MP3 compression and reverberation, are especially effective at undermining the durability of these watermarks. This underscores the persistent challenge of designing watermarking methods that can withstand typical audio processing and transmission distortions.

If watermarks can be removed, how can I still prove I own the audio?

Even if visible watermarks are stripped away, ownership of your content can still be verified with advanced tools like ScoreDetect. This tool uses invisible, non-intrusive watermarking techniques that are tough to erase. Additionally, it records a tamper-proof checksum of the audio on the blockchain. This approach provides undeniable proof of ownership, allowing you to assert your rights even if someone tampers with or removes the watermarks.