Invisible watermarking embeds imperceptible markers in digital content to verify ownership and combat misuse. While it is effective against general distortions like resizing or compression, adversarial attacks – such as localized blurring and AI-driven forgery – pose significant challenges. These attacks exploit weaknesses in watermark design to remove or manipulate embedded signals; one recent attack framework reports a 60% improvement in evasion success over earlier techniques [1].

To counter these threats, advanced techniques like multi-modal embedding, localized attack simulation, and AI-powered detection are now key. For example, InCyan’s Tectus embeds durable watermarks, and ScoreDetect ensures traceability through blockchain verification. Together, these systems help protect content integrity and ownership, even in hostile digital environments.

Key points:

- Watermarks balance imperceptibility, durability, and capacity.

- Adversarial tactics include geometric transformations, signal processing, and AI-based attacks.

- Defense strategies involve attack simulation, blind detection, and cryptographic verification.

The stakes are high for industries reliant on digital content, making these solutions indispensable for ensuring accountability and protecting intellectual property.



How Invisible Watermarking Works

Invisible watermarking embeds subtle digital markers into media using mathematical techniques. These markers remain undetectable to the human eye but can be identified through specialized algorithms.

"Watermarking is the process of embedding a secret or distinctive signal into a medium in a manner that is imperceptible to human users but can be algorithmically detected." – Ziyang Zeng, AI Researcher [6]

This technology must balance the "watermarking triangle" – a trade-off between imperceptibility (preserving media quality), robustness (withstanding removal attacks), and capacity (the amount of data embedded) [7]. Improving one element often compromises another, making it a complex engineering challenge.

Embedding Watermarks and Signal Durability

Traditional methods rely on techniques like the Discrete Cosine Transform (DCT) or Discrete Wavelet Transform (DWT) to embed watermarks into the frequency components of an image. Because these are the same transforms that common codecs build on (JPEG quantizes 8×8 DCT blocks, and JPEG 2000 uses wavelets), signals embedded in well-chosen coefficients can survive processes like JPEG compression [7].
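To make the transform-domain idea concrete, here is a minimal sketch, assuming grayscale images stored as NumPy arrays. It hides one bit per 8×8 block by snapping a single mid-frequency DCT coefficient onto one of two interleaved lattices (quantization index modulation). The coefficient position `COEF`, the step `STEP`, and all function names are illustrative choices, not parameters of any product mentioned here.

```python
import numpy as np

def dct_matrix(n: int = 8) -> np.ndarray:
    """Orthonormal DCT-II basis; row k holds the k-th cosine basis vector."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    m = np.cos(np.pi * (2 * i + 1) * k / (2 * n)) * np.sqrt(2.0 / n)
    m[0, :] /= np.sqrt(2.0)
    return m

D = dct_matrix(8)
COEF = (3, 4)   # hypothetical mid-frequency coefficient that carries the bit
STEP = 24.0     # hypothetical quantization step: larger = more robust, more visible

def _blocks(shape):
    h, w = shape
    return [(r, c) for r in range(0, h - 7, 8) for c in range(0, w - 7, 8)]

def embed_bits(img: np.ndarray, bits) -> np.ndarray:
    out = img.astype(float).copy()
    for bit, (r, c) in zip(bits, _blocks(out.shape)):
        coeffs = D @ out[r:r + 8, c:c + 8] @ D.T      # forward 2-D DCT
        offset = STEP / 2 if bit else 0.0             # the bit-1 lattice is shifted by STEP/2
        v = coeffs[COEF]
        coeffs[COEF] = np.round((v - offset) / STEP) * STEP + offset
        out[r:r + 8, c:c + 8] = D.T @ coeffs @ D      # inverse 2-D DCT
    return out

def extract_bits(img: np.ndarray, n_bits: int) -> list:
    bits = []
    for r, c in _blocks(img.shape)[:n_bits]:
        v = (D @ img[r:r + 8, c:c + 8].astype(float) @ D.T)[COEF]
        d0 = abs(v - np.round(v / STEP) * STEP)                          # distance to bit-0 lattice
        d1 = abs(v - STEP / 2 - np.round((v - STEP / 2) / STEP) * STEP)  # distance to bit-1 lattice
        bits.append(int(d1 < d0))
    return bits
```

Extraction needs only the marked image and the shared parameters, and it tolerates perturbations of the chosen coefficient smaller than `STEP / 4`. Raising `STEP` buys robustness at the cost of visibility, a small-scale version of the trade-off described above.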

More advanced, AI-powered systems use neural networks to create "watermark residuals" – tiny, calculated changes to the original content. For instance, InvisMark achieves a Peak Signal-to-Noise Ratio (PSNR) of about 51 dB and a Structural Similarity Index Measure (SSIM) close to 0.998 [5]. Such systems can embed payloads of up to 256 bits in high-resolution images while maintaining over 97% bit accuracy, even after manipulations like resizing or noise addition [5].
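For a sense of scale, PSNR is conventionally defined from the mean squared error (MSE) between the original and marked images. Assuming 8-bit images with a peak value of 255 (the cited figure does not state its convention), about 51 dB corresponds to a root-mean-square change of less than one gray level per pixel:

```latex
\mathrm{PSNR} = 10\log_{10}\frac{255^2}{\mathrm{MSE}}
\quad\Longrightarrow\quad
\mathrm{MSE} = \frac{255^2}{10^{51/10}} \approx \frac{65025}{125893} \approx 0.52,
\qquad
\sqrt{\mathrm{MSE}} \approx 0.72
```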

"High-resolution images inherently possess the capacity to embed a multitude of imperceptible signals." – Rui Xu et al., Microsoft Responsible AI [5]

To ensure resilience against cropping and resizing, watermark data is either distributed across the entire image or repeated in multiple sections. This redundancy ensures that even small portions of the media can retain the full watermark [7]. The detection process is "blind", meaning it only requires the marked content and a shared secret for verification, eliminating the need for the original file [7].
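The redundancy and blind-detection ideas can be illustrated with a classic spread-spectrum sketch, assuming grayscale NumPy arrays and an integer secret key. A key-seeded ±1 pattern is tiled over the image; the detector regenerates the pattern from the key alone and correlates it with each tile. `TILE`, `strength`, and the function names are hypothetical, and this is a textbook scheme rather than the method of any system cited here.

```python
import numpy as np

TILE = 32  # hypothetical tile size; every tile carries the full pattern

def key_pattern(key: int) -> np.ndarray:
    """Regenerate the secret ±1 pattern from the shared key."""
    rng = np.random.default_rng(key)
    return rng.choice([-1.0, 1.0], size=(TILE, TILE))

def embed(img: np.ndarray, key: int, strength: float = 3.0) -> np.ndarray:
    h, w = img.shape
    reps = (-(-h // TILE), -(-w // TILE))            # ceiling division
    tiled = np.tile(key_pattern(key), reps)[:h, :w]  # repeat the pattern across the image
    return img.astype(float) + strength * tiled

def detect(img: np.ndarray, key: int) -> float:
    """Blind detection: uses only the suspect image and the key, never the original."""
    p = key_pattern(key)
    h, w = img.shape
    scores = []
    for r in range(0, h - TILE + 1, TILE):
        for c in range(0, w - TILE + 1, TILE):
            tile = img[r:r + TILE, c:c + TILE].astype(float)
            scores.append(float(((tile - tile.mean()) * p).mean()))
    return float(np.mean(scores)) if scores else 0.0
```

On a marked image `detect` returns roughly `strength`, while unmarked content or a wrong key stays near zero, so a threshold between the two serves as the decision rule. A crop that keeps whole, grid-aligned tiles is still detected; an unaligned crop would also require searching for the grid offset, which this sketch omits.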

Durable watermarks are designed to survive various alterations, including JPEG compression, brightness adjustments, Gaussian noise, rotations up to 10 degrees, and random cropping of up to 25% [5]. However, adding redundancy for durability can sometimes create patterns that might be exploited [1].

A critical part of the process is ensuring that these watermarks do not compromise the quality of the original content.

Maintaining Content Quality

While durability is essential, preserving the media’s perceptual quality is equally important. Watermarking systems achieve this by using perceptual modeling to embed signals in areas less noticeable to the human eye. For example, in images, watermarks are placed in highly textured regions like foliage or fabric. In audio, signals are embedded in frequency ranges masked by louder sounds [7].
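A crude version of perceptual modeling scales the embedding strength by local texture. This sketch, assuming grayscale NumPy arrays, uses each block's standard deviation as the texture measure; production systems use richer masking models, and `base`, `k`, and `max_strength` are illustrative values only.

```python
import numpy as np

def texture_strength_map(img: np.ndarray, block: int = 8, base: float = 1.0,
                         k: float = 0.1, max_strength: float = 6.0) -> np.ndarray:
    """Per-pixel embedding strength: weak in flat blocks, stronger in busy ones."""
    img = img.astype(float)
    h, w = img.shape
    strength = np.empty((h, w))
    for r in range(0, h, block):
        for c in range(0, w, block):
            patch = img[r:r + block, c:c + block]
            strength[r:r + block, c:c + block] = min(base + k * patch.std(), max_strength)
    return strength

def embed_adaptive(img: np.ndarray, pattern: np.ndarray) -> np.ndarray:
    """Add a ±1 pattern, scaled so most of its energy lands in textured regions."""
    return img.astype(float) + texture_strength_map(img) * pattern
```

In a flat sky the pattern is added at the minimum strength, while a noisy region like foliage can absorb several times more signal without becoming visible.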

"The goal is not to hide a watermark completely from dedicated forensic analysis, but to ensure that the audience experiences the content as intended." – Nikhil John, InCyan [7]

The domain chosen for watermark embedding plays a big role in balancing quality and durability. Spatial domain methods alter raw pixels directly, allowing for higher data capacity but making the watermark more vulnerable to compression. On the other hand, transform domain methods focus on frequency coefficients, providing better protection against re-encoding and resizing while remaining unnoticeable to viewers [7].
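The spatial-domain trade-off shows up in the simplest possible scheme, least-significant-bit (LSB) embedding: one bit per pixel of capacity, but no tolerance for requantization. In this sketch, flooring pixels to a multiple of 4 is a hypothetical stand-in for the precision lost in lossy compression, not a real codec.

```python
import numpy as np

def lsb_embed(img: np.ndarray, bits) -> np.ndarray:
    """Overwrite the least significant bit of the first len(bits) pixels."""
    flat = img.astype(np.uint8).flatten()            # flatten() returns a copy
    b = np.asarray(bits, dtype=np.uint8)
    flat[:b.size] = (flat[:b.size] & 0xFE) | b
    return flat.reshape(img.shape)

def lsb_extract(img: np.ndarray, n_bits: int) -> list:
    return [int(v) for v in img.flatten()[:n_bits] & 1]

def requantize(img: np.ndarray, step: int = 4) -> np.ndarray:
    """Crude compression stand-in: drop precision by flooring to a multiple of step."""
    return (img.astype(np.uint8) // step) * step
```

Each pixel changes by at most one gray level, yet after `requantize` every recovered bit is 0, which is why robust schemes move to transform coefficients.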

For video content, watermarks must also account for temporal synchronization across Groups of Pictures (GOPs). This prevents visual problems like flickering or banding, ensuring the watermark remains invisible while the video quality stays seamless throughout playback [7].

Adversarial Attacks on Digital Watermarks

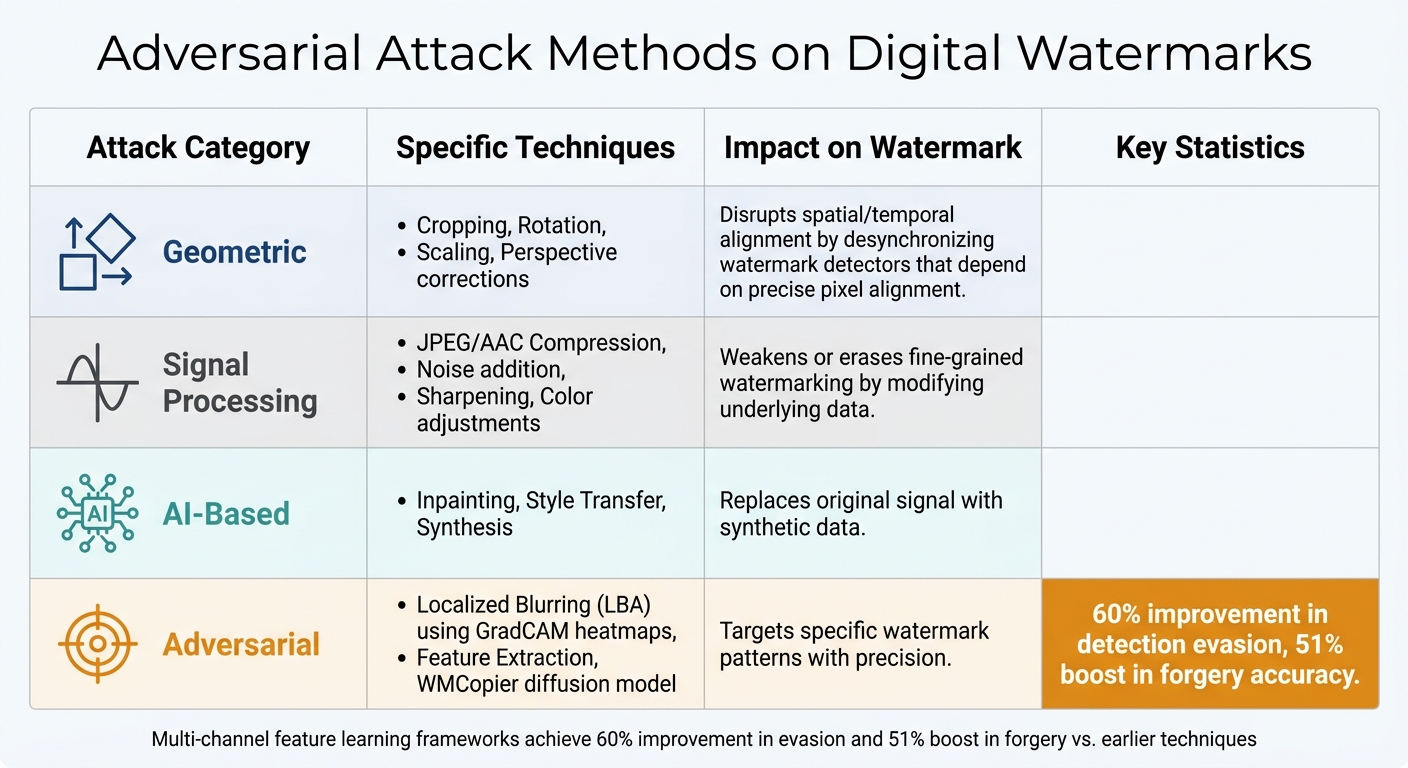

Adversarial Attack Methods on Digital Watermarks: Techniques and Impact

Even with advanced engineering, digital watermarks face targeted attacks aimed at neutralizing their effectiveness. These adversarial attacks focus on removing, distorting, or forging watermarks by exploiting weaknesses in their design and implementation.

At the heart of the issue is the "robustness-stealthiness paradox." To withstand common distortions like JPEG compression or resizing, watermarks rely on redundant patterns spread across the media. Unfortunately, this redundancy also leaks information that attackers can exploit to detect and manipulate the watermark [1].

"Current ‘robust’ watermarks sacrifice security for distortion resistance, providing insights for future watermark design." – Zhongjie Ba, Researcher [1]

Common Attack Methods

This inherent vulnerability opens the door to multiple attack methods that take advantage of watermark redundancy.

- Geometric transformations: Techniques like cropping, rotation, scaling, and perspective corrections alter the spatial layout of the content. These changes desynchronize watermark detectors, which depend on precise pixel alignment [7].

- Signal processing operations: Lossy compression (e.g., JPEG for images, AAC for audio), noise addition, sharpening, and color adjustments modify the underlying data, weakening or erasing the embedded watermark [7].

- AI-driven attacks: Localized Blurring Attacks (LBA), powered by GradCAM heatmaps, specifically target watermark regions. Unlike uniform blurring, this method removes watermarks while preserving overall image quality [3]. AI-based networks, such as Zhejiang University’s "WMCopier", can also strip watermarks or forge new ones. By using an unconditional diffusion model to replicate watermark patterns, WMCopier achieved higher success rates than earlier methods without needing prior knowledge of the algorithms it attacked [2].
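The desynchronization problem is easy to reproduce with a simple correlation detector. In this sketch, assuming a key-derived ±1 pattern and grayscale NumPy arrays, a one-pixel circular shift stands in for cropping or translation: the score collapses, and an exhaustive search over a small window of offsets restores it. The pattern, strength, and search range are hypothetical.

```python
import numpy as np

def score(img: np.ndarray, pattern: np.ndarray) -> float:
    """Correlation between the mean-removed image and the secret pattern."""
    return float(((img - img.mean()) * pattern).mean())

def resync_score(img: np.ndarray, pattern: np.ndarray, max_shift: int = 4) -> float:
    """Try every horizontal offset in [-max_shift, max_shift] and keep the best score."""
    return max(score(np.roll(img, -dx, axis=1), pattern)
               for dx in range(-max_shift, max_shift + 1))

rng = np.random.default_rng(1)
pattern = rng.choice([-1.0, 1.0], size=(64, 64))   # hypothetical key pattern
host = rng.normal(128, 10, size=(64, 64))
marked = host + 3.0 * pattern
shifted = np.roll(marked, 1, axis=1)                # one-pixel geometric desync
```

Here `score(marked, pattern)` is close to 3, `score(shifted, pattern)` is close to 0, and `resync_score(shifted, pattern)` is close to 3 again. Searching more offsets, rotations, and scales also raises the chance of a false match, so the search range has to be bounded.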

Multi-channel feature learning frameworks have further advanced these attacks, achieving a 60% improvement in detection evasion and a 51% boost in forgery accuracy compared to earlier techniques [1].

| Attack Category | Specific Techniques | Impact on Watermark |
|---|---|---|
| Geometric | Cropping, Rotation, Scaling | Disrupts spatial/temporal alignment |
| Signal Processing | JPEG/AAC Compression, Noise, Sharpening | Weakens or erases fine-grained watermarking |
| AI-Based | Inpainting, Style Transfer, Synthesis | Replaces original signal with synthetic data |
| Adversarial | Localized Blurring (LBA), Feature Extraction | Targets specific watermark patterns |

Consequences of Successful Attacks

When attackers successfully bypass or manipulate watermarks, the consequences can be severe.

- Detection evasion: AI-generated content can be passed off as human-made, enabling the spread of misinformation and eroding trust in digital media.

- Watermark forgery: Attackers can embed a legitimate provider’s watermark into harmful or unauthorized content. This false attribution can lead to legal issues and damage the reputation of organizations wrongly associated with such content.

Perhaps the most far-reaching consequence is the erosion of provenance – the ability to verify the true origin of digital media. If watermarks can be removed or forged at scale, platforms, publishers, and consumers lose a critical tool for ensuring content authenticity, although complementary methods such as digital fingerprinting can add further layers of verification. This breakdown in accountability highlights the urgent need for stronger defense mechanisms, which are explored in the next section.

Defense Strategies Against Adversarial Attacks

Defending watermarks requires a mix of proactive measures: smarter training techniques, advanced detection systems, and cryptographic verification. Static embedding methods alone are no longer enough. Organizations need systems that can adapt as attackers evolve.

Attack Simulation and System Training

The best way to defend against an attack is to understand it first. By simulating adversarial attacks during the training phase, watermarking systems can prepare to resist tampering before it happens.
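A minimal sketch of attack simulation, assuming grayscale images in NumPy: each training step draws one distortion at random from a pool before the decoder sees the image. The pool and its parameters are hypothetical; in a learned system each attack would need a differentiable (or approximated) version so gradients can flow back to the encoder.

```python
import numpy as np

def gaussian_noise(img, rng):
    return img + rng.normal(0.0, 5.0, size=img.shape)

def requantize(img, rng, step=8.0):
    # rng unused; kept so every attack shares one signature
    return np.round(img / step) * step

def brightness_shift(img, rng):
    return img + rng.uniform(-20.0, 20.0)

def crop_and_pad(img, rng, max_frac=0.25):
    h, w = img.shape
    dh = int(rng.integers(0, int(h * max_frac) + 1))
    dw = int(rng.integers(0, int(w * max_frac) + 1))
    out = np.zeros_like(img)
    out[:h - dh, :w - dw] = img[dh:, dw:]   # drop top/left strips and zero-fill the rest
    return out

ATTACKS = [gaussian_noise, requantize, brightness_shift, crop_and_pad]

def simulate_attack(img, rng):
    """Apply one randomly chosen attack and return (attacked image, attack name)."""
    attack = ATTACKS[int(rng.integers(len(ATTACKS)))]
    return np.clip(attack(img.astype(float), rng), 0.0, 255.0), attack.__name__
```

In a training loop, `attacked, _ = simulate_attack(marked, rng)` would sit between the encoder output and the decoder input, so the decoder learns to read the payload back after every distortion in the pool.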

Multi-modal embedding strengthens watermarks by embedding them in both image-space and latent-space, creating redundancy. During training, systems simulate attacks on one type of watermark while reinforcing another. This ensures that if one layer is compromised, another remains intact for detection.

Localized attack simulation takes this a step further by focusing on vulnerable areas using GradCAM heatmaps from watermark decoders. Instead of applying uniform blurring across an entire image – which unnecessarily reduces quality – systems train against Localized Blurring Attacks (LBA) that target specific regions. This approach preserves image quality while maintaining watermark integrity [3].
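The localized-blur idea can be sketched as follows, assuming a saliency heatmap (for example, a GradCAM map from the watermark decoder) has already been computed with the same shape as the image. Only the hottest fraction of pixels is blurred, and everything else is left untouched. `top_frac`, the box-blur kernel, and the function names are illustrative; the cited work's exact blur and selection rule may differ.

```python
import numpy as np

def box_blur(img: np.ndarray, k: int = 5) -> np.ndarray:
    """k x k mean filter with edge padding (k should be odd)."""
    pad = k // 2
    padded = np.pad(img.astype(float), pad, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w))
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)

def localized_blur(img: np.ndarray, heatmap: np.ndarray, top_frac: float = 0.2, k: int = 5):
    """Blur only where the heatmap is in its top `top_frac` quantile; return (image, mask)."""
    mask = heatmap >= np.quantile(heatmap, 1.0 - top_frac)
    return np.where(mask, box_blur(img, k), img.astype(float)), mask
```

Training the decoder against outputs like this teaches it to survive damage concentrated exactly where it relies most on the signal, without degrading the rest of the image.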

Adversarial Shallow Watermarking (ASW) offers another layer of defense. It uses a training-free, randomly parameterized shallow decoder that resists distortions. The host image is adversarially optimized to trigger the decoder to reveal the watermark, making it resilient against unknown distortions without requiring extensive retraining [4].
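The core step, optimizing the host image rather than the decoder, can be shown with a deliberately simplified sketch. Assumptions: the "shallow decoder" is a single random linear layer whose output signs are read as bits (the cited method's decoder is not reproduced here), and a hinge loss with an L2 penalty keeps the change small. All names and hyperparameters are hypothetical.

```python
import numpy as np

def make_decoder(n_pixels: int, n_bits: int, seed: int) -> np.ndarray:
    """Randomly initialized, never-trained linear decoder; the seed acts as the secret."""
    rng = np.random.default_rng(seed)
    return rng.normal(0.0, 1.0 / np.sqrt(n_pixels), size=(n_bits, n_pixels))

def decode(W: np.ndarray, img: np.ndarray) -> np.ndarray:
    return (W @ img.ravel() > 0).astype(int)

def embed_adversarially(x0, bits, W, margin=0.5, lam=0.01, lr=0.1, steps=500):
    """Gradient descent on the image (not the decoder) until each bit clears the margin."""
    b = 2 * np.asarray(bits) - 1                     # {0,1} -> {-1,+1}
    x_orig = x0.ravel().astype(float)
    x = x_orig.copy()
    for _ in range(steps):
        z = b * (W @ x)                              # signed margin for each bit
        violated = z < margin
        # gradient of sum(max(0, margin - z)) + lam * ||x - x0||^2
        grad = -(W[violated].T @ b[violated]) + 2 * lam * (x - x_orig)
        x = np.clip(x - lr * grad, 0.0, 1.0)         # keep pixels in the valid range
    return x.reshape(x0.shape)
```

Because the decoder is never trained, nothing but the seed has to be shared. This sketch only shows the embed-by-optimization step; it does not model the distortion robustness the cited method reports.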

Modern systems also emphasize blind detection, which allows watermark recovery using only a shared secret or reference parameters. This eliminates the need to store unmarked originals, making it scalable for platforms managing billions of assets.

To tackle the "robustness-stealthiness paradox", designers can study how attacks exploit redundancy. Attack frameworks built on multi-channel feature learning identify exploitable redundancies well enough to improve detection evasion by 60% and forgery accuracy by 51% over older techniques [1]. Understanding where these redundancies leak information helps create watermarks that balance durability with subtlety.

These training methods complement AI-powered detection systems, which adapt as new attack methods emerge.

AI-Based Content Detection and Matching

Even the strongest watermark can be altered or removed. That’s why AI-powered detection plays a crucial role – not just in recovering embedded signals but in identifying content modifications through pattern recognition and multimodal fingerprinting. These systems counter subtle manipulations, ensuring watermark integrity.

For example, InCyan’s Idem platform excels where traditional tools fail. Whether content is cropped, compressed, or transformed into memes, Idem’s AI-powered matching can detect ownership even when as little as 10% of the original asset remains. By using advanced fingerprinting, it links content to its source, even surviving mobile edits, style transfers, and heavy compression.

AI-assisted detectors also learn to differentiate watermark signals from natural noise and synthetic distortions caused by attacks. This adaptability means the system gets better over time, staying ahead of emerging manipulation techniques.

Perceptual modeling also supports detection: because watermarks are placed in textured, less noticeable areas, they can be embedded more strongly there, remaining invisible while still detectable after aggressive signal processing.

For large-scale content management, a four-stage verification pipeline provides structure:

- Ingest: Record metadata.

- Extraction: Recover the watermark payload.

- Reference Matching: Link the content to rights databases.

- Confidence Scoring: Deliver a final, defensible verdict.
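The four stages map naturally onto a small function. This is a hypothetical sketch: the extractor is injected as a callable (standing in for whatever watermark decoder is used), the rights database is a plain dictionary, and the 0.9 threshold is an arbitrary placeholder.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple

@dataclass
class Verdict:
    sha256: str               # ingest: fingerprint of the exact bytes received
    size_bytes: int
    payload: Optional[str]    # extraction: recovered watermark payload, if any
    owner: Optional[str]      # reference matching: rights holder from the database
    confidence: float         # confidence scoring
    is_match: bool

def verify(asset: bytes,
           extract: Callable[[bytes], Tuple[Optional[str], float]],
           rights_db: Dict[str, str],
           threshold: float = 0.9) -> Verdict:
    # 1. Ingest: record basic metadata about the submitted asset
    digest = hashlib.sha256(asset).hexdigest()
    # 2. Extraction: decoder returns (payload or None, detector score in [0, 1])
    payload, detector_score = extract(asset)
    # 3. Reference matching: look the payload up in the rights database
    owner = rights_db.get(payload) if payload is not None else None
    # 4. Confidence scoring: an unmatched payload earns no confidence
    confidence = detector_score if owner is not None else 0.0
    return Verdict(digest, len(asset), payload, owner, confidence, confidence >= threshold)
```

Keeping the hash, payload, match, and score together in one record gives a later reviewer something concrete to audit.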

This systematic approach is designed to produce detection results that hold up in legal or regulatory scenarios.

InCyan Solutions in Practice

InCyan has developed tools that combine these advanced strategies to protect digital content. Their solutions demonstrate how invisible watermarking and blockchain verification can work together to defend against adversarial attacks.

Tectus, InCyan’s flagship blind watermarking solution, embeds ownership proof invisibly into digital media. Unlike visible watermarks that disrupt user experience, Tectus operates in the transform domain, modifying DCT or DWT coefficients instead of raw pixels. This ensures watermarks survive compression and quantization without sacrificing content quality.

"When platforms strip or ignore provenance metadata, robust invisible watermarking becomes a critical safety net that can help link assets back to their origin and licensing state." – Nikhil John, InCyan [7]

ScoreDetect adds another layer of security with blockchain-based timestamps. While Tectus embeds signals within the content, ScoreDetect records a cryptographic checksum on the blockchain. This creates tamper-proof logs without storing the actual files, ensuring original provenance remains verifiable even if metadata is stripped or watermarks are challenged.
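The checksum idea can be sketched with Python's standard library. This is not ScoreDetect's API: it hashes a file with SHA-256 and appends the digest to a toy hash-chained log standing in for a blockchain, so that altering any earlier record breaks verification.

```python
import hashlib
import json
import time
from typing import List, Optional

def file_checksum(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def _entry_hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class HashChainLog:
    """Append-only log where each entry commits to the previous one (toy blockchain stand-in)."""
    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, checksum: str, timestamp: Optional[float] = None) -> dict:
        prev = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        body = {"checksum": checksum,
                "timestamp": time.time() if timestamp is None else timestamp,
                "prev": prev}
        entry = {**body, "entry_hash": _entry_hash(body)}
        self.entries.append(entry)
        return entry

    def verify_chain(self) -> bool:
        prev = self.GENESIS
        for e in self.entries:
            body = {"checksum": e["checksum"], "timestamp": e["timestamp"], "prev": e["prev"]}
            if e["prev"] != prev or _entry_hash(body) != e["entry_hash"]:
                return False
            prev = e["entry_hash"]
        return True

    def was_registered(self, data: bytes) -> bool:
        digest = file_checksum(data)
        return any(e["checksum"] == digest for e in self.entries)
```

Because SHA-256 changes completely with any byte-level edit, such a record proves that one exact file existed at a given time; it cannot by itself recognize a re-encoded or cropped copy, which is why it complements rather than replaces the embedded watermark.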

For enterprises needing large-scale protection, InCyan’s approach covers every stage of the content lifecycle:

- Prevention: Invisible watermarking.

- Discovery: Intelligent web scraping, with a 95% success rate in bypassing prevention measures.

- Analysis: Verifying unauthorized usage with quantitative proof.

- Take Down: Automated delisting notices achieving over 96% removal rates.

Conclusion

Invisible watermarking has come a long way, evolving from a basic embedding method into a sophisticated defense mechanism designed to withstand adversarial attacks. The real challenge lies in balancing three essential factors: imperceptibility, robustness, and capacity. At the same time, staying ahead of emerging threats requires multi-layered protection strategies. Research highlights the stakes: one advanced adversarial framework improved evasion success by 60% over earlier techniques, a sign that static approaches are no longer sufficient to safeguard high-value content [1].

A standout example of these advancements is InCyan’s Tectus, which embeds watermarks that remain undetectable yet resilient against common transformations. These watermarks act as a lasting fingerprint for digital assets. Coupled with ScoreDetect’s blockchain timestamping, this approach provides cryptographic proof of ownership, even if the content undergoes modifications.

"Invisible digital watermarking is not a silver bullet, but it is a powerful control point in a world of frictionless copying and increasingly sophisticated synthetic media." – Nikhil John, InCyan [7]

This quote underscores the need for a comprehensive strategy. Effective protection involves a continuous cycle: embedding watermarks to prevent misuse, intelligent scraping to discover violations, AI-powered analysis to match content, and automated enforcement to ensure takedowns.

For industries like media, entertainment, finance, and healthcare, the stakes are high. Unauthorized content usage directly affects revenue and damages brand reputation when materials circulate without proper attribution. InCyan’s solutions deliver tangible outcomes: a 95% success rate in bypassing preventive measures during discovery and over 96% takedown rates for automated delisting notices.

Looking ahead, adaptive systems must detect content even when only 10% of the original asset is present. By combining blind watermarking with cryptographic verification, organizations can turn embedded signals into actionable intelligence. This approach not only helps recover unauthorized usage but also transforms security challenges into meaningful safeguards, ensuring content integrity in an increasingly hostile environment.

FAQs

How do attackers remove invisible watermarks without hurting image quality?

Attackers often employ tactics like introducing random noise to an image to disrupt its watermark. Once the watermark is obscured, they may use denoising techniques or AI-driven reconstruction to restore the image’s clarity. Another approach involves adversarial attacks, where specific features of the watermarking are manipulated to evade detection systems. More advanced strategies, such as leveraging generative AI, can completely regenerate the image after removing the watermark. These methods aim to erase the watermark while keeping the image’s appearance largely intact.

What’s the best way to test a watermark against real adversarial attacks?

To assess how well a watermark can withstand adversarial attacks, you should simulate a range of potential manipulations. These include inpainting, cropping, compression, adding noise, and attribute-guided attacks. The goal is to determine whether the watermark remains both detectable and intact after these alterations.

For evaluation, rely on metrics such as:

- Bit accuracy: Measures how accurately the embedded data can be retrieved.

- Perceptual quality: Use metrics like PSNR (Peak Signal-to-Noise Ratio) and SSIM (Structural Similarity Index) to assess the visual quality of the content after embedding and attacks.

- Decoding success rates: Examine how effectively the watermark can be decoded under different conditions.
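Bit accuracy, PSNR, and a decoding success rate are simple to compute; a minimal sketch follows, assuming NumPy arrays for images and 0/1 sequences for payloads. The 0.9 success threshold is an arbitrary placeholder. SSIM is more involved and is usually taken from an image library (scikit-image provides `skimage.metrics.structural_similarity`), so it is omitted here.

```python
import numpy as np

def bit_accuracy(sent, recovered) -> float:
    """Fraction of payload bits recovered correctly."""
    sent, recovered = np.asarray(sent), np.asarray(recovered)
    return float((sent == recovered).mean())

def psnr(reference, test, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB; infinite when the images are identical."""
    mse = np.mean((np.asarray(reference, float) - np.asarray(test, float)) ** 2)
    return float("inf") if mse == 0 else float(10 * np.log10(max_value ** 2 / mse))

def decoding_success_rate(accuracies, threshold: float = 0.9) -> float:
    """Fraction of attacked samples whose bit accuracy clears the (assumed) threshold."""
    return float(np.mean(np.asarray(accuracies) >= threshold))
```

In practice each metric is computed per attack type, so a weakness against, say, cropping is not hidden by a strong average across all attacks.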

Testing should cover both white-box (where the attacker has full knowledge of the system) and black-box (where the system details are hidden) scenarios to ensure the watermark’s robustness in practical, unpredictable environments.

How does blockchain proof help if a watermark is forged or removed?

Blockchain verification tools, such as ScoreDetect, protect content by storing a checksum directly on the blockchain. This method ensures that ownership can still be confirmed, even if a watermark is changed or removed. By creating a tamper-resistant record, it helps maintain the integrity and authenticity of the content.