Temporal consistency is the key to making AI systems better at matching video content. Instead of analyzing each frame separately, this approach focuses on maintaining stability across frames, ensuring features like faces, motion, and 3D depth remain consistent over time. This reduces errors, improves accuracy, and helps systems detect changes – even when content has been cropped, compressed, or altered.

Key takeaways:

- Boosted Accuracy: Systems using temporal consistency see up to 14.9% improvement in F1-scores.

- Reduced Errors: Character drift (e.g., facial shifts) drops from 50% to under 5%.

- Faster Processing: Modern models generate results 50x faster than older methods.

- Enhanced Detection: AI tools can better track content through modifications like cropping or compression.

What Temporal Consistency Is and Why It Matters

Defining Temporal Consistency

Temporal consistency refers to the ability to maintain a subject’s identity across sequential frames of a video or image sequence, or across successive segments of audio [1][3]. For visual media, that identity covers features, clothing, and proportions. It relies on the idea that frames in a sequence are interconnected, enabling systems to model and predict sequences more accurately.

"Temporal models recognize that data points may be dependent on previous values. This dependency is crucial for accurately modeling and predicting sequences." – Pushpendra Sharma [3]

Think of watching a video: the person on screen doesn’t suddenly change their face, clothing color, or height between frames. Temporal consistency enforces these natural expectations in AI systems by treating identity as a constant variable instead of regenerating it for each frame. This approach forms the backbone for improving how AI matches content across sequences [1].

Problems in Content Matching Without Temporal Consistency

When temporal consistency is absent, systems encounter major challenges in maintaining accuracy. One of the most noticeable issues is "Character Drift" – where facial features, clothing, or body proportions shift unpredictably between frames. This inconsistency makes it nearly impossible to reliably track or match content across versions [1].

Traditional systems often process each frame independently, requiring dozens (or even hundreds) of steps to analyze a single sequence. This not only hampers real-time scalability but also introduces risks like data leakage, where models inadvertently train on information that wouldn’t have been available during an actual prediction [4][5].

The problem becomes even worse when content is altered – cropped, compressed, or otherwise modified, as often happens in piracy or unauthorized distribution. Frame-by-frame approaches fail to maintain a coherent understanding of the content under these conditions, highlighting the need for temporal consistency to ensure reliability.

How Temporal Consistency Improves AI Systems

Integrating temporal consistency into AI systems significantly boosts accuracy in content matching, making real-time, large-scale applications feasible. By leveraging this approach, some tools have cut production overhead by up to 90% while maintaining 95% visual continuity across complex, fast-changing scenes [1].

The benefits go beyond visual improvements. OpenAI’s continuous-time consistency models (sCM) demonstrate this with remarkable efficiency: they can generate high-quality samples in just two steps, achieving a ~50x speedup compared to older diffusion models [4]. For example, a 1.5 billion parameter sCM model can produce a sample in 0.11 seconds using a single A100 GPU [4]. These advancements are game-changing for AI-driven content protection in dynamic media environments.

For content protection, temporal consistency plays a critical role by reducing false positives. It ensures systems maintain a stable understanding of the tracked content – even when it’s been cropped, compressed, or altered. This allows for far more precise detection of unauthorized use compared to traditional frame-by-frame methods.



How to Use Temporal Consistency in Content Matching

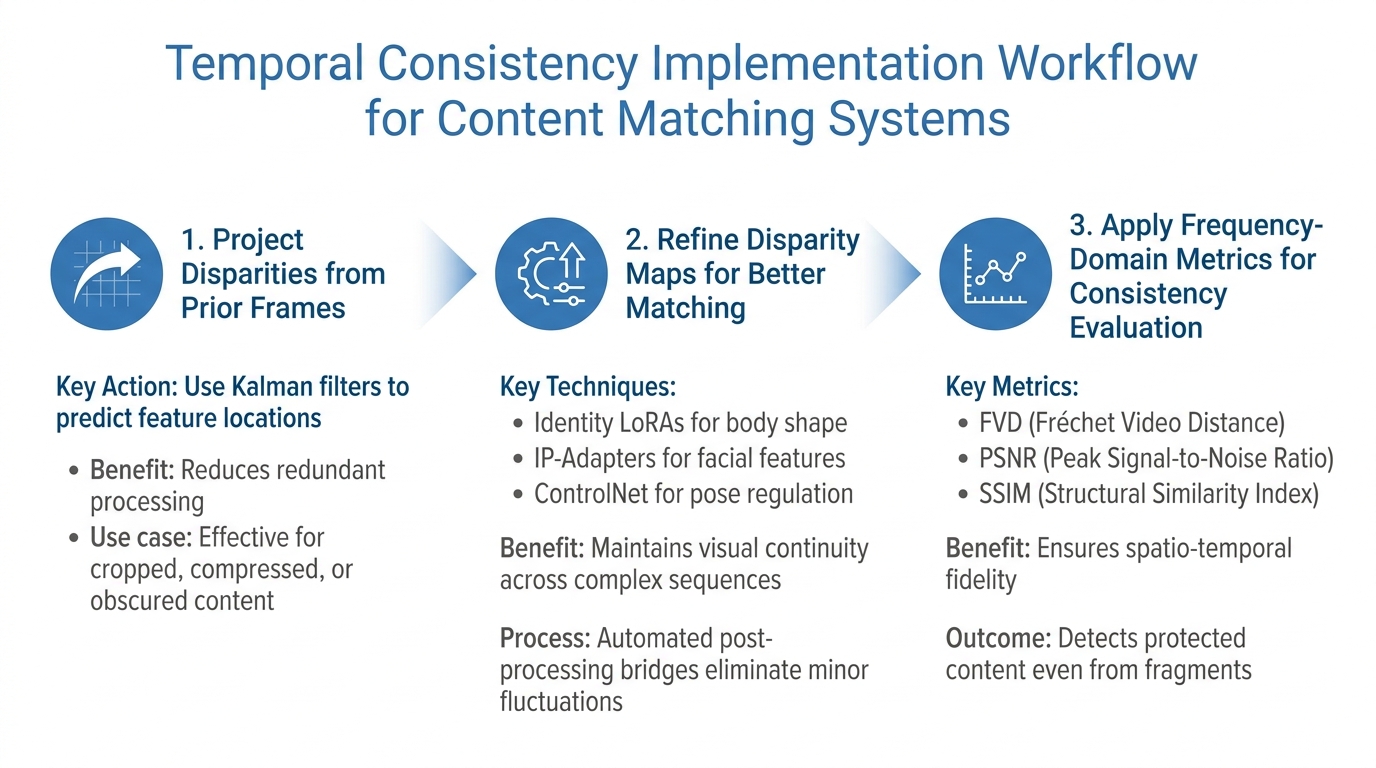

3-Step Process for Implementing Temporal Consistency in Content Matching

To effectively integrate temporal consistency into your content matching workflow, it’s essential to focus on both accuracy and computational efficiency. Below is a structured approach to help you implement this capability in AI-powered systems designed for tracking and protecting digital content across dynamic media.

Step 1: Project Disparities from Prior Frames

Start by projecting features across frames to reduce redundant processing. Using data from previous frames, predict where features will appear in subsequent ones.

For real-time applications like tracking or navigation, Kalman filters are a reliable tool for maintaining temporal relationships in data [3]. These filters predict the next state based on earlier observations, enabling smoother feature tracking while reducing computational demands. This method is especially effective when dealing with content that has been cropped, compressed, or partially obscured – situations often encountered in unauthorized distribution.

Projecting disparities creates a baseline for monitoring content changes, helping to identify manipulations, authenticate AI creations, or detect drops in matching confidence. Once these initial projections are set, refine the disparity maps to improve frame alignment further.
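The prediction step described above can be sketched with a small constant-velocity Kalman filter that tracks one coordinate of a feature. This is a minimal illustration, not the method of any specific tool: the class name `ConstantVelocityKalman1D` and the noise values `q` and `r` are assumptions chosen for the example, and a real tracker would typically handle two or more coordinates per feature.

```python
from dataclasses import dataclass


@dataclass
class ConstantVelocityKalman1D:
    """Tracks one coordinate of a feature with a [position, velocity] state."""

    x: float = 0.0    # estimated position (e.g. pixels)
    v: float = 0.0    # estimated velocity (units per frame)
    # Symmetric covariance matrix P = [[p00, p01], [p01, p11]]
    p00: float = 1.0
    p01: float = 0.0
    p11: float = 1.0
    q: float = 1e-3   # process noise added to each diagonal term (assumed value)
    r: float = 0.25   # measurement noise variance (assumed value)

    def predict(self) -> float:
        """Project the state one frame ahead and return the predicted position."""
        self.x += self.v
        # P <- F P F^T + Q with F = [[1, 1], [0, 1]]
        p00 = self.p00 + 2.0 * self.p01 + self.p11 + self.q
        p01 = self.p01 + self.p11
        p11 = self.p11 + self.q
        self.p00, self.p01, self.p11 = p00, p01, p11
        return self.x

    def update(self, z: float) -> None:
        """Correct the prediction with an observed position z."""
        s = self.p00 + self.r      # innovation variance
        k0 = self.p00 / s          # Kalman gain for position
        k1 = self.p01 / s          # Kalman gain for velocity
        residual = z - self.x
        self.x += k0 * residual
        self.v += k1 * residual
        # P <- (I - K H) P with H = [1, 0]
        p00 = (1.0 - k0) * self.p00
        p01 = (1.0 - k0) * self.p01
        p11 = self.p11 - k1 * self.p01
        self.p00, self.p01, self.p11 = p00, p01, p11
```

In use, call `predict()` once per frame to get the expected position, search for the feature near that position, and pass the observed position to `update()`. A large gap between prediction and observation is one possible signal of a scene cut or a manipulation.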

Step 2: Refine Disparity Maps for Better Matching

After establishing initial projections, the next step is to refine them to address inconsistencies caused by rapid scene changes, lighting shifts, or content alterations.

To achieve high accuracy, combine constraint layers like Identity LoRAs for body shape, IP-Adapters for facial features, and ControlNet for pose regulation [1]. This hybrid approach ensures visual continuity even across complex sequences.

Refinement also involves automated post-processing bridges, which help eliminate minor fluctuations in character features after the initial render [1]. These temporal smoothing techniques are critical for maintaining stable identification, even when content undergoes small transformations between frames. Once the refinements stabilize alignment, you can move on to evaluating consistency with frequency-domain metrics.
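One simple form of temporal smoothing is an exponential moving average over per-frame values, such as match scores or a single feature measurement. The sketch below is a generic illustration under that assumption; the function name `smooth_scores` and the default `alpha` are hypothetical and not taken from any cited tool.

```python
from typing import Iterable, List, Optional


def smooth_scores(scores: Iterable[float], alpha: float = 0.3) -> List[float]:
    """Exponentially smooth per-frame scores.

    alpha in (0, 1] is the weight given to the newest frame; smaller values
    suppress single-frame fluctuations more strongly.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed: List[float] = []
    previous: Optional[float] = None
    for score in scores:
        if previous is None:
            previous = score  # the first frame has no history to blend with
        else:
            previous = alpha * score + (1.0 - alpha) * previous
        smoothed.append(previous)
    return smoothed
```

With `alpha=0.5`, a one-frame dropout in the sequence `[1.0, 1.0, 0.0, 1.0]` is softened to `[1.0, 1.0, 0.5, 0.75]`, so a single bad frame no longer flips a thresholded decision on its own.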

Step 3: Apply Frequency-Domain Metrics for Consistency Evaluation

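Finally, assess temporal naturalness and stability with video-level metrics. Fréchet Video Distance (FVD) compares the feature distributions of two sets of videos [2], while frequency-domain metrics such as Video Consistency Distance (VCD) analyze the amplitude and phase of frames [8]. Frequency-domain analysis can capture variations that time-domain methods might miss.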

For a thorough evaluation, include measurements like PSNR (Peak Signal-to-Noise Ratio) and SSIM (Structural Similarity Index) to ensure strong spatio-temporal fidelity [2]. These metrics are particularly valuable in content protection systems, as they directly impact matching accuracy. Maintaining robust temporal consistency allows these systems to detect protected content – even when only fragments of the original asset remain visible – making them highly effective against sophisticated piracy attempts.
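Of the metrics named above, PSNR is the simplest to compute directly. The sketch below applies the standard definition, 10 · log10(MAX² / MSE), to two frames given as flat lists of pixel values. The function name `psnr` and the flat-list frame representation are assumptions made to keep the example dependency-free.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], candidate: Sequence[float],
         max_value: float = 255.0) -> float:
    """Peak Signal-to-Noise Ratio in dB between two equally sized frames."""
    if len(reference) != len(candidate) or len(reference) == 0:
        raise ValueError("frames must be non-empty and the same size")
    # Mean squared error over all pixel values
    mse = sum((a - b) ** 2 for a, b in zip(reference, candidate)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Computing `psnr` for each pair of corresponding frames in two versions of a clip gives a per-frame fidelity curve; sudden dips in that curve point to frames where the versions diverge.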

How InCyan’s Tools Support Temporal Consistency

InCyan’s enterprise tools are built to leverage temporal consistency, ensuring dynamic content is secured and accurately matched. These tools apply the principles of temporal consistency discussed earlier to enhance both content matching and anti-piracy efforts. Let’s dive into how each tool achieves this.

Idem: Multimodal Matching That Thrives Despite Content Modifications

Idem is InCyan’s tool for identifying ownership, even when content has been heavily altered. It uses spatiotemporal feature extraction, analyzing both spatial and temporal elements to detect behaviors and patterns, rather than relying solely on static visuals that can be easily edited.

By combining spatial feature extraction through CNNs with temporal modeling via LSTMs or Transformers, Idem overcomes the limitations of frame-based matching. This hybrid approach captures visual semantics alongside long-term temporal dynamics, delivering 12-15% higher F1 scores in complex detection tasks compared to single-mode methods.

For instance, it uses optical flow estimation to track velocity vector fields, which remain consistent even when content undergoes changes like cropping, compression, or color adjustments. Additionally, Idem employs cross-sequence temporal alignment to identify shared structures across different versions of the same content. Through iterative refinement, it preserves semantic alignment, even after significant transformations.
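The idea of aligning two versions of the same content in time can be illustrated with dynamic time warping (DTW), a classic alignment technique. This is a swapped-in textbook method used only to show the concept; it is not a description of how Idem works internally. The function name `dtw_distance` and the use of one scalar descriptor per frame are assumptions for the example.

```python
from typing import List, Sequence


def dtw_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Dynamic time warping cost between two sequences of per-frame descriptors."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = cheapest alignment of the first i items of a with the first j of b
    cost: List[List[float]] = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(
                cost[i - 1][j],      # a advances, b repeats a frame
                cost[i][j - 1],      # b advances, a repeats a frame
                cost[i - 1][j - 1],  # both advance together
            )
    return cost[n][m]
```

Because DTW allows frames to repeat, a copy whose frames were duplicated or re-timed can still align with the original at zero cost, while unrelated content accumulates a large cost.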

Tectus: Invisible Watermarking to Prove Ownership

Tectus provides an invisible watermarking solution that embeds ownership markers into digital media without compromising quality. Using Temporal-Spatial Attention Mechanisms (TSAM), Tectus ensures watermarks stay intact across dynamic media environments while maintaining the motion and spatial coherence of the content.

The system employs multi-scale temporal reasoning to enhance watermark durability without degrading video quality. For example, videos processed with Tectus show a 15.3% improvement in LPIPS (Learned Perceptual Image Patch Similarity) and a 12.7% improvement in FVD (Fréchet Video Distance), two metrics where lower values indicate better quality. Additionally, content with high temporal consistency sees an 18.9% boost in user preference, outperforming methods that struggle with maintaining motion coherence.

Unlike metadata such as EXIF or IPTC – which can be stripped during transcoding or on social media platforms – Tectus watermarks remain intact throughout the content lifecycle, providing a reliable and persistent identifier.

Using ScoreDetect for Blockchain Timestamping

ScoreDetect adds a cryptographic layer to InCyan’s ecosystem by anchoring content provenance on the blockchain. It creates an immutable log, recording when content was registered and by whom, without storing the actual digital files.

"By anchoring content fingerprints and provenance claims on a suitable chain, asset owners gain an immutable log of when an assertion was made, who signed it, and how it relates to specific files or identifiers." – Nikhil John, InCyan [6]

The integration process is seamless: Tectus embeds a durable identifier in the content, while ScoreDetect anchors a cryptographic hash of that identifier and the asset on the blockchain. When Idem identifies a match in modified content, it queries the ScoreDetect ledger to confirm creation and licensing details. This shifts questions about ownership and integrity to mathematical proofs, eliminating the need for negotiation [6].

For businesses managing large volumes of content, ScoreDetect uses Merkle trees to group multiple asset hashes into a single root hash, reducing costs on public blockchains while preserving verification accuracy. It also offers developer-friendly APIs and a WordPress plugin that logs each published or updated article, creating verifiable proof of ownership while boosting SEO with enhanced E-E-A-T signals.
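The Merkle tree batching described above can be sketched with Python’s standard `hashlib`. This is a generic illustration, not ScoreDetect’s implementation: the function name `merkle_root`, the choice of SHA-256, and the rule of duplicating the last node on odd-sized levels are all assumptions (the last is one common convention among several).

```python
import hashlib
from typing import List


def merkle_root(leaf_hashes: List[bytes]) -> bytes:
    """Fold a list of asset hashes into a single root hash."""
    if not leaf_hashes:
        raise ValueError("at least one leaf hash is required")
    level = list(leaf_hashes)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumed convention: duplicate the last node
        # Hash each adjacent pair to build the next level up
        level = [
            hashlib.sha256(level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
    return level[0]
```

Only the root needs to be written on-chain. Changing any single asset hash changes the root, so one anchored value can vouch for the whole batch.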

Measuring the Impact of Temporal Consistency on Content Matching

Understanding the role of temporal consistency is crucial for evaluating its effect on content matching. By using the right metrics, you can clearly see the difference between systems that incorporate temporal consistency and those that don’t.

Key Metrics for Evaluating Temporal Consistency

Video Consistency Distance (VCD) is a metric designed to measure how well video frames maintain their attributes over time. It works by analyzing frames in the frequency domain using the Discrete Fourier Transform (DFT). Through this, it captures global attributes like color and illumination (via amplitude) and local attributes like shapes and edges (via phase components) [8]. This metric is especially helpful for detecting unnatural changes in style or object appearance that could result in false positives during content matching.
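The amplitude and phase split can be seen on a one-dimensional example. The sketch below computes a plain DFT of a single row of pixel values and separates the two components. It is an illustration of the decomposition only, not an implementation of VCD itself; the function names `dft` and `amplitude_and_phase` are hypothetical.

```python
import cmath
import math
from typing import List, Sequence, Tuple


def dft(signal: Sequence[float]) -> List[complex]:
    """Naive O(n^2) Discrete Fourier Transform of a real-valued signal."""
    n = len(signal)
    return [
        sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def amplitude_and_phase(signal: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Split the spectrum into amplitude (magnitude) and phase (angle in radians)."""
    spectrum = dft(signal)
    amplitude = [abs(c) for c in spectrum]
    phase = [cmath.phase(c) for c in spectrum]
    return amplitude, phase
```

Doubling the brightness of a row such as `[1, 2, 4, 2, 1, 0, 0, 0]` doubles every amplitude and leaves the phases unchanged, while circularly shifting the row changes the phases and leaves the amplitudes unchanged. That matches the division of labor described above: amplitude follows global intensity, phase follows where structures sit.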

Mask Consistency Score (MCS) focuses on the stability of video object segmentation across frames. It evaluates how consistently objects are tracked as they move, rotate, or partially disappear by modeling instance consistency alongside referring segmentation [7]. InCyan’s tools use MCS to ensure accurate tracking, even when objects undergo complex transformations.

The VBench-I2V Suite takes a more granular approach by breaking temporal consistency into three dimensions:

- Subject Consistency: Ensures the main object remains unchanged.

- Background Consistency: Verifies that the environment remains stable.

- Temporal Flickering: Measures unnatural changes between adjacent frames [8].

These detailed metrics allow for precise identification of where consistency issues arise, enabling targeted improvements and better performance evaluations.
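As a rough illustration of the flickering dimension, the sketch below scores a clip by the mean absolute difference between adjacent frames. It is a simplified proxy under stated assumptions, not the VBench implementation: the function name `temporal_flicker` is hypothetical, and frames are represented as flat lists of pixel values.

```python
from typing import Sequence


def temporal_flicker(frames: Sequence[Sequence[float]]) -> float:
    """Mean absolute pixel difference between adjacent frames.

    0.0 means no frame-to-frame change; larger values mean more change.
    """
    if len(frames) < 2:
        raise ValueError("at least two frames are required")
    total = 0.0
    for prev, curr in zip(frames, frames[1:]):
        if len(prev) != len(curr) or len(prev) == 0:
            raise ValueError("frames must be non-empty and the same size")
        total += sum(abs(a - b) for a, b in zip(prev, curr)) / len(prev)
    return total / (len(frames) - 1)
```

A score like this cannot tell legitimate motion from flicker by itself, so it is most meaningful on static or slow-moving shots, or when comparing two versions of the same clip.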

Matching Accuracy Before and After Temporal Consistency

Once these metrics are in place, it becomes easier to assess how matching accuracy improves with the implementation of temporal consistency. For example, in April 2026, researchers at the University of Virginia introduced the ChronoScope benchmark, which included 1.4 million question chains. Their findings showed a significant drop in accuracy when temporal scope wasn’t maintained – models struggled to retain historical accuracy without it [9].

In practical applications, using Knowledge Distillation with Semantic Similarity Propagation (KD-SSP) improved temporal consistency by 12.5% on the UAVid dataset and 6.7% on RuralScapes [10]. These increases highlight the difference between systems that lose track of content and those that reliably maintain identification across frame changes.

Multi-turn interactions further illustrate the importance of temporal grounding. A single lapse in maintaining temporal reference can drop chain accuracy to between 0.000 and 0.015 in self-conditioned settings [9]. Such lapses can disrupt the entire interaction chain, causing a cascade of errors. To prevent this, InCyan’s tools focus on preserving temporal references throughout the content lifecycle, from initial watermarking with Tectus to final verification with Idem and blockchain anchoring via ScoreDetect. This comprehensive approach ensures consistent and reliable performance.

Conclusion

Temporal consistency takes content matching to a new level by moving beyond isolated frame analysis. Instead, it ensures continuity over time, allowing AI models to achieve greater accuracy and maintain identification even when content undergoes major changes.

InCyan’s suite of tools integrates temporal consistency at every stage. Through features like invisible watermarking, multimodal matching, and blockchain timestamping, their platform ensures digital assets remain traceable and verifiable, no matter how they are altered.

The efficiency of continuous-time consistency models (sCM) is notable. A 1.5 billion parameter sCM model can produce a high-quality sample in just 0.11 seconds on a single A100 GPU, a roughly 50× speed improvement compared to traditional diffusion models [4]. This level of speed makes real-time asset protection possible, enabling organizations to proactively safeguard their media from creation to distribution, rather than reacting to infringements after the fact. For businesses managing dynamic media, blending temporal consistency with InCyan’s tools creates a seamless process that prioritizes both speed and precision while reinforcing digital rights protection.

The evolution from static, frame-by-frame analysis to systems that account for temporal awareness marks a major step forward in AI-driven content matching. As Chalk aptly states, "Temporal consistency is crucial for ensuring your model’s training performance is reflective of production" [5]. This concept is just as vital for protecting content, managing assets, and enforcing digital rights. Beyond improving model performance, this shift strengthens defenses against piracy and unauthorized use, offering a robust solution for today’s digital challenges.

FAQs

How is temporal consistency different from frame-by-frame matching?

Temporal consistency ensures that relationships across different time points remain accurate, capturing how elements change over time. This approach helps reduce estimator variance and boosts reliability, especially in dynamic media like videos. On the other hand, frame-by-frame matching treats each frame as an isolated unit, analyzing it independently. While this method can work, it’s more vulnerable to inconsistencies caused by fleeting changes or noise. That’s why temporal consistency often proves to be a more dependable choice for matching content in sequences such as videos.

What video edits (cropping, compression, re-encoding) can temporal consistency still match?

Temporal consistency helps matching survive video edits such as cropping, compression, and re-encoding. These edits change individual pixels but usually leave the motion and 3D geometric patterns across frames intact, and those are the patterns that AI detection methods depend on. Recent research on video detection and temporal coherence backs this up, showing that these core patterns remain intact despite such adjustments.

How do I measure temporal consistency in my matching system (FVD, VCD, MCS)?

Temporal consistency is assessed using metrics such as FVD (Fréchet Video Distance), VCD (Video Consistency Distance), and MCS (Mask Consistency Score). Each of these plays a specific role in evaluating how well content remains coherent over time:

- FVD measures temporal fidelity by comparing feature distributions, helping to gauge how naturally the content flows across frames.

- VCD analyzes frames in the frequency domain, using amplitude to track global attributes like color and illumination and phase to track local attributes like shapes and edges.

- MCS evaluates how stable object segmentation stays across frames as objects move, rotate, or partially disappear.

These metrics work together to ensure videos deliver stable and coherent content over time.