Audio watermarking embeds hidden signals into audio files to protect copyrights and verify ownership. Modern deep learning methods outperform older techniques by improving audio quality, resilience to transformations (like MP3 compression), and data embedding capacity. Here’s what matters most:

- Imperceptibility: Keeps watermarks inaudible while maintaining high audio quality. Metrics like Signal-to-Noise Ratio (SNR), Objective Difference Grade (ODG), and Noise-to-Mask Ratio (NMR) measure this.

- Resilience: Ensures watermarks survive audio changes like compression, noise, resampling, and cropping. Deep learning models use attack layers and psychoacoustic principles to improve durability.

- Capacity: Balances the amount of data embedded with audio quality and resilience. Advanced models achieve 32 bits per second without noticeable degradation.

Tools like SilentCipher-16k and platforms like ScoreDetect combine speed, efficiency, and accuracy to protect media content across industries. Challenges remain with advanced attacks, but these systems continue to evolve.

Audio Watermarking Performance Metrics Comparison: SilentCipher vs Traditional Models

Responsible AI for Offline Plugins – Tamper-Resistant Neural Audio Watermarking – Kanru Hua ADC 2024

sbb-itb-738ac1e

Imperceptibility: Maintaining Audio Quality

Imperceptibility is all about whether a watermark can be embedded in audio without listeners noticing any drop in quality. The goal is to add a digital signature while keeping the audio sounding just as clear and professional as the original, a key component of digital piracy solutions with watermarking.

Perceptual Quality Metrics

To measure imperceptibility, the industry uses a variety of metrics to evaluate how well audio quality is maintained. Here’s a breakdown of the most commonly used ones:

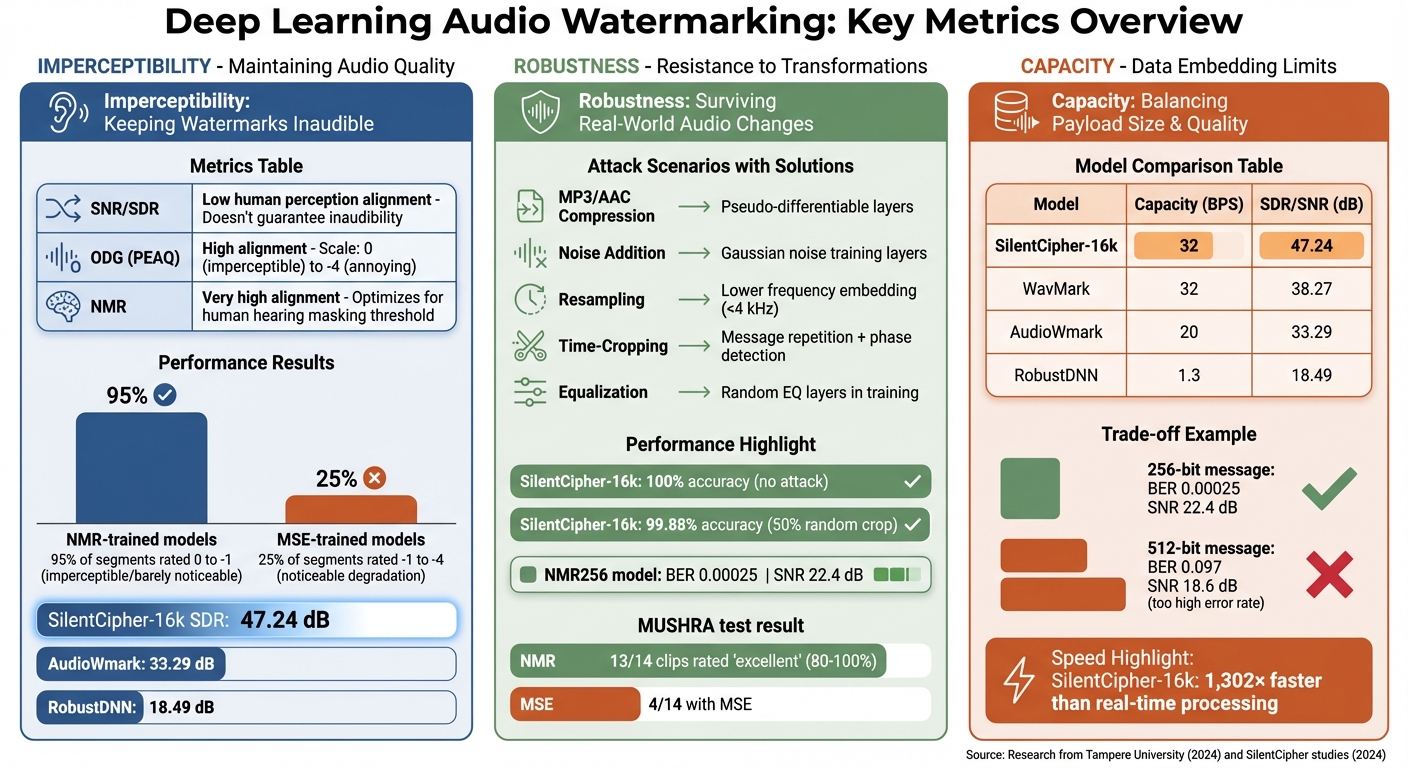

- Signal-to-Noise Ratio (SNR) and Signal-to-Distortion Ratio (SDR): These metrics compare the power of the original audio signal to that of the watermark noise or distortion. However, high SNR or SDR values don’t always mean the watermark is inaudible [2][3].

- Objective Difference Grade (ODG): Part of the PEAQ standard, ODG rates audio quality on a scale from 0 (imperceptible) to -4 (very annoying). Unlike SNR and SDR, it aligns more closely with human perception, addressing the limitations of purely numerical metrics [3].

- Noise-to-Mask Ratio (NMR): This measures how watermark noise compares to the masking threshold of human hearing. It considers how louder sounds can naturally mask quieter ones, making this metric particularly effective for ensuring inaudibility [3].

| Metric | Description | Human Perception Alignment |

|---|---|---|

| SNR / SDR | Ratio of signal power to noise/distortion power | Low; doesn’t guarantee inaudibility [2][3] |

| ODG (PEAQ) | Rates audio quality from 0 (imperceptible) to -4 (annoying) | High; standardized by ITU-R [3] |

| NMR | Compares noise to the ear’s masking threshold | Very high; optimizes deep learning loss functions [3] |

These metrics serve as the foundation for training deep learning models to embed watermarks without compromising audio quality.

Deep Learning Improvements

Deep learning has taken watermarking to the next level by incorporating psychoacoustic principles – essentially, how humans perceive sound. Traditional approaches often relied on minimizing statistical distortion using Mean Square Error (MSE) loss functions. But newer models use NMR loss, which accounts for frequency masking. This phenomenon occurs when low-energy sounds are rendered inaudible by louder sounds at similar frequencies.

Here’s how these advancements have improved results:

- Models trained with NMR loss achieved PEAQ ratings between 0 and -1 for over 95% of audio segments, meaning the watermarks were either imperceptible or barely noticeable.

- In contrast, about 25% of segments watermarked using MSE-trained models scored between -1 and -4, indicating noticeable degradation [3].

- SilentCipher-16k, a state-of-the-art system, achieved an impressive SDR of 47.24 dB, outperforming earlier models like AudioWmark (33.29 dB) and RobustDNN (18.49 dB) [2].

SilentCipher stands out by employing a unique technique: it sets the watermark phase to π relative to the original carrier and keeps the watermark’s magnitude below the original signal’s frequency bins. This psychoacoustic thresholding ensures that the watermark remains inaudible, even in professional audio environments. Plus, users can tweak the SDR threshold during inference without retraining the model, making it highly adaptable [2].

Robustness: Resistance to Audio Transformations

Robustness evaluates whether a watermark can withstand real-world audio changes, whether intentional or accidental. These changes include MP3 and AAC compression, noise addition, resampling, and editing. If a watermark vanishes after basic processing, it fails its purpose for copyright protection or ownership confirmation.

"The watermarks need to be robust against intentional or unintentional attacks such as compression, filtering or noise addition." – Martin Moritz, Toni Olán, and Tuomas Virtanen, Tampere University [3]

Common Transformation Scenarios

Audio watermarks face numerous challenges in practical use. Lossy compression formats like MP3 and AAC are designed to reduce file size by discarding "irrelevant" audio data, which can unintentionally strip away weak watermarks. Noise, whether from recording environments or transmission, can interfere with the watermark’s signal. Resampling, which changes sample rates, shifts frequency components and complicates detection. Time-cropping and jitter introduce synchronization issues, making it difficult for the extractor to locate the watermark in edited files. For instance, removing a few seconds from the start of a track or suppressing random samples can disrupt the system’s ability to detect the watermark, often requiring synchronization codes or pattern-matching techniques to restore functionality [3].

Deep Learning-Based Solutions

To address these challenges, deep learning frameworks have adopted adaptive training methods. These systems include attack layers between the encoder and decoder, effectively training the network to embed watermarks that can survive specific distortions. For example, introducing noise, filtering, or sample suppression during training forces the model to develop features that are more resilient [3].

Pseudo-differentiable compression layers are another innovation. Since codecs like MP3 and AAC are not differentiable, researchers apply actual compression during the forward pass of training but bypass it during the backward pass. This approach allows models to adapt to codec-induced artifacts without interrupting the training process [2].

In August 2024, researchers Martin Moritz, Toni Olán, and Tuomas Virtanen from Tampere University introduced an audio watermarking system that leveraged a Noise-to-Mask Ratio (NMR) loss function. Their NMR256 model achieved an impressive Bit Error Rate (BER) of 0.00025 and a Signal-to-Noise Ratio (SNR) of 22.4 dB. Subjective MUSHRA tests with 20 naive listeners revealed that 13 out of 14 clips marked with NMR-based models earned an "excellent" quality rating (80–100%), far surpassing traditional MSE-based models, where only 4 clips reached that standard [3].

Frequency-specific embedding offers additional protection, focusing the watermark within critical frequency bands (typically 80 Hz to 5.6 kHz) to guard against basic filtering [3]. Advanced models like SilentCipher-16k demonstrate remarkable performance, achieving 100% accuracy under no-attack conditions and maintaining 99.88% accuracy even after a 50% random crop attack [2].

| Transformation Scenario | Effect on Watermark | Deep Learning Solution |

|---|---|---|

| MP3/AAC Compression | Removes "irrelevant" audio data, weakening watermark | Pseudo-differentiable layers and psychoacoustic thresholding |

| Noise Addition | Masks watermark signal patterns | Training with additive Gaussian noise layers |

| Resampling | Shifts frequency components, complicating detection | Embedding messages in lower frequency bins (e.g., below 4 kHz) |

| Time-Cropping | Creates desynchronization issues | Brute-force phase detection and message repetition |

| Equalization | Alters frequency response | Random equalization layers during training |

These advancements in robustness work hand-in-hand with capacity optimization, ensuring that watermarks remain imperceptible while retaining their resilience.

Capacity: Data Embedding Limits

When it comes to audio watermarking, capacity refers to the maximum amount of data that can be hidden within an audio file without compromising its quality or the watermark’s resilience. If too little data is embedded, the watermark becomes impractical. On the other hand, embedding too much data can degrade the audio quality and weaken the watermark’s durability.

Payload Size Trade-Offs

The size of the payload – how much data is embedded – comes with trade-offs. Psychoacoustic masking thresholds play a critical role here. Essentially, the watermark’s energy must stay below the threshold of human hearing across various frequencies. Research from Tampere University highlights this balance. When the message length increased from 256 to 512 bits in a 1.1-second audio segment, the Bit Error Rate (BER) rose sharply from 0.00025 to 0.097, while the Signal-to-Noise Ratio (SNR) dropped from 22.4 dB to 18.6 dB [3].

"For this longer messages the BER is becoming very high and the SNR slightly worse and those models would not be accurate enough to be used for efficient audio watermarking." – Martin Moritz, Toni Olán, Tuomas Virtanen, Tampere University [3]

Higher sampling rates can help expand capacity by offering more frequency bins for data embedding. For instance, increasing the sampling rate from 16 kHz to 44.1 kHz allows models to scale from 32-bit to 40-bit message lengths, making them suitable for professional-grade audio applications [2]. However, there’s a catch: capacity often competes with robustness. To withstand attacks like cropping or noise, messages are repeated across the time axis, sacrificing unique payload size for redundancy [2].

These challenges pave the way for deep learning techniques that aim to optimize capacity while preserving audio quality.

Optimization with Deep Learning

Deep learning models strike a balance between maximizing capacity and maintaining audio integrity. They do this by optimizing message extraction (using Binary Cross Entropy) and preserving audio quality (using loss functions like MSE or NMR). Take the SilentCipher-16k model, for example – it achieves a capacity of 32 bits per second (BPS) with a Signal-to-Distortion Ratio (SDR) of 47.24 dB. This is a huge leap compared to older methods like RobustDNN, which offers only 1.3 BPS at 18.49 dB [2]. Additionally, SilentCipher-16k processes audio at speeds up to 1,302 times faster than real-time [2].

Frequency-domain optimization is another way to boost capacity. By embedding watermarks within specific frequency bands (typically between 80 Hz and 5.6 kHz), models ensure that the watermark isn’t easily removed by standard filtering techniques, all while maintaining high payload limits [3]. During training, pseudo-differentiable compression layers simulate real-world codec processing (like MP3 or AAC), ensuring the watermark survives compression [2]. To further enhance reliability, the decoder averages predictions across repeated message segments, reducing errors and increasing effective capacity even in noisy conditions [2].

| Model | Capacity (BPS) | SDR/SNR (dB) |

|---|---|---|

| SilentCipher-16k | 32 | 47.24 |

| WavMark | 32 | 38.27 |

| AudioWmark | 20 | 33.29 |

| RobustDNN | 1.3 | 18.49 |

Evaluation Frameworks and Benchmarks

Test Dataset Diversity

When it comes to evaluating audio watermarking systems, having a variety of test datasets is essential. Different audio types – like speech, music, and environmental sounds – each bring unique challenges that can influence how well a watermarking method performs. By testing across a broad spectrum of audio, researchers can better understand a system’s reliability under different conditions.

For example, in September 2024, researchers assessing the SilentCipher model used a massive dataset totaling 372 hours. This included speech datasets like VCTK, NUS-48E, and NHSS (about 47 hours combined), along with the multilingual VoxPopuli corpus to evaluate performance across various languages. For music and instrumental tracks, they turned to MUSDB18, which offers 6.5 hours of multi-track music. Additionally, they included an internal dataset spanning 321 hours, covering diverse broadcast scenarios [1][2]. This is particularly relevant for watermarking for live sports streams, where real-time robustness is paramount.

This level of diversity is critical. A model trained on just one type of audio – like speech – might struggle when applied to music or environmental sounds. By using varied datasets, researchers can establish a more comprehensive understanding of a system’s strengths and weaknesses, paving the way for standardized benchmarks and more effective AI content protection strategies.

Standardized Benchmarks

Standardized benchmarks provide a consistent way to measure and compare the performance of different watermarking algorithms. They focus on key metrics – imperceptibility, robustness, and capacity – under controlled testing conditions. These benchmarks often use well-known baseline models like WavMark, RobustDNN, Audiostamp, Dear (designed for re-recording resilience), and AudioWmark [1][2].

To simulate real-world challenges, benchmarks rely on standardized attack suites. These include:

- Compression: MP3, OGG, and AAC formats at bitrates of 64, 128, and 256 kbps.

- Signal Processing: Additive Gaussian noise at 40 dB and random equalization.

- Temporal Modifications: Techniques like time-jittering, random resampling (e.g., 6.4 kHz to 16 kHz), and random cropping of up to 50% of the audio duration [2].

Performance is assessed using both objective metrics – such as SDR (Signal-to-Distortion Ratio), SNR (Signal-to-Noise Ratio), BER (Bit Error Rate), and runtime efficiency – and subjective evaluations. For instance, blind tests by audio experts help gauge perceived inaudibility [1][2].

A practical example comes from Martin Moritz and his team at Tampere University. They tested their NMR-trained model using the FMA (Free Music Archive) dataset, achieving over 95% imperceptibility ratings for audio segments [3]. This highlights how benchmarks not only measure technical performance but also incorporate human perception to ensure real-world applicability.

Current Challenges and Limitations

Vulnerabilities to Advanced Transformations

Deep learning–based audio watermarking has made strides, but no system can currently withstand every type of distortion. A 2025 study by the University of Hawaii tested 22 watermarking schemes against 22 removal attacks using over 200,000 audio samples. The researchers uncovered eight new black-box attacks that proved highly effective against all the tested methods [4].

A major challenge comes from distortions introduced by AI technologies. Generative AI tools like Voice Conversion (VC) and Text-to-Speech (TTS) can re-synthesize audio, effectively removing embedded watermarks while retaining excellent audio quality [4].

"None of the surveyed watermarking schemes is robust enough to withstand all tested distortions in practice." – Yizhu Wen et al., University of Hawaii at Manoa [4]

Physical-level distortions also present significant hurdles. Many watermarks fail to survive over-the-air transformations, such as when audio is played through a speaker and then re-recorded. This issue, often referred to as the "analog hole", remains a persistent vulnerability. Watermarks relying on frequency-domain patterns are especially susceptible to attacks like filtering and spectral shaping. Additionally, aggressive low-bitrate compression (e.g., 64 kbps MP3, OGG, or AAC) tends to eliminate the subtle modifications introduced by watermarking algorithms [4][2]. These challenges are further amplified by common post-production processes, making watermark recovery even more difficult.

Post-Processing Modifications

Even standard post-processing techniques can disrupt watermark integrity. Methods like denoising and equalization often impair detection. Similarly, re-sampling and low-pass filtering – especially at 3.5 kHz or 7 kHz – can strip watermarks embedded in higher-frequency regions [2][3].

Synchronization issues exacerbate these problems. Techniques like time-jittering, sample suppression, and random cropping (which can remove up to 50% of the audio’s duration) cause desynchronization, making watermark extraction nearly impossible. For instance, advanced models like SilentCipher-16k maintain 100% detection accuracy under additive Gaussian noise at 40 dB. However, baseline models such as AudioWmark show dramatic performance drops – down to 1.22% accuracy under random cropping and 26.11% accuracy when mixed with speech at -15 dB [2][3].

The core issue is that once a watermark becomes publicly accessible, attackers have numerous ways to compromise it without significantly reducing audio quality. These challenges highlight the ongoing need for how AI improves audio watermarking accuracy to ensure reliable content protection.

Practical Applications and Tools

Content Protection and Copyright Management

Deep learning watermarking is finding its way into various industries, offering practical solutions for content protection and copyright management. For instance, music and entertainment companies now embed transparent ownership messages into audio files, ensuring that the sound quality remains intact across digital distribution formats. Tools like SilentCipher-44k are used by professional studios to embed copyright identifiers while maintaining studio-quality audio fidelity [2].

This technology also plays a key role in identifying synthesized content created by generative tools like voice conversion and text-to-speech. By embedding watermarks, it becomes possible to protect against deepfake audio and manipulation of content without authorization [2]. In the realm of audio journalism, these systems are instrumental in verifying content authenticity and safeguarding intellectual property, especially in fast-paced digital environments where content redistribution can happen almost instantly.

One standout feature of these systems is their speed. For example, the SilentCipher-16k model can watermark audio at a rate 1,302 times faster than real-time. This efficiency makes large-scale deployment practical for media companies managing extensive audio libraries, directly addressing operational challenges [2].

ScoreDetect for Audio Watermarking

Platforms like ScoreDetect are making these advancements accessible and actionable. Developed by InCyan, ScoreDetect integrates deep learning watermarking principles into an enterprise-grade platform for content protection. Its invisible watermarking technology prevents unauthorized use while preserving the audio’s perceptual quality, solving the challenge of embedding identifiers without affecting the listening experience.

ScoreDetect takes copyright protection a step further by combining digital watermarking with blockchain-backed verification and automated enforcement workflows. Instead of storing the actual audio files, the platform creates a checksum of the content, offering verifiable proof of ownership while maintaining privacy for sensitive material.

One of its standout features is its intelligent web scraping capability, which boasts a 95% success rate in bypassing prevention measures. When unauthorized use is detected, ScoreDetect automatically generates delisting notices. These notices have an impressive success rate, achieving over a 96% takedown rate, making it a powerful tool for organizations in media, entertainment, academia, and content creation.

Conclusion

Deep learning-based audio watermarking relies on finding the right balance between imperceptibility, robustness, and capacity. However, achieving these goals isn’t always straightforward. As the SilentCipher research team pointed out:

"Higher SDR doesn’t necessarily guarantee imperceptible artifacts to human perception. This discrepancy arises from the neglect of psychoacoustics, which determines human perception of artefacts in presence of the carrier" [2].

To address this, the transition from traditional Mean Square Error (MSE) to Noise-to-Mask Ratio (NMR) loss functions has been a game-changer. NMR-based models have shown much better perceptual quality ratings, bridging the gap between technical performance and human auditory perception [3].

When it comes to robustness, modern systems have made strides in handling real-world transformations like MP3 compression (64 kbps) or AAC encoding. This is achieved by integrating pseudo-differentiable layers into training, which simulate these transformations without disrupting backpropagation. SilentCipher exemplifies this approach, delivering strong performance while maintaining scalability [2]. This balance between robustness and efficiency is crucial for the success of deep learning watermarking systems.

The challenge of capacity remains just as important. While embedding a 256-bit message can result in a Bit Error Rate (BER) as low as 0.00025, doubling the payload to 512 bits significantly increases the BER to 0.097 [3]. This highlights the need for careful optimization to align message size with specific application requirements.

For real-world deployment, tools like ScoreDetect offer practical solutions. They combine invisible watermarking with blockchain-backed verification and automated enforcement workflows, making them highly effective for protecting content across industries like media, entertainment, and academia. With a 95% success rate in web scraping and a takedown rate exceeding 96%, ScoreDetect demonstrates how watermarking principles can deliver measurable results. Striking this balance between technology and usability is essential for advancing digital content protection.

FAQs

Which metric best reflects whether a watermark is truly inaudible?

When it comes to determining if a watermark is genuinely undetectable in audio, psychoacoustic loss functions are the gold standard. One example is the Noise-to-Mask Ratio (NMR) loss, which closely aligns with how humans perceive sound. By using these metrics, you can ensure that the watermark stays hidden without compromising the overall audio quality.

What audio edits most often disrupt watermark detection?

Audio tweaks that often interfere with watermark detection include desynchronization, temporal cuts, compression, noise addition, and resampling. These changes can compromise the embedded watermark, making it more challenging to identify.

How do you choose the right payload size without hurting quality?

Choosing the right payload size in deep learning-based audio watermarking is all about finding the sweet spot between audio quality and watermark durability. If the payload is too large, it can noticeably degrade the sound. On the other hand, a payload that’s too small might reduce how reliably the watermark can be detected.

To tackle this, advanced techniques incorporate psychoacoustic loss functions and train models with real-world distortions to maintain audio quality. Tools like Signal-to-Noise Ratio (SNR) and perceptual quality assessments are key for determining the ideal payload size, ensuring the watermark remains both resilient and undetectable.